05--kubernetes组件与安装

k8s详细概念和两种安装方式,详细步骤会在下文列出,文章很长,根据目录取用。均配置国内源,保证安装成功率。

前言:终于写到kubernetes(k8s),容器编排工具不止k8s一个,它的优势在于搭建集群,也是传统运维和云计算运维的第一道门槛,这里会列出两种安装方式,详细步骤会在下文列出,文章很长,根据目录取用。

1、kubernetes基础名词

官网地址:Kubernetes

中文网地址:Kubernetes 中文网 官网

一个简单的k8s集群组成,包含以下内容

k8s-master 负责任务调度,控制节点

k8s-node1 承载运⾏pod(容器)

k8s-node2 承载运⾏pod(容器)

Master

Master主要负责资源调度,控制副本,和提供统⼀访问集群的⼊⼝。--核⼼节点也是管理节点

Node

Node是Kubernetes集群架构中运⾏Pod的服务节点。Node是Kubernetes集群操作的单元,⽤来承 载被分配Pod的运⾏,是Pod运⾏的宿主机,由Master管理,并汇报容器状态给Master,同时根据Master要求管理容器⽣命周期。同时k8s自带高可用,例如一个pod(容器集合)被创建在node1上,node1故障,pod自动转移到其他健康的node节点。

Node IP

Node节点的IP地址,是Kubernetes集群中每个节点的物理⽹卡的IP地址,是真实存在的物理⽹ 络,所有属于这个⽹络的服务器之间都能通过这个⽹络直接通信(这里指演示用的虚拟机的ip);

pod

Pod直译是⾖荚,可以把容器想像成⾖荚⾥的⾖⼦,把⼀个或多个关系紧密的⾖⼦包在⼀起就是⾖荚 (⼀个Pod)。

在k8s中我们不会直接操作容器,⽽是把容器包装成Pod再进⾏管理运⾏于Node节点 上, 若⼲相关容器的组合被称为pod。

Pod内包含的容器运⾏在同⼀宿主机上,使⽤相同的⽹络命名空间、IP地址和端⼝,能够通过localhost进⾏通信。

Pod是k8s进⾏创建、调度和管理的最⼩单位,它提供了⽐容器更⾼层次的抽象存在,使得部署和管理更加灵活。⼀个Pod可以包含⼀个容器或者多个相关容器。 Pod 就是 k8s 集群⾥的"应⽤";⽽⼀个平台应⽤,可以由多个容器组成。

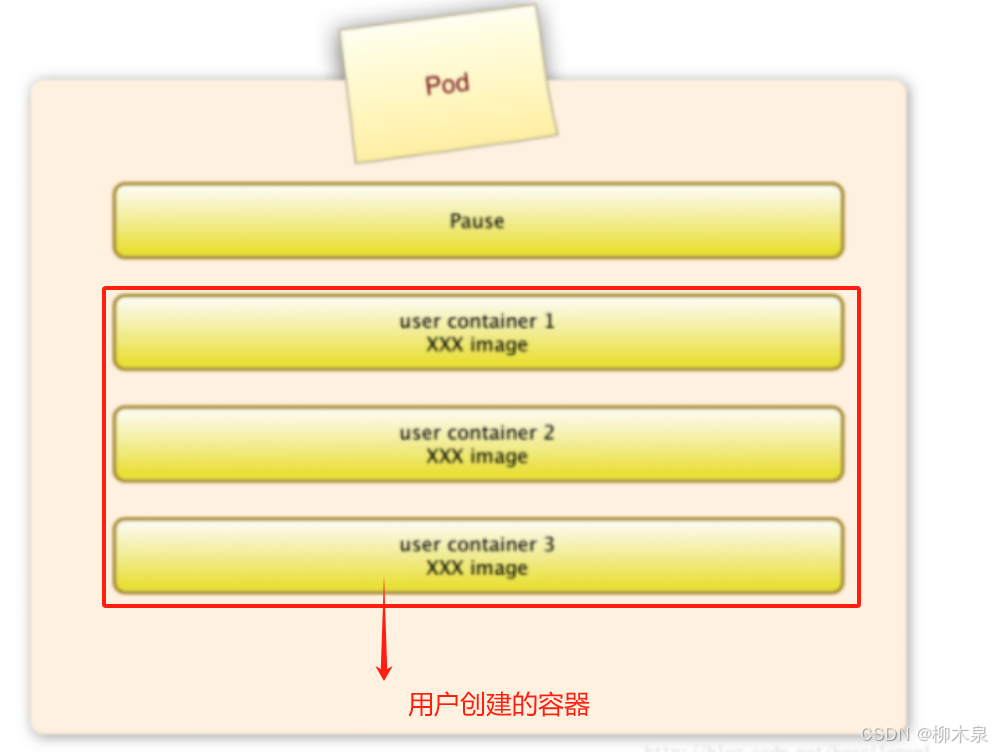

pod的具象图如下:

pause容器

每个Pod中都有⼀个pause容器,pause容器做为Pod的⽹络接⼊点,Pod中其他的容器会使⽤容器映射模式启动并接⼊到这个pause容器,解决pod内容器相互间通讯问题。

属于同⼀个Pod的所有容器共享⽹络的namespace。

Pod Volume(卷挂载)

Docker Volume对应Kubernetes中的Pod Volume;

数据卷,挂载宿主机⽂件、⽬录或者外部存储到Pod中,为应⽤服务提供存储,也可以解决Pod中容器之间共享数据。

资源限制

每个Pod可以设置限额的计算机资源有CPU和Memory;

pod无法挂起,只能创建/运行和删除

Event

事件:在 Kubernetes 中,Pod 可以产生各种事件(Events),这些事件记录了 Pod 的状态变化和相关操作,例如创建、删除、调度、启动失败等。事件帮助管理员和开发者了解和诊断 Pod 的运行状态。Event通常关联到具体资源对象上,是排查故障的重要参考信息

Pod IP

Pod的IP地址,是Docker Engine根据docker⽹桥的IP地址段进⾏分配的,通常是⼀个虚拟的⼆层⽹络,位于不同Node上的Pod能够彼此通信,需要通过Pod IP所在的虚拟⼆层⽹络进⾏通信,⽽ 真实的TCP流量则是通过Node IP所在的物理⽹卡流出的。

2、Kubernetes架构和组件

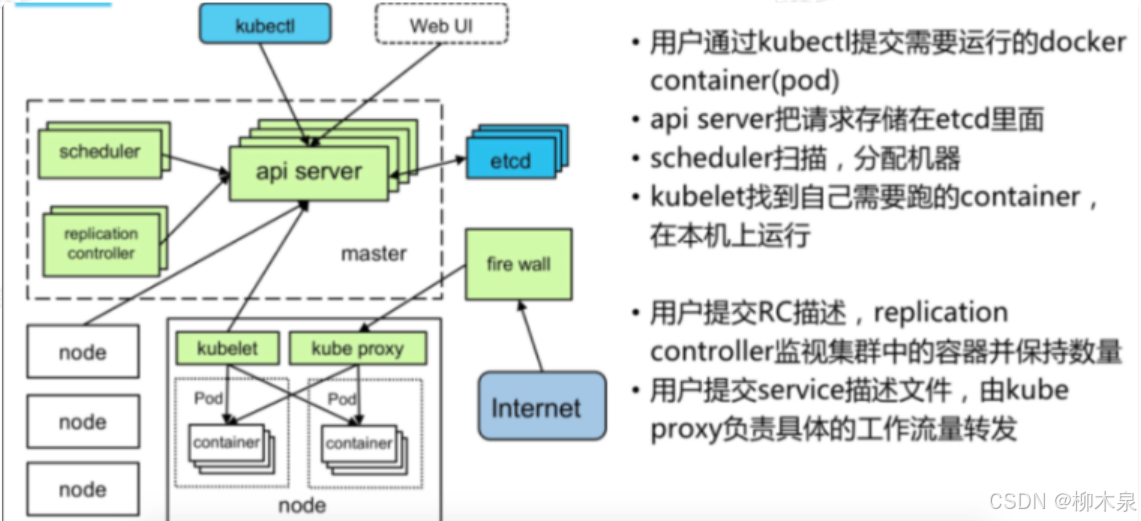

架构图:

这里我用一个流程来说明各个组件之间的作用

|

1. 用户提交请求 2. API Server 接受请求 3. 调度器(Scheduler)读取信息 4. 调度器选择节点并返回请求 5. 节点组件的角色 6. Kubelet 接收任务并创建容器 7. 维持 Pod 的副本数量 8. etcd 数据库的作用 9. Pod 的暴露和流量转发 |

各个组件概念如下:

Kubernetes Master

Kubernetes Master是集群控制节点,负责整个集群的管理和控制,所有控制命令基本都是由Master节点执行。主要组件包括:

-

Kubernetes API Server:

- 系统入口,封装核心对象的增删改查操作。

- 通过RESTful API接口提供服务,数据持久化到Etcd中。

-

Kubernetes Scheduler:

- 为新建Pod选择节点,进行资源调度。

- 组件可替换,支持使用其他调度器。

-

Kubernetes Controller:

- 负责执行各种控制器以保证Kubernetes的正常运行,主要控制器包括:

- Replication Controller:管理Pod副本数量,确保实际副本数量与定义一致。

- Deployment Controller:管理Deployment及其Pod,控制Pod更新。

- Node Controller:检查Node健康状态,标识失效和正常Node。

- Namespace Controller:清理无效Namespace及其API对象。

- Service Controller:管理服务负载和代理。

- EndPoints Controller:关联Service和Pod,创建和更新Endpoints。

- Service Account Controller:创建和管理Service Account及其Secret。

- Persistent Volume Controller:管理Persistent Volume和Claim,执行绑定和清理。

- Daemon Set Controller:创建Daemon Pod,确保在指定Node上运行。

- Job Controller:管理Job及其一次性任务Pod。

- Pod Autoscaler Controller:实现Pod的自动伸缩,根据监控数据和策略进行伸缩。

- 负责执行各种控制器以保证Kubernetes的正常运行,主要控制器包括:

Kubernetes Node

Node节点是Kubernetes集群中的工作负载节点,Master负责分配工作负载(如Docker容器),并在节点宕机时将工作负载转移到其他节点。主要组件包括:

-

Kubelet:

- 管理容器,从API Server接收Pod创建请求,启动、停止容器,监控状态并报告给API Server。

-

Kubernetes Proxy:

- 为Pod创建代理服务,获取Service信息并进行请求路由和转发,实现虚拟转发网络。

-

Docker Engine:

- 负责容器的创建和管理。

-

Flanneld网络插件:

- 提供网络功能。

数据库etcd:

- 分布式键值存储系统,用于保存集群状态数据(如Pod、Service等),推荐独立部署。

3、Kubernetes部署方式

⽅式1. minikube

Minikube是⼀个⼯具,可以在本地快速运⾏⼀个单点的Kubernetes,尝试Kubernetes或⽇常开发 的⽤户使⽤。不能⽤于⽣产环境。

官⽅地址:https://kubernetes.io/docs/setup/minikube/

⽅式2. kubeadm

Kubeadm也是⼀个⼯具,提供kubeadm init和kubeadm join,⽤于快速部署Kubernetes集群。

官⽅地址:https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

⽅式3.yum安装

直接使⽤epel-release yum源,缺点就是版本较低 1.5

⽅式4. ⼆进制包

从官⽅下载发⾏版的⼆进制包,⼿动部署每个组件,组成Kubernetes集群。

4、kubernetes部署(二进制)

| ⽬标任务: 1、Kubernetes集群部署架构规划 2、部署Etcd数据库集群 3、在Node节点安装Docker 4、部署Flannel⽹络插件(用于不同node上容器之间通信) 5、在Master节点部署组件(api-server,schduler,controller-manager) 6、在Node节点部署组件(kubelet,kube-proxy) 7、查看集群状态 8、运⾏⼀个测试示例 9、部署Dashboard(Web UI) 可选 |

系统环境:centos7,开启ssh登录,关闭防火墙,关闭selinux、设置静态ip、时间同步

k8s所需安装包

链接:https://pan.baidu.com/s/1_SU8KtVuzUBh-P4FJW-8GQ?pwd=bo2j

提取码:bo2j 修改主机名,3个节点互相配置域名解析,角色如下(一般情况下是有两个master做高可用,这里只用一个,后续会演示集群扩展)

| IP | 角色 |

|---|---|

| 192.168.188.101 | k8s-master1 |

| 192.168.188.102 | k8s-node1 |

| 192.168.188.103 | k8s-node2 |

4.1、部署Etcd集群

这里是由三个节点组成的etcd集群,正常情况etcd数据库应当和k8s分开部署。

三个etcd之间通信需要https协议,所以需要先做自签名证书。

下载cfssl工具:下载的这些是可执行的二进制命令直接用就可以了

[root@k8s-master1 ~]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@k8s-master1 ~]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@k8s-master1 ~]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

外网环境不稳定的使用云盘下载上传

[root@k8s-master1 ~]# ls

anaconda-ks.cfg cfssl-certinfo_linux-amd64 cfssljson_linux-amd64 cfssl_linux-amd64

[root@k8s-master1 ~]# chmod +x cfssl*

[root@k8s-master1 ~]# ls

anaconda-ks.cfg cfssl-certinfo_linux-amd64 cfssljson_linux-amd64 cfssl_linux-amd64

[root@k8s-master1 ~]# mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@k8s-master1 ~]# mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@k8s-master1 ~]# mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

[root@k8s-master1 ~]# mkdir cert

[root@k8s-master1 ~]# cd cert/

[root@k8s-master1 cert]# vim ca-config.json #生成ca中心的文件

[root@k8s-master1 cert]# cat ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

[root@k8s-master1 cert]#

[root@k8s-master1 cert]# vim ca-csr.json #生成ca中心的请求文件

[root@k8s-master1 cert]# cat ca-csr.json

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

[root@k8s-master1 cert]#

[root@k8s-master1 cert]# vim server-csr.json #服务器证书的请求文件

[root@k8s-master1 cert]# cat server-csr.json

{

"CN": "etcd",

"hosts": [

"192.168.188.101",

"192.168.188.102",

"192.168.188.103"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

[root@k8s-master1 cert]#

生成ca认证中心

[root@k8s-master1 cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2024/08/11 11:00:59 [INFO] generating a new CA key and certificate from CSR

2024/08/11 11:00:59 [INFO] generate received request

2024/08/11 11:00:59 [INFO] received CSR

2024/08/11 11:00:59 [INFO] generating key: rsa-2048

2024/08/11 11:00:59 [INFO] encoded CSR

2024/08/11 11:00:59 [INFO] signed certificate with serial number 405945695011591619580436183577511508583558427674

[root@k8s-master1 cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem server-csr.json

[root@k8s-master1 cert]#

生成了ca-key.pem(ca私钥) ca.pem(ca证书)根据ca证书和私钥根据证书请求文件,签发证书

[root@k8s-master1 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

2024/08/11 11:06:39 [INFO] generate received request

2024/08/11 11:06:39 [INFO] received CSR

2024/08/11 11:06:39 [INFO] generating key: rsa-2048

2024/08/11 11:06:39 [INFO] encoded CSR

2024/08/11 11:06:39 [INFO] signed certificate with serial number 222112643168924562880572112923105301745282612424

2024/08/11 11:06:39 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master1 cert]# ls

ca-config.json ca-csr.json ca.pem server-csr.json server.pem

ca.csr ca-key.pem server.csr server-key.pem

[root@k8s-master1 cert]#

查看生成的内容

[root@k8s-master1 cert]# ls *pem

ca-key.pem ca.pem server-key.pem server.pem

ca证书

ca私钥

server证书

server证书对应的私钥安装Etcd

二进制包下载地址:

Release v3.2.12 · etcd-io/etcd · GitHub

[root@k8s-master1 cert]# cd ..

[root@k8s-master1 ~]# wget https://github.com/etcd-io/etcd/releases/download/v3.2.12/etcd-v3.2.12-linux-amd64.tar.gz

# 云盘链接里面有

[root@k8s-master1 ~]# ls

anaconda-ks.cfg cert etcd-v3.2.12-linux-amd64.tar.gz

将这个包分发给另外两个节点(需要部署etcd的节点)

[root@k8s-master1 ~]# scp etcd-v3.2.12-linux-amd64.tar.gz k8s-node1:/root/

[root@k8s-master1 ~]# scp etcd-v3.2.12-linux-amd64.tar.gz k8s-node2:/root/

在三个节点执行以下操作

# mkdir -p /opt/etcd/{bin,cfg,ssl}

#创建文件存放etcd的程序,配置文件,证书

# tar -zxvf etcd-v3.2.12-linux-amd64.tar.gz

# ls

anaconda-ks.cfg cert etcd-v3.2.12-linux-amd64 etcd-v3.2.12-linux-amd64.tar.gz

# ls etcd-v3.2.12-linux-amd64

Documentation etcd etcdctl README-etcdctl.md README.md READMEv2-etcdctl.md

# mv etcd-v3.2.12-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

# ls /opt/etcd/bin/

etcd etcdctl

编写etcd配置文件

注意节点名,ip配置,删除所有注释,每行结尾不能有空格,使用set list检查

[root@k8s-master1 ~]# vim /opt/etcd/cfg/etcd

#[Member]

ETCD_NAME="etcd01" #节点名称,各个节点不能相同

ETCD_DATA_DIR="/var/lib/etcd/default.etcd" #启动后自动创建,提前创建需要提供权限

ETCD_LISTEN_PEER_URLS="https://192.168.188.101:2380" #写本节点的ip

ETCD_LISTEN_CLIENT_URLS="https://192.168.188.101:2379" #写本节点的ip

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.188.101:2380" #写本节点的ip

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.188.101:2379" #写本节点的ip

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.188.101:2380,etcd02=https://192.168.188.102:2380,etcd03=https://192.168.188.103:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

参数解释:

* ETCD_NAME 节点名称,每个节点名称不一样

* ETCD_DATA_DIR 存储数据目录(他是一个数据库,不是存在内存的,存在硬盘中的,所有和k8s有关的信息都会存到etcd里面的)

* ETCD_LISTEN_PEER_URLS 集群通信监听地址

* ETCD_LISTEN_CLIENT_URLS 客户端访问监听地址

* ETCD_INITIAL_ADVERTISE_PEER_URLS 集群通告地址

* ETCD_ADVERTISE_CLIENT_URLS 客户端通告地址

* ETCD_INITIAL_CLUSTER 集群节点地址

* ETCD_INITIAL_CLUSTER_TOKEN 集群Token

* ETCD_INITIAL_CLUSTER_STATE 加入集群的当前状态,new是新集群,existing表示加入已有集群scp到node上,在node上根据情况修改文件内容

[root@k8s-master1 ~]# scp /opt/etcd/cfg/etcd k8s-node1:/opt/etcd/cfg/

在node1上根据情况修改

[root@k8s-master1 ~]# scp /opt/etcd/cfg/etcd k8s-node2:/opt/etcd/cfg/

在node2上根据情况修改使用systemd管理etcd

[root@k8s-master1 ~]# vim /usr/lib/systemd/system/etcd.service

[root@k8s-master1 ~]# cat /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd

ExecStart=/opt/etcd/bin/etcd \

--name=${ETCD_NAME} \

--data-dir=${ETCD_DATA_DIR} \

--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

[root@k8s-master1 ~]# 将此文件scp到node上

[root@k8s-master1 ~]# scp /usr/lib/systemd/system/etcd.service k8s-node1:/usr/lib/systemd/system/

[root@k8s-master1 ~]# scp /usr/lib/systemd/system/etcd.service k8s-node2:/usr/lib/systemd/system/

此时先不要载入新建文件,需要将证书导入ssl目录下

[root@k8s-master1 ~]# ls /root/cert

ca-config.json ca-csr.json ca.pem server-csr.json server.pem

ca.csr ca-key.pem server.csr server-key.pem

[root@k8s-master1 ~]# ls /opt/etcd/ssl/

[root@k8s-master1 ~]# cd /root/cert/

[root@k8s-master1 cert]# cp *pem /opt/etcd/ssl/

[root@k8s-master1 cert]# scp *pem k8s-node1:/opt/etcd/ssl/

[root@k8s-master1 cert]# scp *pem k8s-node2:/opt/etcd/ssl/

以下操作在三个节点进行

# systemctl daemon-reload

# systemctl start etcd

执行这一步第一个节点会卡住是因为没有同步启动,导致没有连接到后续节点,不影响后续操作

# systemctl enable etcd检测etcd集群状态命令(记得修改ip)

[root@k8s-master1 cert]# /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.188.101:2379,https://192.168.188.102:2379,https://192.168.188.103:2379" cluster-health

member 3aba7a393ca71fa3 is healthy: got healthy result from https://192.168.188.101:2379

member 874e8d481cfbccd7 is healthy: got healthy result from https://192.168.188.103:2379

member ee161a05c1db844b is healthy: got healthy result from https://192.168.188.102:2379

cluster is healthy

[root@k8s-master1 cert]#如果输出上面信息,就说明集群部署成功。

如果有问题第一步先看日志:/var/log/messages 或 journalctl -u etcd

然后停止etcd数据库然后检查/opt/etcd/cfg/etcd,然后删除/var/lib/etcd/default.etcd所有数据,重新启动。

4.2、node节点部署docker

卸载原有docker(略)

部署方式参考上一篇文章

部署完成后暂时不要启动

4.3、部署Flannel网络插件

Flannel 需要通过 etcd 存储自身的子网信息,因此必须确保能成功连接 etcd 并写入预定义的子网段。Flannel 应该部署在node节点上,而不是在主节点上,因为它的作用是为所有容器提供通信功能。如果没有在主节点上部署应用,就不需要在主节点上部署 Flannel。

部署flannel需要先在master生成证书

[root@k8s-master1 ~]# cd cert/

[root@k8s-master1 cert]# /opt/etcd/bin/etcdctl \

> --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \

> --endpoints="https://192.168.188.101:2379,https://192.168.188.102:2379,https://192.168.188.103:2379" \

> set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

[root@k8s-master1 cert]# echo $?

0

将生成的证书copy到剩下的机器上

[root@k8s-master1 ~]# scp -r cert/ k8s-node1:/root/

[root@k8s-master1 ~]# scp -r cert/ k8s-node1:/root/安装Flannel

(两个node节点上完成)

# wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

这里使用云盘文件代替

# mkdir -p /opt/kubernetes/bin

# tar -zxvf flannel-v0.10.0-linux-amd64.tar.gz

flanneld

mk-docker-opts.sh

README.md

# mv flanneld mk-docker-opts.sh /opt/kubernetes/bin/

# mkdir /opt/kubernetes/cfg

# vim /opt/kubernetes/cfg/flanneld

# cat /opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.188.101:2379,https://192.168.188.102:2379,https://192.168.188.103:2379 -etcd-cafile=/opt/etcd/ssl/ca.pem -etcd-certfile=/opt/etcd/ssl/server.pem -etcd-keyfile=/opt/etcd/ssl/server-key.pem"

使用systemd管理flannel

(两个node节点上完成)

# vim /usr/lib/systemd/system/flanneld.service

# cat /usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target配置Docker启动指定子网段(通过引入flanner配置实现),这里可以将源文件直接覆盖掉

(两个node节点上完成)

# vim /usr/lib/systemd/system/docker.service

# cat /usr/lib/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

启动flannel和docker

(两个node节点上完成)

# systemctl daemon-reload

# systemctl start flanneld

# systemctl enable flanneld docker

Created symlink from /etc/systemd/system/multi-user.target.wants/flanneld.service to /usr/lib/systemd/system/flanneld.service.

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

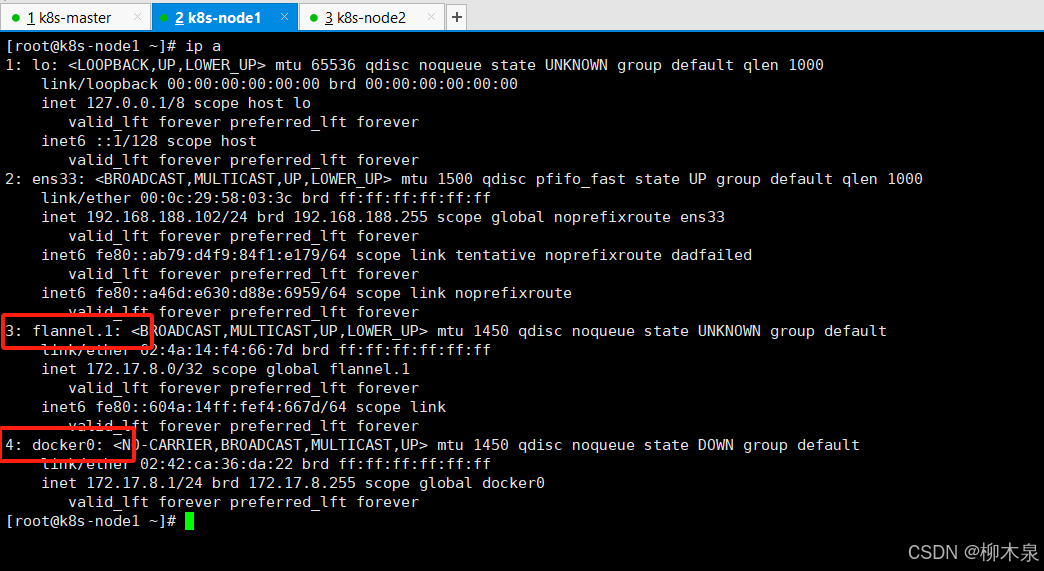

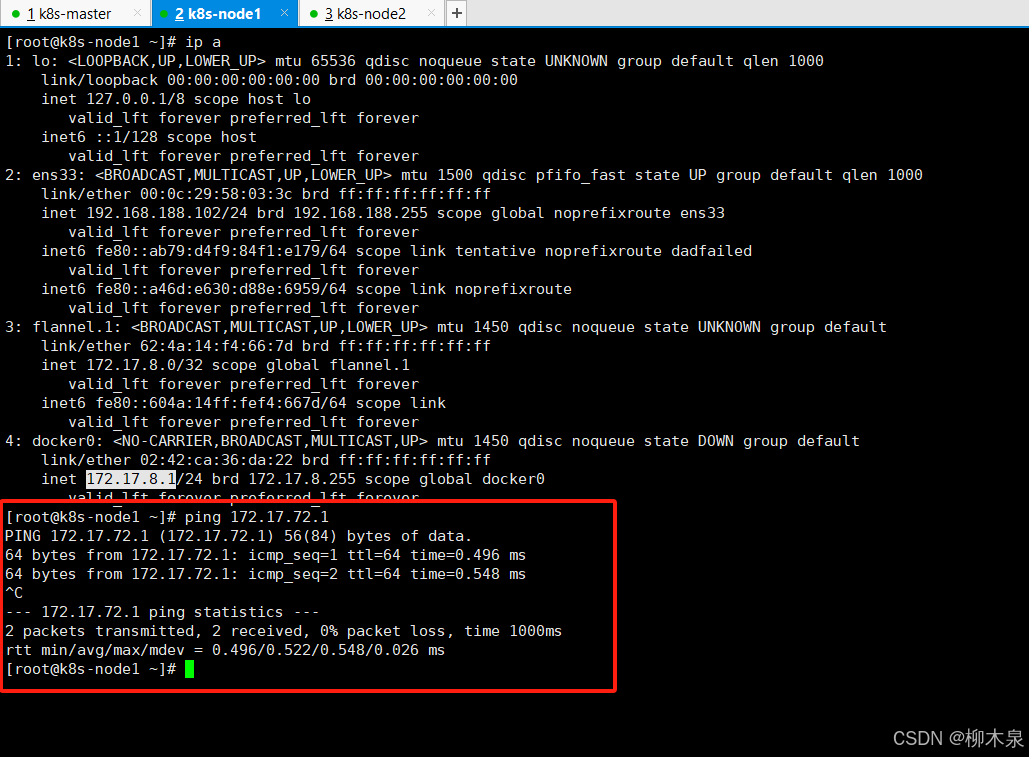

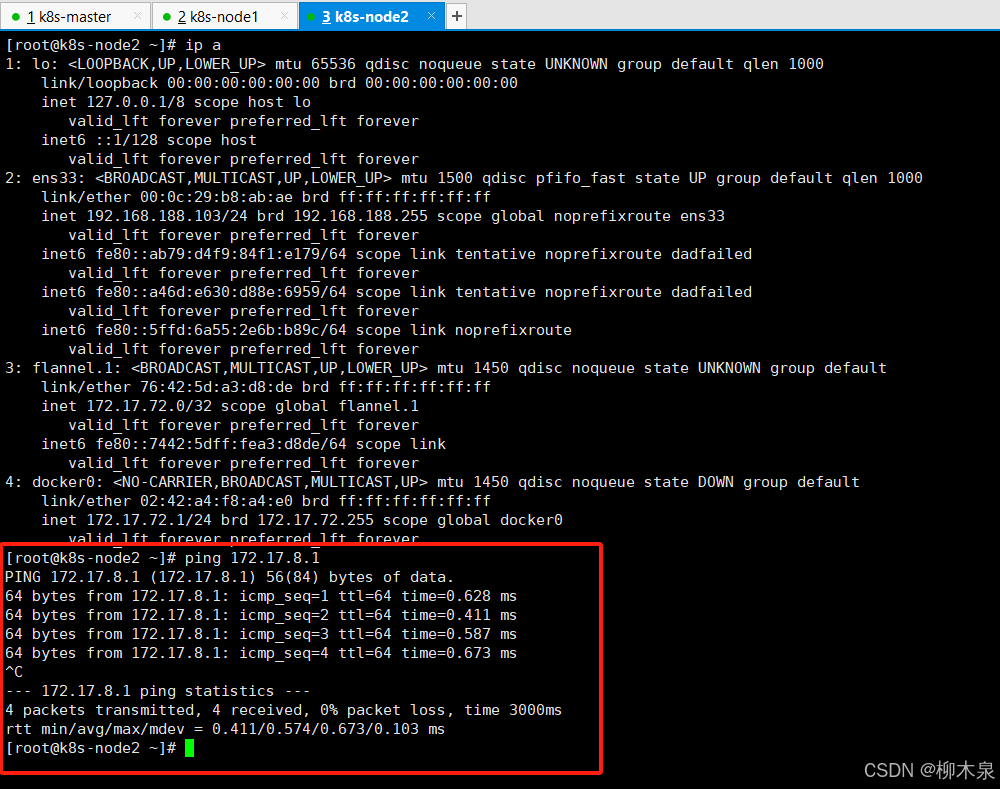

# systemctl start docker检查方法

ip a查看生成的网卡

node1与node2的docker互通测试

4.4、在Master节点部署组件

第一步查看etcd数据库和node上docker以及flanner是否正常,再进行后续操作

4.4.1、生成证书

创建ca证书

[root@k8s-master1 ~]# mkdir -p /opt/crt/

[root@k8s-master1 ~]# cd /opt/crt/

[root@k8s-master1 crt]# vim ca-config.json

[root@k8s-master1 crt]# cat ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

[root@k8s-master1 crt]# vim ca-csr.json

[root@k8s-master1 crt]# cat ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

[root@k8s-master1 crt]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2024/08/12 01:40:59 [INFO] generating a new CA key and certificate from CSR

2024/08/12 01:40:59 [INFO] generate received request

2024/08/12 01:40:59 [INFO] received CSR

2024/08/12 01:40:59 [INFO] generating key: rsa-2048

2024/08/12 01:40:59 [INFO] encoded CSR

2024/08/12 01:40:59 [INFO] signed certificate with serial number 371271588690637678245771582062446800447169685495

[root@k8s-master1 crt]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

生成api server证书(server*.pem给kubelet访问api用)

[root@k8s-master1 crt]# vim server-csr.json

[root@k8s-master1 crt]# cat server-csr.json

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.188.101",

"192.168.188.102",

"192.168.188.103",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

[root@k8s-master1 crt]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

2024/08/12 01:47:17 [INFO] generate received request

2024/08/12 01:47:17 [INFO] received CSR

2024/08/12 01:47:17 [INFO] generating key: rsa-2048

2024/08/12 01:47:17 [INFO] encoded CSR

2024/08/12 01:47:17 [INFO] signed certificate with serial number 560513267303898316157650121391556010442151460732

2024/08/12 01:47:17 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master1 crt]# echo $?

0

[root@k8s-master1 crt]# ls

ca-config.json ca-csr.json ca.pem server-csr.json server.pem

ca.csr ca-key.pem server.csr server-key.pem

生成kube-proxy证书

[root@k8s-master1 crt]# vim kube-proxy-csr.json

[root@k8s-master1 crt]# cat kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

[root@k8s-master1 crt]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

2024/08/12 01:52:18 [INFO] generate received request

2024/08/12 01:52:18 [INFO] received CSR

2024/08/12 01:52:18 [INFO] generating key: rsa-2048

2024/08/12 01:52:18 [INFO] encoded CSR

2024/08/12 01:52:18 [INFO] signed certificate with serial number 551191362201513999751984108198311988195553115643

2024/08/12 01:52:18 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master1 crt]# echo $?

0

最终结果

[root@k8s-master1 crt]# ls *pem

ca-key.pem ca.pem kube-proxy-key.pem kube-proxy.pem server-key.pem server.pem4.4.2、部署api server组件

[root@k8s-master1 ~]# wget https://dl.k8s.io/v1.11.10/kubernetes-server-linux-amd64.tar.gz

[root@k8s-master1 ~]# ls

anaconda-ks.cfg etcd-v3.2.12-linux-amd64 kubernetes-server-linux-amd64.tar.gz

cert etcd-v3.2.12-linux-amd64.tar.gz

[root@k8s-master1 ~]# mkdir -p /opt/kubernetes/{bin,cfg,ssl}

#接下来做的就是把这三个目录填满

[root@k8s-master1 ~]# tar -zxvf kubernetes-server-linux-amd64.tar.gz

[root@k8s-master1 ~]# cd kubernetes/server/bin

[root@k8s-master1 bin]# cp kube-apiserver kube-scheduler kube-controller-manager kubectl /opt/kubernetes/bin/

[root@k8s-master1 bin]# cd /opt/crt/

[root@k8s-master1 crt]# cp server.pem server-key.pem ca.pem ca-key.pem /opt/kubernetes/ssl/

[root@k8s-master1 crt]# cd /opt/kubernetes/cfg/

创建token文件

[root@k8s-master1 cfg]# vim token.csv

[root@k8s-master1 cfg]# cat token.csv

674c457d4dcf2eefe4920d7dbb6b0ddc,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

创建apiserver配置文件

[root@k8s-master1 cfg]# vim kube-apiserver

[root@k8s-master1 cfg]# cat kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.188.101:2379,https://192.168.188.102:2379,https://192.168.188.103:2379 \

--bind-address=192.168.188.101 \

--secure-port=6443 \

--advertise-address=192.168.188.101 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

[root@k8s-master1 cfg]#

api配置文件参数说明

参数说明:

* --logtostderr 启用日志

* --v 日志等级

* --etcd-servers etcd集群地址

* --bind-address 监听地址

* --secure-port https安全端口

* --advertise-address 集群通告地址

* --allow-privileged 启用授权

* --service-cluster-ip-range Service虚拟IP地址段

* --enable-admission-plugins 准入控制模块

* --authorization-mode 认证授权,启用RBAC授权和节点自管理

* --enable-bootstrap-token-auth 启用TLS bootstrap功能,后面会讲到

* --token-auth-file token文件

* --service-node-port-range Service Node类型默认分配端口范围使用systemd管理api server

[root@k8s-master1 cfg]# vim /usr/lib/systemd/system/kube-apiserver.service

[root@k8s-master1 cfg]# cat /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

启动api server

[root@k8s-master1 cfg]# systemctl daemon-reload

[root@k8s-master1 cfg]# systemctl enable kube-apiserver

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.

[root@k8s-master1 cfg]# systemctl start kube-apiserver

[root@k8s-master1 cfg]# systemctl status kube-apiserver4.4.3、部署schduler组件

创建schduler配置文件

[root@k8s-master1 cfg]# pwd

/opt/kubernetes/cfg

[root@k8s-master1 cfg]# vim kube-scheduler

[root@k8s-master1 cfg]# cat kube-scheduler

KUBE_SCHEDULER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect"

参数说明

参数说明:

* --master 连接本地apiserver

* --leader-elect 当该组件启动多个时,自动选举(HA)systemd管理schduler组件

[root@k8s-master1 cfg]# vim /usr/lib/systemd/system/kube-scheduler.service

[root@k8s-master1 cfg]# cat /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler

ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target启动scheduler

[root@k8s-master1 cfg]# systemctl daemon-reload

[root@k8s-master1 cfg]# systemctl enable kube-scheduler

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

[root@k8s-master1 cfg]# systemctl start kube-scheduler

[root@k8s-master1 cfg]# systemctl status kube-scheduler4.4.4、部署controller-manager组件

controller-manager组件属于rc

创建controller-manager配置文件

[root@k8s-master1 cfg]# pwd

/opt/kubernetes/cfg

[root@k8s-master1 cfg]# vim kube-controller-manager

[root@k8s-master1 cfg]# cat kube-controller-manager

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect=true \

--address=127.0.0.1 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem"systemd管理controller-manager

[root@k8s-master1 cfg]# vim /usr/lib/systemd/system/kube-controller-manager.service

[root@k8s-master1 cfg]# cat /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

启动

[root@k8s-master1 cfg]# systemctl daemon-reload

[root@k8s-master1 cfg]# systemctl enable kube-controller-manager

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.

[root@k8s-master1 cfg]# systemctl start kube-controller-manager

[root@k8s-master1 cfg]# systemctl status kube-controller-manager.service

4.4.5、master节点部署检查

root@k8s-master1 cfg]# /opt/kubernetes/bin/kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-1 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}

etcd-2 Healthy {"health": "true"}

[root@k8s-master1 cfg]#

4.5、在Node节点部署组件

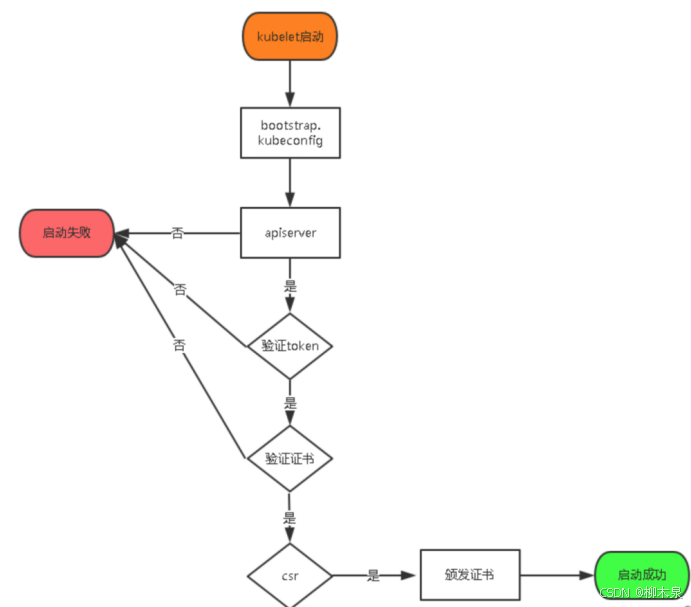

Kubernetes 中的 TLS Bootstrapping 机制。简而言之:

- TLS 认证:当 Kubernetes Master 的 API server 启用了 TLS 认证后,所有通信必须使用有效的证书进行加密。

- kubelet 证书需求:每个 Node 节点上的 kubelet 组件需要一个有效的证书才能与 API server 通信。

- 证书签署的繁琐:如果集群中的节点数量很多,手动签发证书会变得非常繁琐。

- TLS Bootstrapping 机制:为了简化证书管理,kubelet 以低权限用户身份自动向 API server 申请证书。API server 会动态签发 kubelet 需要的证书。

总的来说,TLS Bootstrapping 机制自动化了证书签发过程,简化了节点加入集群的操作。

可以参照下图进行理解

4.5.1、生成证书

先添加kubctl命令

[root@k8s-master1 ~]# ln -s /opt/kubernetes/bin/kubectl /usr/bin/kubectl将kubelet-bootstrap用户绑定到系统集群角色(获得集群角色权限)

[root@k8s-master1 ~]# ln -s /opt/kubernetes/bin/kubectl /usr/bin/kubectl

[root@k8s-master1 ~]# kubectl create clusterrolebinding kubelet-bootstrap \

> --clusterrole=system:node-bootstrapper \

> --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created创建kubeconfig文件(这里尽量每做一步检查一下)

指定api地址(有负载均衡写负载均衡的)和token

[root@k8s-master1 ~]# cd /opt/crt/

[root@k8s-master1 crt]# KUBE_APISERVER="https://192.168.188.101:6443"

[root@k8s-master1 crt]# cat /opt/kubernetes/cfg/token.csv

674c457d4dcf2eefe4920d7dbb6b0ddc,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

[root@k8s-master1 crt]# BOOTSTRAP_TOKEN=674c457d4dcf2eefe4920d7dbb6b0ddc

初步设置,指定证书文件,ca证书嵌入,指定api server,初步生成bootstrap.kubeconfi文件

[root@k8s-master1 crt]# kubectl config set-cluster kubernetes \

> --certificate-authority=ca.pem \

> --embed-certs=true \

> --server=${KUBE_APISERVER} \

> --kubeconfig=bootstrap.kubeconfig

Cluster "kubernetes" set.

[root@k8s-master1 crt]# echo $?

0

设置bootstrap.kubeconfi文件,添加/创建带有token的名为kubelet-bootstrap用户的凭据

[root@k8s-master1 crt]# kubectl config set-credentials kubelet-bootstrap \

> --token=${BOOTSTRAP_TOKEN} \

> --kubeconfig=bootstrap.kubeconfig

User "kubelet-bootstrap" set.

[root@k8s-master1 crt]# echo $?

0

设置上下文参数,指定关联的集群,关联的用户,将这些写入文件中

[root@k8s-master1 crt]# kubectl config set-context default \

> --cluster=kubernetes \

> --user=kubelet-bootstrap \

> --kubeconfig=bootstrap.kubeconfig

Context "default" created.

[root@k8s-master1 crt]# echo $?

0

将 kubectl 的当前上下文切换到名为 default 的上下文

[root@k8s-master1 crt]# kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

Switched to context "default".

[root@k8s-master1 crt]# echo $?

0

创建kube-proxy kubeconfig文件(流程同上)

[root@k8s-master1 crt]# kubectl config set-cluster kubernetes \

> --certificate-authority=ca.pem \

> --embed-certs=true \

> --server=${KUBE_APISERVER} \

> --kubeconfig=kube-proxy.kubeconfig

Cluster "kubernetes" set.

[root@k8s-master1 crt]# echo $?

0

[root@k8s-master1 crt]# kubectl config set-credentials kube-proxy \

> --client-certificate=kube-proxy.pem \

> --client-key=kube-proxy-key.pem \

> --embed-certs=true \

> --kubeconfig=kube-proxy.kubeconfig

User "kube-proxy" set.

[root@k8s-master1 crt]# echo $?

0

[root@k8s-master1 crt]# kubectl config set-context default \

> --cluster=kubernetes \

> --user=kube-proxy \

> --kubeconfig=kube-proxy.kubeconfig

Context "default" created.

[root@k8s-master1 crt]# echo $?

0

[root@k8s-master1 crt]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Switched to context "default".

[root@k8s-master1 crt]# echo $?

0

查明文件并拷贝

[root@k8s-master1 crt]# ls *.kubeconfig

bootstrap.kubeconfig kube-proxy.kubeconfig

[root@k8s-master1 crt]# scp *.kubeconfig k8s-node1:/opt/kubernetes/cfg/

[root@k8s-master1 crt]# scp *.kubeconfig k8s-node2:/opt/kubernetes/cfg/

4.5.2、组件拷贝

[root@k8s-master1 ~]# ls

anaconda-ks.cfg etcd-v3.2.12-linux-amd64 kubernetes

cert etcd-v3.2.12-linux-amd64.tar.gz kubernetes-server-linux-amd64.tar.gz

[root@k8s-master1 ~]# cd /root/kubernetes/server/bin

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-node1:/opt/kubernetes/bin/

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-node2:/opt/kubernetes/bin/

4.5.3、在node节点创建kubelet配置文件

两个node节点操作

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kubelet

[root@k8s-node1 ~]# cat /opt/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.188.102 \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet.config \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

[root@k8s-node1 ~]# docker pull registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

node2同上,记得改ip参数说明

参数说明:

* --hostname-override 在集群中显示的主机名

* --kubeconfig 指定kubeconfig文件位置,会自动生成

* --bootstrap-kubeconfig 指定刚才生成的bootstrap.kubeconfig文件

* --cert-dir 颁发证书存放位置

* --pod-infra-container-image 管理Pod网络的镜像其中/opt/kubernetes/cfg/kubelet.config配置文件也需要准备

两个节点操作

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kubelet.config

[root@k8s-node1 ~]# cat /opt/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.188.102

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: true

webhook:

enabled: false

添加证书路径

两个节点操作

[root@k8s-node1 ~]# mkdir -p /opt/kubernetes/ssl

然后去master

[root@k8s-master1 crt]# pwd

/opt/crt

[root@k8s-master1 crt]# scp *pem k8s-node1:/opt/kubernetes/ssl/

[root@k8s-master1 crt]# scp *pem k8s-node2:/opt/kubernetes/ssl/systemd管理kubelet组件

两个node都要写

[root@k8s-node1 ~]# vim /usr/lib/systemd/system/kubelet.service

[root@k8s-node1 ~]# cat /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet $KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target启动kubelet

两个node节点都做

[root@k8s-node1 ~]# systemctl daemon-reload

[root@k8s-node1 ~]# systemctl enable kubelet

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

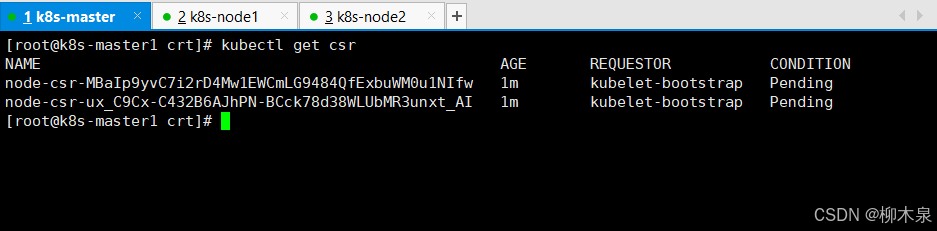

[root@k8s-node1 ~]# systemctl start kubelet回到master节点,查看 Kubernetes 集群中证书签名请求

[root@k8s-master1 crt]# kubectl get csr

可以看到两个请求处于pending(在等待)

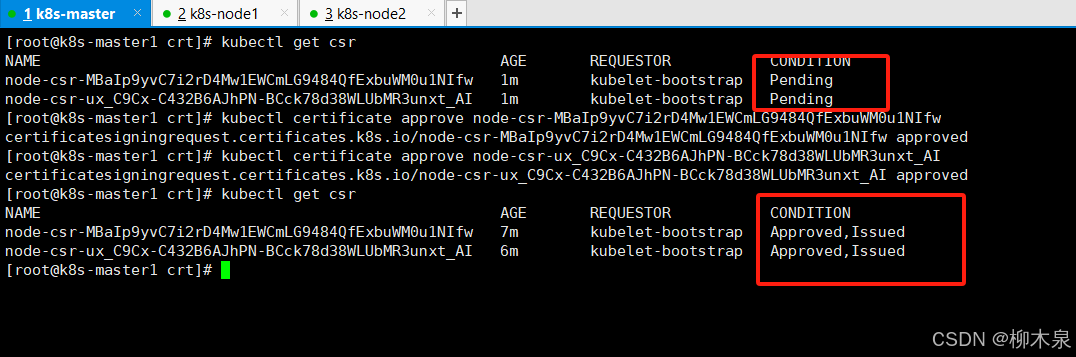

在Master审批Node加入集群

[root@k8s-master1 crt]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-MBaIp9yvC7i2rD4Mw1EWCmLG9484QfExbuWM0u1NIfw 1m kubelet-bootstrap Pending

node-csr-ux_C9Cx-C432B6AJhPN-BCck78d38WLUbMR3unxt_AI 1m kubelet-bootstrap Pending

[root@k8s-master1 crt]# kubectl certificate approve node-csr-MBaIp9yvC7i2rD4Mw1EWCmLG9484QfExbuWM0u1NIfw

certificatesigningrequest.certificates.k8s.io/node-csr-MBaIp9yvC7i2rD4Mw1EWCmLG9484QfExbuWM0u1NIfw approved

[root@k8s-master1 crt]# kubectl certificate approve node-csr-ux_C9Cx-C432B6AJhPN-BCck78d38WLUbMR3unxt_AI

certificatesigningrequest.certificates.k8s.io/node-csr-ux_C9Cx-C432B6AJhPN-BCck78d38WLUbMR3unxt_AI approved

[root@k8s-master1 crt]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-MBaIp9yvC7i2rD4Mw1EWCmLG9484QfExbuWM0u1NIfw 7m kubelet-bootstrap Approved,Issued

node-csr-ux_C9Cx-C432B6AJhPN-BCck78d38WLUbMR3unxt_AI 6m kubelet-bootstrap Approved,Issued

[root@k8s-master1 crt]#

查看node状态

[root@k8s-master1 crt]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.188.102 Ready <none> 2m v1.11.10

192.168.188.103 Ready <none> 1m v1.11.10

[root@k8s-master1 crt]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

192.168.188.102 Ready <none> 3m v1.11.10

192.168.188.103 Ready <none> 2m v1.11.10

[root@k8s-master1 crt]# kubectl get no

NAME STATUS ROLES AGE VERSION

192.168.188.102 Ready <none> 3m v1.11.10

192.168.188.103 Ready <none> 2m v1.11.10

4.5.4、部署kube-proxy组件

创建kube-proxy配置文件

两个node节点都做

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kube-proxy

[root@k8s-node1 ~]# cat /opt/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.188.102 \

--cluster-cidr=10.0.0.0/24 \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

systemd管理kube-proxy组件

两个node节点都做

[root@k8s-node1 ~]# vim /usr/lib/systemd/system/kube-proxy.service

[root@k8s-node1 ~]# cat /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target启动kube-proxy

[root@k8s-node1 ~]# systemctl daemon-reload

[root@k8s-node1 ~]# systemctl enable kube-proxy

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

[root@k8s-node1 ~]# systemctl start kube-proxy4.6、部署完成检查

[root@k8s-master1 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.188.102 Ready <none> 17m v1.11.10

192.168.188.103 Ready <none> 16m v1.11.10

[root@k8s-master1 ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"} 5、kubernetes部署(kubeadm)

与二进制部署不同,kubeadm 部署的所有组件均以容器方式运行。kubeadm 自动创建容器和证书十分便捷,但企业中通常使用二进制部署,以便于日志排错处理。

kubeadm 部署 Kubernetes 的最低配置要求为 2 核 CPU 和 2 GB 内存。当前 Kubernetes 官网最新版本是 1.30,但本演示使用的是企业中较常用的 1.19.1 版本。组件镜像和 kubeadm 版本需与 Kubernetes 版本保持一致,同时也要注意docker的版本。

通常,我们从阿里云下载镜像并重新打标签,因为 kubeadm 初始化 Kubernetes 集群时只识别默认的官网镜像名称。尽管初始化时会自动拉取镜像,我们可以选择不拉取,但默认从官网拉取常常会失败。遇到镜像拉取失败时,可以查看错误信息中的镜像全称。下文将展示完整的操作步骤。

实验环境:

3台,centos7,开启ssh登录,关闭防火墙,关闭selinux、设置静态ip、时间同步

提前配置主机名,互相配置域名解析

5.1、安装docker

三台全部操作

#yum install -y yum-utils device-mapper-persistent-data lvm2

#yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

#sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

#yum makecache fast

#yum install -y docker-ce-19.03.2 docker-ce-cli-19.03.2 containerd.io

docker版本可以看一下网上对应表选择一个相近的版本

# docker -v

Docker version 19.03.2, build 6a30dfc

# systemctl start docker

# systemctl enable docker

docker加速及驱动配置

[root@k8s-master1 ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": [

"https://hub-mirror.c.163.com",

"https://docker.m.daocloud.io",

"https://ghcr.io",

"https://mirror.baidubce.com",

"https://docker.nju.edu.cn"

]

}

》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》》

1.22之后版本如下

[root@k8s-master1 ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": [

"https://hub-mirror.c.163.com",

"https://docker.m.daocloud.io",

"https://ghcr.io",

"https://mirror.baidubce.com",

"https://docker.nju.edu.cn"

],

"exec-opts": ["native.cgroupdriver=systemd"]

}1.22之后不改driver安装k8s会报错。

重启docker,注意此处docker必须设置开机自启

[root@k8s-master1 ~]# systemctl daemon-reload ;systemctl restart docker

5.2、关闭swap

三台都需要操作

# swapoff -a

# vim /etc/fstab

把这行注释掉/dev/mapper/centos-swap swap swap defaults 0 0

# free -m

total used free shared buff/cache available

Mem: 2827 248 1742 9 836 2414

Swap: 0 0 0

5.3、使用kubeadm部署Kubernetes

三台主机操作

[root@k8s-master1 ~]# vim /etc/yum.repos.d/kubernetes.repo

[root@k8s-master1 ~]# cat /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

这个位置因为官网同步原因可能会造成检查错误,所以直接关掉即可这里进行一步错误操作演示如何卸载错误版本

[root@k8s-master1 ~]# yum install -y kubelet kubeadm kubectl

[root@k8s-master1 ~]# yum remove -y `rpm -qa | grep -E "kubeadm|kubectl|kubelet"`正确的下载方式

三台主机操作

# yum install -y kubelet-1.19.1-0.x86_64 kubeadm-1.19.1-0.x86_64 kubectl-1.19.1-0.x86_64 ipvsadm

ipvsadm

用于设置pod转发规则加载ipvs相关的内核模块

三台主机操作

# modprobe ip_vs && modprobe ip_vs_rr && modprobe ip_vs_wrr && modprobe ip_vs_sh && modprobe nf_conntrack_ipv4 && modprobe br_netfilter

# lsmod | grep ip_vs

编辑文件添加开机自启

# echo "modprobe ip_vs && modprobe ip_vs_rr && modprobe ip_vs_wrr && modprobe ip_vs_sh && modprobe nf_conntrack_ipv4 && modprobe br_netfilter" >> /etc/rc.local

# chmod +x /etc/rc.local配置转发相关参数(这里只是为了防止报错,基本用不上)

三台主机

# cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

vm.swappiness=0

EOF

使配置生效

三台主机

# sysctl -p /etc/sysctl.d/k8s.conf

# sysctl --system配置启动kubelet(所有节点)

三个主机

1.配置kubelet建立pod使用的pause镜像

查看docker的驱动

# docker info|grep "Cgroup Driver"

Cgroup Driver: cgroupfs

# DOCKER_CGROUPS=cgroupfs

# echo $DOCKER_CGROUPS

cgroupfs

2.配置kubelet的cgroups

这里使用官网的镜像,pause版本不清楚,随便写一个一会看报错信息修改

# cat >/etc/sysconfig/kubelet<<EOF

> KUBELET_EXTRA_ARGS="--cgroup-driver=cgroupfs --pod-infra-container-image=k8s.gcr.io/pause:3.2"

> EOF

# cat /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--cgroup-driver=cgroupfs --pod-infra-container-image=k8s.gcr.io/pause:3.2"阿里源pause

cat >/etc/sysconfig/kubelet<<EOF

KUBELET_EXTRA_ARGS="--cgroup-driver=$DOCKER_CGROUPS --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2"

EOF三个主机

# systemctl daemon-reload

# systemctl enable kubelet && systemctl restart kubelet

此时会写入自启动成功,但是启动失败,因为api server还未初始化

Master节点初始化

master操作

[root@k8s-master1 ~]# kubeadm init --kubernetes-version=v1.19.1 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.188.101 --ignore-preflight-errors=Swap

--apiserver-advertise-address=192.168.188.101 ---master的ip地址

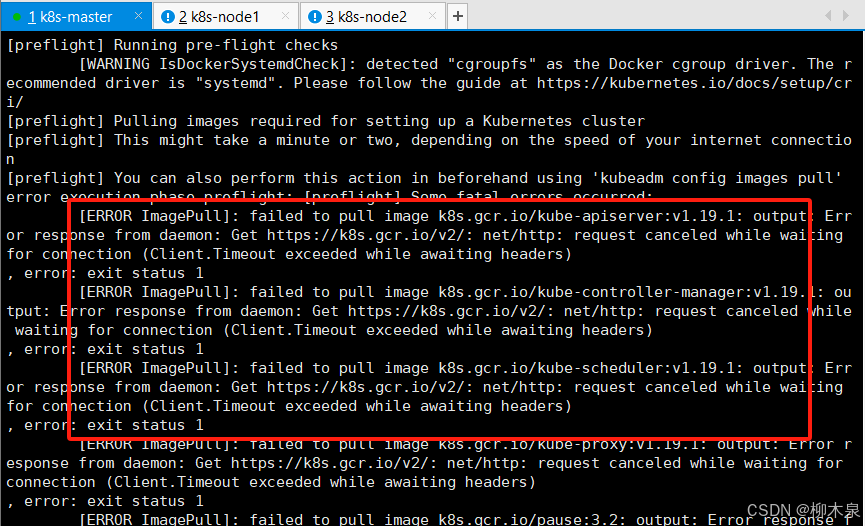

等待镜像拉取报错,时间很长这里可以通过以下参数指定镜像拉取地址

--image-repository=registry.aliyuncs.com/google_containers

这种方法有概率失败,建议手动拉报错信息如下,根据信息查看需要的镜像版本

重置初始化环境

[root@k8s-master1 ~]# kubeadm reset根据所需镜像,编写手动拉取脚本

编写所需镜像列表

[root@k8s-master1 ~]# vim images_need.sh

[root@k8s-master1 ~]# cat images_need.sh

k8s.gcr.io/kube-apiserver:v1.19.1

k8s.gcr.io/kube-controller-manager:v1.19.1

k8s.gcr.io/kube-scheduler:v1.19.1

k8s.gcr.io/kube-proxy:v1.19.1

k8s.gcr.io/pause:3.2

k8s.gcr.io/etcd:3.4.13-0

k8s.gcr.io/coredns:1.7.0

根据上表将地址改为阿里云拉取

[root@k8s-master1 ~]# vim images_pull.sh

[root@k8s-master1 ~]# cat images_pull.sh

#!/bin/bash

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.19.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.19.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.19.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.19.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.13-0

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2

将上面拉取的镜像通过tag改为所需镜像

[root@k8s-master1 ~]# vim images_tag.sh

[root@k8s-master1 ~]# cat images_tag.sh

#!/bin/bash

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.19.1 k8s.gcr.io/kube-controller-manager:v1.19.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.19.1 k8s.gcr.io/kube-proxy:v1.19.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.19.1 k8s.gcr.io/kube-apiserver:v1.19.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.19.1 k8s.gcr.io/kube-scheduler:v1.19.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0 k8s.gcr.io/coredns:1.7.0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.13-0 k8s.gcr.io/etcd:3.4.13-0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2 k8s.gcr.io/pause:3.2将脚本拷贝到每个节点

[root@k8s-master1 ~]# scp *.sh k8s-node1:/root/

[root@k8s-master1 ~]# scp *.sh k8s-node2:/root/

node加入集群也可以自动获取开始获取镜像

三台主机

[root@k8s-master1 ~]# bash images_pull.sh

[root@k8s-master1 ~]# bash images_tag.sh

[root@k8s-master1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-proxy v1.19.1 33c60812eab8 3 years ago 118MB

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy v1.19.1 33c60812eab8 3 years ago 118MB

k8s.gcr.io/kube-apiserver v1.19.1 ce0df89806bb 3 years ago 119MB

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver v1.19.1 ce0df89806bb 3 years ago 119MB

k8s.gcr.io/kube-controller-manager v1.19.1 538929063f23 3 years ago 111MB

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager v1.19.1 538929063f23 3 years ago 111MB

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler v1.19.1 49eb8a235d05 3 years ago 45.7MB

k8s.gcr.io/kube-scheduler v1.19.1 49eb8a235d05 3 years ago 45.7MB

registry.cn-hangzhou.aliyuncs.com/google_containers/etcd 3.4.13-0 0369cf4303ff 3 years ago 253MB

k8s.gcr.io/etcd 3.4.13-0 0369cf4303ff 3 years ago 253MB

k8s.gcr.io/coredns 1.7.0 bfe3a36ebd25 4 years ago 45.2MB

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns 1.7.0 bfe3a36ebd25 4 years ago 45.2MB

k8s.gcr.io/pause 3.2 80d28bedfe5d 4 years ago 683kB

registry.cn-hangzhou.aliyuncs.com/google_containers/pause 3.2 80d28bedfe5d 4 years ago 683kB

镜像获取后再次进行初始化

master上操作

[root@k8s-master1 ~]# kubeadm init --kubernetes-version=v1.19.1 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.188.101 --ignore-preflight-errors=Swap

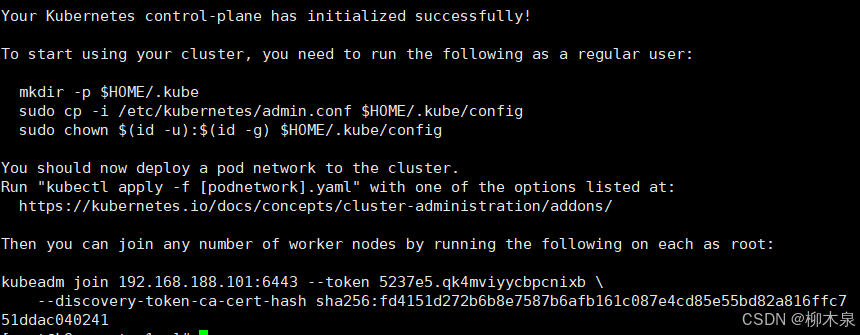

初始化成功后根据提示信息操作

这里运行成功后会产生一条命令,记录下来找个地方保存好一会要用

kubeadm join 192.168.188.101:6443 --token 5237e5.qk4mviyycbpcnixb \

--discovery-token-ca-cert-hash sha256:fd4151d272b6b8e7587b6afb161c087e4cd85e55bd82a816ffc751ddac040241

token默认只有24个小时,如果过期了或者忘了,可以重新获取加入集群的命令

kubeadm token create --print-join-command

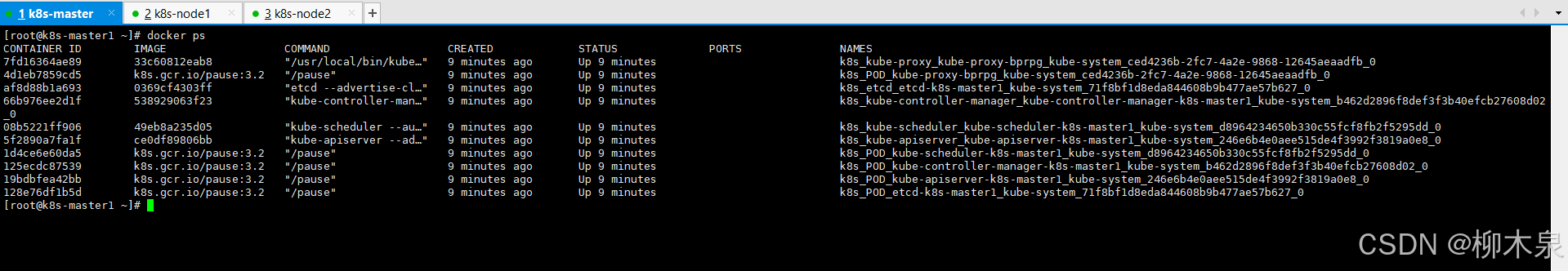

此时docker ps查看master上容器,可以看到组件以容器形式运行

根据初始化产生的信息操作

master上操作

[root@k8s-master1 ~]# mkdir -p $HOME/.kube

[root@k8s-master1 ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master1 ~]# chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady master 18m v1.19.1

此时master节点状态为未准备,原因是网络插件尚未启动

[root@k8s-master1 ~]# kubectl get pod

No resources found in default namespace.

在默认的命名空间下没有pod

这里指定命名空间可以看到两个Pending的dns-pod,同时此时没有flannel pod

[root@k8s-master1 ~]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-f9fd979d6-2xktj 0/1 Pending 0 22m

coredns-f9fd979d6-x7bkv 0/1 Pending 0 22m

etcd-k8s-master1 1/1 Running 0 22m

kube-apiserver-k8s-master1 1/1 Running 0 22m

kube-controller-manager-k8s-master1 1/1 Running 0 22m

kube-proxy-bprpg 1/1 Running 0 22m

kube-scheduler-k8s-master1 1/1 Running 0 22m

配置使用网络插件

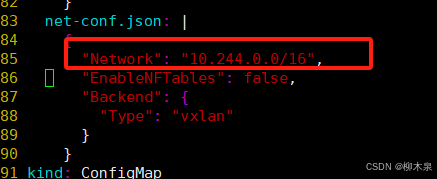

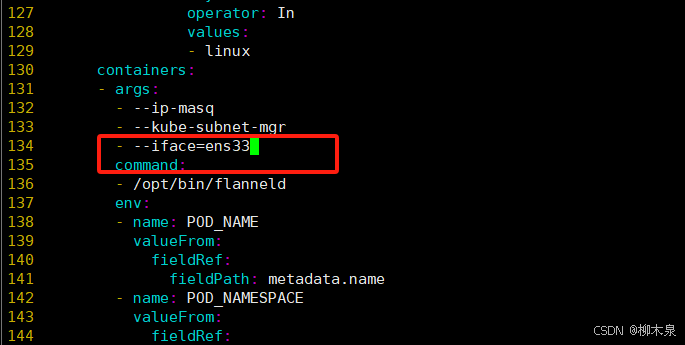

通过编写yaml文件创建flannel pod(这个文件放在文章开始的百度云链接里面了,建议保存一份,没有科学上网环境下载很不方便,有科学上网环境建议选择HK节点)

curl -O https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml使用vim冒号命令进行替换

:% s/namespace: kube-flannel/namespace: kube-system/g

pod尽量创建到同一个命名空间保证网段和初始化时候一样

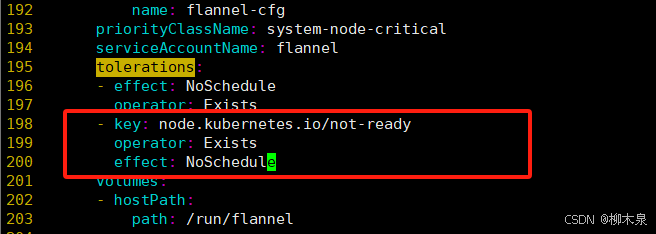

添加宿主机网卡

添加如下内容,忽略notready状态仍然部署pod

- key: node.kubernetes.io/not-ready

operator: Exists

effect: NoSchedule

保存退出后查看yaml文件所需镜像提前下载

三台执行

[root@k8s-master1 ~]# grep image kube-flannel.yml

image: docker.io/flannel/flannel-cni-plugin:v1.5.1-flannel1

image: docker.io/flannel/flannel:v0.25.5

image: docker.io/flannel/flannel:v0.25.5

[root@k8s-master1 ~]# docker pull docker.io/flannel/flannel:v0.25.5

[root@k8s-master1 ~]# docker pull docker.io/flannel/flannel-cni-plugin:v1.5.1-flannel1

这个镜像拉取时候大概率报错,而且多重复几次你会发现报错都不一样

解决方法:

[root@k8s-master1 ~]# docker pull registry.cn-chengdu.aliyuncs.com/k8s_module_images/flannel-cni-plugin:v1.5.1-flannel1

[root@k8s-master1 ~]# docker pull registry.cn-chengdu.aliyuncs.com/k8s_module_images/flannel:v0.25.5

[root@k8s-master1 ~]# docker tag registry.cn-chengdu.aliyuncs.com/k8s_module_images/flannel:v0.25.5 docker.io/flannel/flannel:v0.25.5

[root@k8s-master1 ~]# docker tag registry.cn-chengdu.aliyuncs.com/k8s_module_images/flannel-cni-plugin:v1.5.1-flannel1 docker.io/flannel/flannel-cni-plugin:v1.5.1-flannel1这里读取配置文件

[root@k8s-master1 ~]# mv kube-flannel.yml kube-flannel.yaml

[root@k8s-master1 ~]# kubectl apply -f kube-flannel.yaml

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

再次查看pod,flannel pod应该有三个,但是现在node还没有加入,这里只有一个

[root@k8s-master1 ~]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-f9fd979d6-f8rxv 1/1 Running 0 6m25s

coredns-f9fd979d6-frrvc 1/1 Running 0 6m25s

etcd-k8s-master1 1/1 Running 0 6m42s

kube-apiserver-k8s-master1 1/1 Running 0 6m41s

kube-controller-manager-k8s-master1 1/1 Running 0 6m42s

kube-flannel-ds-c576q 1/1 Running 0 2m20s

kube-proxy-96cnh 1/1 Running 0 6m25s

kube-scheduler-k8s-master1 1/1 Running 0 6m42s

[root@k8s-master1 ~]# kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-f9fd979d6-f8rxv 1/1 Running 0 10m 10.244.0.2 k8s-master1 <none> <none>

coredns-f9fd979d6-frrvc 1/1 Running 0 10m 10.244.0.3 k8s-master1 <none> <none>

etcd-k8s-master1 1/1 Running 0 10m 192.168.188.101 k8s-master1 <none> <none>

kube-apiserver-k8s-master1 1/1 Running 0 10m 192.168.188.101 k8s-master1 <none> <none>

kube-controller-manager-k8s-master1 1/1 Running 0 10m 192.168.188.101 k8s-master1 <none> <none>

kube-flannel-ds-c576q 1/1 Running 0 6m28s 192.168.188.101 k8s-master1 <none> <none>

kube-proxy-96cnh 1/1 Running 0 10m 192.168.188.101 k8s-master1 <none> <none>

kube-scheduler-k8s-master1 1/1 Running 0 10m 192.168.188.101 k8s-master1 <none> <none>

将前面初始化生成的命令放在node上执行,然后回到master节点检查

[root@k8s-master1 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 18m v1.19.1

k8s-node1 Ready <none> 3m v1.19.1

k8s-node2 Ready <none> 61s v1.19.15.4、常用命令

[root@k8s-master1 ~]# kubectl describe node k8s-node1

查看详细信息

[root@k8s-master1 ~]# systemctl status kubelet -l

查看报错

[root@k8s-master1 ~]# kubeadm token create

创建新token

[root@k8s-master1 ~]# kubeadm token list

查看token列表

[root@k8s-master1 ~]# openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

获取哈希值

可用来后期新增node节点

[root@k8s-master1 ~]# kubectl drain kub-k8s-node1 --delete-local-data --force --ignore-daemonsets

驱离k8s-node-1节点上的pod

[root@k8s-master1 ~]# kubectl delete node k8s-node1

删除节点

[root@k8s-node1 ~]# kubeadm reset

重置node1节点

更多推荐

已为社区贡献2条内容

已为社区贡献2条内容

所有评论(0)