Kubernetes 1.20 on CentOS 7, single node: installation notes

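I have been studying k8s lately. Until a real environment is available, I set up a single-node k8s on my local machine to experiment with and wrote down the installation steps. This is not meant to be a comprehensive tutorial, only a record of what actually worked: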

1. Environment

First, the environment: a CentOS system running as a VirtualBox virtual machine:

- CPU: 1 core

- Memory: 4 GB

- OS: CentOS 7.9.2009, 64-bit

- docker: Docker version 19.03.9

The software versions to be installed:

- k8s: 1.20.4

- etcd: 3.4.13

- dashboard: 2.4

2. Preparation

- # Log in as root

- # Stop the firewall

- systemctl stop firewalld

- # Put SELinux into permissive mode

- setenforce 0

- # Turn off swap

- swapoff -a

- # Pass bridged IPv4 traffic to the iptables chains

- cat > /etc/sysctl.d/k8s.conf <<EOF

- net.bridge.bridge-nf-call-ip6tables = 1

- net.bridge.bridge-nf-call-iptables = 1

- EOF

- sysctl --system # apply the settings

- # Configure the k8s yum repository

- cat >/etc/yum.repos.d/kubernetes.repo << EOF

- [kubernetes]

- name=Kubernetes

- baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

- enabled=1

- gpgcheck=0

- repo_gpgcheck=0

- gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

- EOF
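The firewall, SELinux, and swap commands above only last until the next reboot. The following is a minimal sketch of how the SELinux and swap changes could be made persistent, assuming the standard CentOS 7 locations /etc/selinux/config and /etc/fstab. The function names are hypothetical, and each function edits whatever file path it is given, so it can be tried on a copy first.

```shell
#!/bin/bash
# Hypothetical helpers (not part of the original notes).
# Each one edits the file passed as its first argument.

# Switch SELINUX=enforcing to SELINUX=permissive in an SELinux config file.
selinux_permissive() {
  local file="$1"
  local tmp
  tmp="$(mktemp)"
  sed 's/^SELINUX=enforcing$/SELINUX=permissive/' "$file" > "$tmp" \
    && cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Comment out every active swap entry in an fstab-style file.
disable_swap_entries() {
  local file="$1"
  local tmp
  tmp="$(mktemp)"
  sed '/^[^#].*[[:space:]]swap[[:space:]]/s/^/#/' "$file" > "$tmp" \
    && cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Possible usage on the real files (as root):
#   selinux_permissive /etc/selinux/config
#   disable_swap_entries /etc/fstab
#   systemctl disable firewalld
```

Writing through a temporary file and then back with cat keeps the original file's ownership and permissions intact.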

3. Installing kubeadm, kubelet, and kubectl

- # Install kubeadm (initializes the cluster), kubelet (starts pods), and kubectl (the k8s command-line tool)

- yum install -y kubelet-1.20.4

- yum install -y kubeadm-1.20.4

- yum install -y kubectl-1.20.4

- # Enable kubelet at boot and start it

- systemctl enable kubelet

- systemctl start kubelet

4. Deploying Kubernetes

Create a script, k8s.sh, that pulls the required images from a mirror and retags them:

- #!/bin/bash

- images=(

- kube-apiserver:v1.20.4

- kube-controller-manager:v1.20.4

- kube-scheduler:v1.20.4

- kube-proxy:v1.20.4

- pause:3.2

- etcd:3.4.13-0

- coredns:1.7.0

- )

- for imageName in "${images[@]}" ; do

- docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/${imageName}

- docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/${imageName} k8s.gcr.io/${imageName}

- docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/${imageName}

- done

Make the script executable (chmod +x k8s.sh), run it with ./k8s.sh, then use docker images to confirm that the images were downloaded.
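Before pulling anything, it can help to see exactly which docker commands the script will run. The sketch below is a dry-run variant that assumes the same mirror and target registry as k8s.sh; plan_retag is a hypothetical name, and the function only prints commands, it does not execute them.

```shell
#!/bin/bash
# Same registries as in k8s.sh.
MIRROR="registry.cn-hangzhou.aliyuncs.com/google_containers"
TARGET="k8s.gcr.io"

# Print (do not run) the pull/tag/rmi commands for each image name given.
# plan_retag is a hypothetical helper, not part of the original script.
plan_retag() {
  local image
  for image in "$@"; do
    echo "docker pull ${MIRROR}/${image}"
    echo "docker tag ${MIRROR}/${image} ${TARGET}/${image}"
    echo "docker rmi ${MIRROR}/${image}"
  done
}

# Possible usage:
#   plan_retag pause:3.2 etcd:3.4.13-0        # review the plan
#   plan_retag pause:3.2 etcd:3.4.13-0 | sh   # then execute it
```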

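Run the command that initializes the master (the NumCPU preflight check is ignored because the VM has only 1 core, below kubeadm's minimum of 2):

- # Initialize the master

- kubeadm init --kubernetes-version=v1.20.4 --ignore-preflight-errors=NumCPU --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16 --v=6

Since I am root, I followed the hint printed on success and ran: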

- export KUBECONFIG=/etc/kubernetes/admin.conf

For a non-root user, run this instead:

- mkdir -p $HOME/.kube

- sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

- sudo chown $(id -u):$(id -g) $HOME/.kube/config

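Checking the component status shows that the scheduler and controller-manager are not healthy:

- kubectl get cs

The fix is to comment out the "- --port=0" line in kube-controller-manager.yaml and kube-scheduler.yaml under /etc/kubernetes/manifests, then restart the kubelet:

- # Restart the service after commenting out the lines

- systemctl restart kubelet.service

- # Check the component status again

- kubectl get cs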
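The manual edit of the two manifests can also be scripted. This is a minimal sketch, assuming the argument appears on its own line as "- --port=0" in the kubeadm-generated manifests; comment_out_port_zero is a hypothetical helper name.

```shell
#!/bin/bash
# Comment out the "- --port=0" argument in one static pod manifest.
# The temp file is created outside the manifests directory, so no extra
# file is left next to the manifests. Running it twice is harmless.
comment_out_port_zero() {
  local manifest="$1"
  local tmp
  tmp="$(mktemp)"
  sed 's/^\([[:space:]]*\)- --port=0[[:space:]]*$/\1# - --port=0/' "$manifest" > "$tmp" \
    && cat "$tmp" > "$manifest"
  rm -f "$tmp"
}

# Possible usage (as root), followed by the kubelet restart:
#   comment_out_port_zero /etc/kubernetes/manifests/kube-controller-manager.yaml
#   comment_out_port_zero /etc/kubernetes/manifests/kube-scheduler.yaml
#   systemctl restart kubelet.service
```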

Next, fix the node's NotReady status. The cause is that no CNI network plugin has been configured yet; flannel is used here.

- curl -L https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml -o kube-flannel.yml

- chmod 777 kube-flannel.yml

- kubectl apply -f kube-flannel.yml

Wait a few minutes, then check the node status again with kubectl get nodes. Success!
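Instead of waiting a fixed few minutes, the node status can be polled until it stops reporting NotReady. The helper names retry_until and all_nodes_ready below are hypothetical, and the attempt count and delay are arbitrary choices; treat this as a sketch rather than a tested part of the original setup.

```shell
#!/bin/bash
# Retry a command until it succeeds or the attempts run out.
# usage: retry_until <attempts> <delay-seconds> <command> [args...]
retry_until() {
  local attempts="$1"
  local delay="$2"
  shift 2
  local i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# Succeeds when kubectl lists at least one node and none is NotReady.
all_nodes_ready() {
  local out
  out="$(kubectl get nodes --no-headers)" || return 1
  [ -n "$out" ] && ! printf '%s\n' "$out" | grep -q 'NotReady'
}

# Possible usage: poll every 10 seconds, for up to 5 minutes.
#   retry_until 30 10 all_nodes_ready && echo "node is Ready"
```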

Before every re-run of kubeadm init, run kubeadm reset first.

5. Deploying the Dashboard

Install:

- kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.4.0/aio/deploy/recommended.yaml

Start the proxy and keep it running in the background:

- kubectl proxy --address='0.0.0.0' --accept-hosts='^*$' &

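Bind the dashboard service account to cluster-admin and look up its token (the secret's random suffix, knbht here, will differ on your cluster):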

- kubectl create clusterrolebinding dashboard-cluster-admin --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:kubernetes-dashboard

- kubectl get secret -n kubernetes-dashboard

- kubectl describe secret kubernetes-dashboard-token-knbht -n kubernetes-dashboard
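Because the secret name ends in a random suffix, the lookup can be scripted rather than copied by hand. This sketch assumes a cluster older than 1.24, such as the 1.20.4 used here, where a token secret is created automatically for each service account; sa_token is a hypothetical helper name.

```shell
#!/bin/bash
# Print the decoded token of a service account.
# usage: sa_token <namespace> <service-account>
sa_token() {
  local ns="$1"
  local sa="$2"
  local secret
  # Name of the first secret attached to the service account.
  secret="$(kubectl -n "$ns" get sa "$sa" -o jsonpath='{.secrets[0].name}')" || return 1
  # The token is stored base64-encoded in the secret's data.
  kubectl -n "$ns" get secret "$secret" -o jsonpath='{.data.token}' | base64 -d
}

# Possible usage:
#   sa_token kubernetes-dashboard kubernetes-dashboard
```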

拷贝token,登录

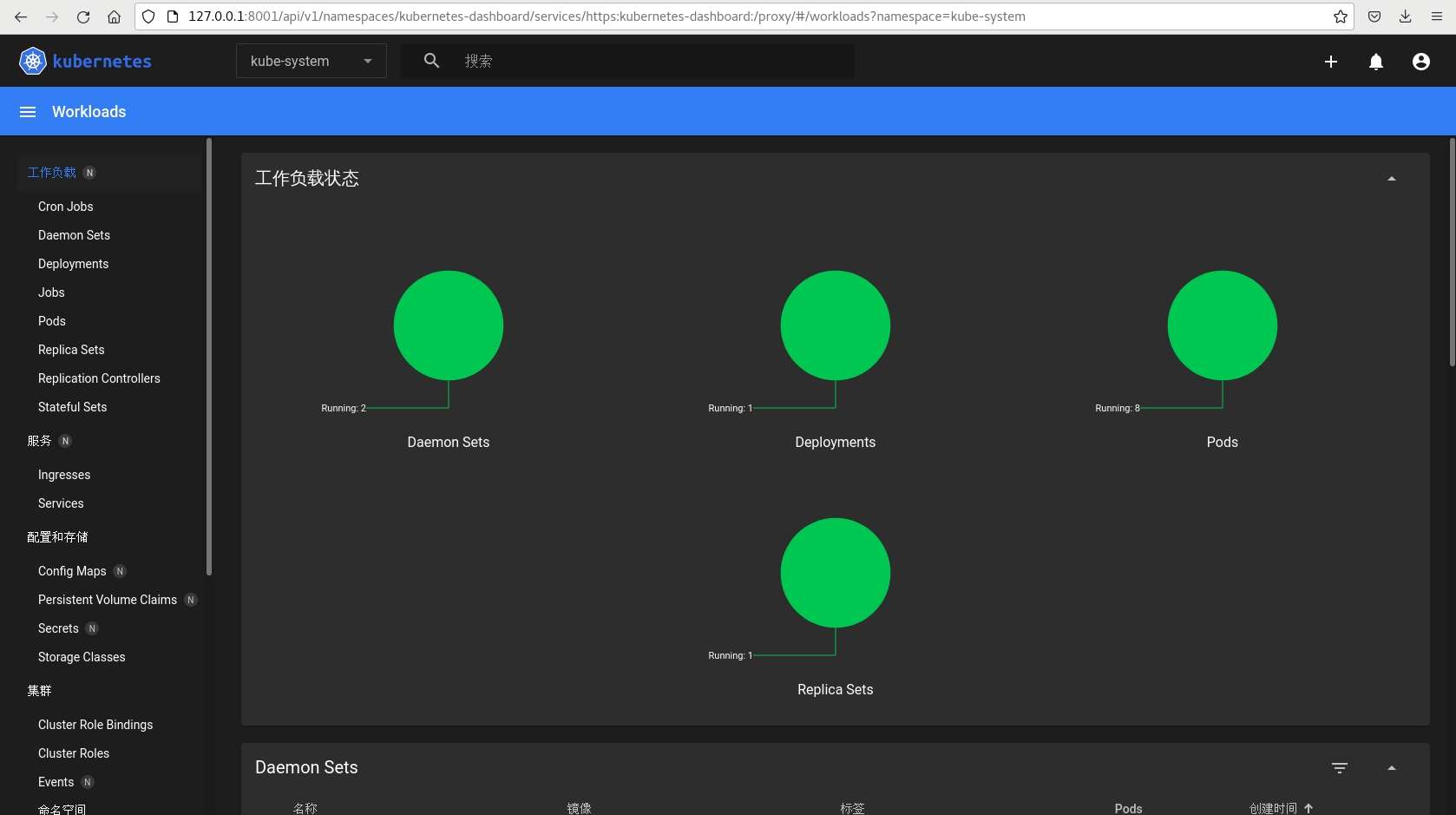

http://127.0.0.1:8001/api/v1/namespaces/kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy/#/loginx选择Token方式,输入token登录成功!

6. Changing the master node's taint

Change the master node's taint; otherwise new pods cannot be scheduled on this single node:

kubectl taint nodes localhost.localdomain node-role.kubernetes.io/master=:PreferNoSchedule

kubectl taint nodes localhost.localdomain node-role.kubernetes.io/master:NoSchedule-

kubectl describe nodes localhost.localdomain
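The commands above hardcode the node name localhost.localdomain, which depends on the VM's hostname. A small sketch that looks the name up first, assuming the cluster has exactly one node; relax_master_taint is a hypothetical helper name.

```shell
#!/bin/bash
# Apply the two taint changes to the first (and only) node, looking the
# node name up instead of hardcoding it.
relax_master_taint() {
  local node
  node="$(kubectl get nodes -o jsonpath='{.items[0].metadata.name}')" || return 1
  kubectl taint nodes "$node" node-role.kubernetes.io/master=:PreferNoSchedule
  kubectl taint nodes "$node" node-role.kubernetes.io/master:NoSchedule-
}

# Possible usage:
#   relax_master_taint
```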
