I have data with differing weights for each sample. In my application, it is important that these weights are accounted for in estimating the model and comparing alternative models.

I'm using sklearn to estimate models and to compare alternative hyperparameter choices. But the unit test below shows that GridSearchCV does not apply sample_weight when computing scores.

Is there a way to have sklearn use sample_weight to score the models?

Unit test:

from __future__ import division

import numpy as np
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import log_loss, make_scorer
from sklearn.model_selection import GridSearchCV, RepeatedKFold


def grid_cv(X_in, y_in, w_in, cv, max_features_grid, use_weighting):
    # Manual grid search: one list of per-fold scores for each max_features value.
    out_results = dict()

    for k in max_features_grid:
        clf = RandomForestClassifier(n_estimators=256,
                                     criterion="entropy",
                                     warm_start=False,
                                     n_jobs=-1,
                                     random_state=RANDOM_STATE,
                                     max_features=k)
        for train_ndx, test_ndx in cv.split(X=X_in, y=y_in):
            X_train = X_in[train_ndx, :]
            y_train = y_in[train_ndx]
            w_train = w_in[train_ndx]
            y_test = y_in[test_ndx]

            clf.fit(X=X_train, y=y_train, sample_weight=w_train)

            y_hat = clf.predict_proba(X=X_in[test_ndx, :])
            if use_weighting:
                # Scale each fold's weighted loss by its share of the total weight.
                w_test = w_in[test_ndx]
                w_i_sum = w_test.sum()
                score = w_i_sum / w_in.sum() * log_loss(y_true=y_test, y_pred=y_hat, sample_weight=w_test)
            else:
                score = log_loss(y_true=y_test, y_pred=y_hat)

            results = out_results.get(k, [])
            results.append(score)
            out_results.update({k: results})

    for k, v in out_results.items():
        if use_weighting:
            # The fold scores are already weight-scaled, so they are summed.
            mean_score = sum(v)
        else:
            mean_score = np.mean(v)
        out_results.update({k: mean_score})

    best_score = min(out_results.values())
    best_param = min(out_results, key=out_results.get)
    return best_score, best_param

if __name__ == "__main__":
    RANDOM_STATE = 1337
    X, y = load_iris(return_X_y=True)
    sample_weight = np.array([1 + 100 * (i % 25) for i in range(len(X))])
    # sample_weight = np.array([1 for _ in range(len(X))])

    inner_cv = RepeatedKFold(n_splits=3, n_repeats=1, random_state=RANDOM_STATE)
    outer_cv = RepeatedKFold(n_splits=3, n_repeats=1, random_state=RANDOM_STATE)

    rfc = RandomForestClassifier(n_estimators=256,
                                 criterion="entropy",
                                 warm_start=False,
                                 n_jobs=-1,
                                 random_state=RANDOM_STATE)
    search_params = {"max_features": [1, 2, 3, 4]}
    fit_params = {"sample_weight": sample_weight}

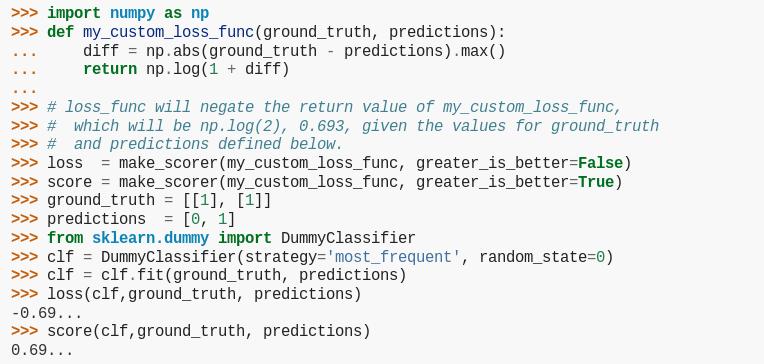

    my_scorer = make_scorer(log_loss,
                            greater_is_better=False,
                            needs_proba=True,
                            needs_threshold=False)

    grid_clf = GridSearchCV(estimator=rfc,
                            scoring=my_scorer,
                            cv=inner_cv,
                            param_grid=search_params,
                            refit=True,
                            return_train_score=False,
                            iid=False)  # in this usage, the results are the same for `iid=True` and `iid=False`

    grid_clf.fit(X, y, **fit_params)
    print("This is the best out-of-sample score using GridSearchCV: %.6f." % -grid_clf.best_score_)

    msg = """This is the best out-of-sample score %s weighting using grid_cv: %.6f."""

    score_with_weights, param_with_weights = grid_cv(X_in=X,
                                                     y_in=y,
                                                     w_in=sample_weight,
                                                     cv=inner_cv,
                                                     max_features_grid=search_params.get(
                                                         "max_features"),
                                                     use_weighting=True)
    print(msg % ("WITH", score_with_weights))

    score_without_weights, param_without_weights = grid_cv(X_in=X,
                                                           y_in=y,
                                                           w_in=sample_weight,
                                                           cv=inner_cv,
                                                           max_features_grid=search_params.get(
                                                               "max_features"),
                                                           use_weighting=False)
    print(msg % ("WITHOUT", score_without_weights))

Which produces output:

This is the best out-of-sample score using GridSearchCV: 0.135692.

This is the best out-of-sample score WITH weighting using grid_cv: 0.099367.

This is the best out-of-sample score WITHOUT weighting using grid_cv: 0.135692.

Explanation: Because the manually computed loss without weighting matches the GridSearchCV score exactly, we know that the sample weights are not being used when GridSearchCV scores the models.
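To make the weighted aggregation in grid_cv easier to check, here is a minimal pure-Python sketch. It assumes the weighted log loss is the weight-normalized average of per-sample losses. The toy labels, probabilities, weights, and fold split, as well as the helper names `weighted_log_loss` and `pick`, are hypothetical and not part of the test above. It illustrates that summing each fold's weighted loss scaled by that fold's share of the total weight gives the same number as the weighted loss over all held-out samples pooled together, and that this number generally differs from the unweighted loss.

```python
import math


def weighted_log_loss(y_true, proba, weights=None):
    """Weight-normalized mean negative log-likelihood (weights default to 1)."""
    if weights is None:
        weights = [1.0] * len(y_true)
    total = sum(weights)
    return -sum(w * math.log(p[y]) for y, p, w in zip(y_true, proba, weights)) / total


def pick(seq, idx):
    """Select the elements of seq at the positions listed in idx."""
    return [seq[i] for i in idx]


# Hypothetical toy data: true class index, predicted class probabilities, weight.
y_true = [0, 1, 1, 0]
proba = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4], [0.5, 0.5]]
weights = [1.0, 1.0, 10.0, 1.0]
folds = [[0, 1], [2, 3]]  # two hypothetical test folds

# Weighted loss over all held-out samples at once.
pooled = weighted_log_loss(y_true, proba, weights)

# Same aggregation as grid_cv with use_weighting=True:
# each fold's weighted loss times that fold's share of the total weight, summed.
by_fold = sum(
    sum(pick(weights, f)) / sum(weights)
    * weighted_log_loss(pick(y_true, f), pick(proba, f), pick(weights, f))
    for f in folds
)

# Loss that ignores the weights entirely.
unweighted = weighted_log_loss(y_true, proba)

print("pooled weighted:  %.6f" % pooled)
print("fold-aggregated:  %.6f" % by_fold)
print("unweighted:       %.6f" % unweighted)
```

In this toy case the heavily weighted sample is also the worst-predicted one, so the weighted loss comes out larger than the unweighted one. With other data the gap could go either way, as it does in the output above.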
