Setting up a k8s cluster on Ubuntu 22.04

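Cluster topology

One master and two worker nodes

Installation method

This guide uses kubeadm

Environment preparation

Prepare three machines with at least 2 CPU cores and 2 GB of RAM each, running Ubuntu 22.04. Physical machines and virtual machines both work.

Hostname resolution

Do the following on all three machines. Replace the IP addresses with your own; you can look them up with the ifconfig command.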

sudo vim /etc/hosts

# Paste the following entries into the file

# For testing only; in production use a DNS server

192.168.40.135 master

192.168.40.136 node1

192.168.40.137 node2
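
Since the same entries go into /etc/hosts on all three machines, it can be convenient to add them idempotently instead of pasting by hand. The following is a minimal sketch, assuming a hypothetical helper named add_host_entry (it is not part of any tool) that appends a line only when the hostname has no entry yet.

```shell
# add_host_entry FILE IP NAME (hypothetical helper):
# append "IP NAME" to FILE unless NAME already appears on a non-comment line.
add_host_entry() {
  file=$1 ip=$2 name=$3
  # Look for NAME as a whole field after the address column.
  if awk -v n="$name" '
       $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit !found }' "$file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

Run as root (for example inside a root shell), it could be called as add_host_entry /etc/hosts 192.168.40.135 master, and likewise for node1 and node2.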

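Network time synchronization

The clocks on all three machines must stay in sync. Here we use Chrony.

Chrony is a free, open-source implementation of the Network Time Protocol (NTP) that can act as both client and server. It keeps the system clock synchronized with an NTP time server so that the machine keeps accurate time, and it can also serve time to other machines.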

# Install

sudo apt install chrony

# Start and enable at boot (on Ubuntu the unit is named chrony; chronyd is only an alias)

sudo systemctl start chrony

sudo systemctl enable chrony

# Show the current time

date
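
Comparing the output of date by eye only shows that the clocks are roughly equal. As a sketch of a stricter check, the hypothetical helper chrony_synced below reads the output of chronyc tracking and succeeds only when Chrony reports a normal leap status, which it does once it has synchronized to a source.

```shell
# chrony_synced (hypothetical helper): read `chronyc tracking` output on stdin
# and succeed only if the "Leap status" line reports Normal.
chrony_synced() {
  grep -Eq '^Leap status[[:space:]]*:[[:space:]]*Normal'
}
```

On each machine it could be used as: chronyc tracking | chrony_synced && echo "clock is synchronized"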

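Disable system services

To keep requests between the machines from being blocked, turn off the firewall on all three machines. This is a shortcut for test environments; in production, open only the ports that are required. We also clear the iptables rules.

# Disable the firewall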

sudo ufw disable

# Flush iptables and accept all traffic

sudo iptables -F

sudo iptables -X

sudo iptables -Z

sudo iptables -P INPUT ACCEPT

sudo iptables -P OUTPUT ACCEPT

sudo iptables -P FORWARD ACCEPT

sudo modprobe -r ip_tables
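
For a production setup, where the firewall stays on and only the required ports are opened, the rules can be generated per role. This is a sketch under assumptions: the port numbers follow the Kubernetes "Ports and Protocols" documentation plus flannel's default VXLAN port (UDP 8472), and k8s_ports is a hypothetical helper name.

```shell
# k8s_ports ROLE (hypothetical helper): print the ufw rules for a node role.
# Ranges use ufw's "from:to/proto" syntax.
k8s_ports() {
  case $1 in
    master)
      # API server, etcd, kubelet, controller-manager, scheduler, flannel VXLAN
      printf '%s\n' 6443/tcp 2379:2380/tcp 10250/tcp 10257/tcp 10259/tcp 8472/udp ;;
    worker)
      # kubelet, NodePort services, flannel VXLAN
      printf '%s\n' 10250/tcp 30000:32767/tcp 8472/udp ;;
    *)
      echo "usage: k8s_ports master|worker" >&2
      return 1 ;;
  esac
}
```

On the master the rules could then be applied with: for rule in $(k8s_ports master); do sudo ufw allow "$rule"; done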

Disable swap

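Older versions of k8s require swap to be turned off. Swap support reached beta in 1.28, but it still has to be enabled explicitly in the kubelet configuration, so for the 1.23 release installed in this guide swap must be disabled.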

sudo vim /etc/fstab

# Comment out the line that refers to swap
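
The same edit can be scripted so it is identical on all three machines. A minimal sketch, assuming a hypothetical helper comment_out_swap that prefixes every active swap entry with # and leaves a .bak copy of the file:

```shell
# comment_out_swap FILE (hypothetical helper): comment out every line that is
# not already a comment and has "swap" as a whitespace-separated field.
# A backup is kept as FILE.bak.
comment_out_swap() {
  sed -i.bak -E '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}
```

Run as root with /etc/fstab as the argument. The fstab change only applies from the next boot; sudo swapoff -a turns swap off immediately.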

Adjust Linux kernel parameters

sudo vim /etc/sysctl.d/kubernetes.conf

# Insert the following content

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

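# Load the bridge netfilter module first (the net.bridge.* keys only exist once it is loaded)

sudo modprobe br_netfilter

# Check that the bridge netfilter module is loaded

lsmod | grep br_netfilter

# Reload the configuration (plain sysctl -p reads only /etc/sysctl.conf, not /etc/sysctl.d)

sudo sysctl --system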
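
To confirm that the three kernel parameters are really in effect, their values can be read back from /proc/sys. The sketch below uses two hypothetical helpers, sysctl_path and check_sysctl; it relies only on the fact that a sysctl key maps to a path under /proc/sys with the dots replaced by slashes.

```shell
# sysctl_path KEY (hypothetical helper): map a sysctl key to its /proc/sys path.
sysctl_path() {
  printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr . /)"
}

# check_sysctl KEY VALUE (hypothetical helper): succeed if KEY currently equals VALUE.
check_sysctl() {
  path=$(sysctl_path "$1")
  [ -r "$path" ] && [ "$(cat "$path")" = "$2" ]
}

# Report the state of the three parameters used in this guide.
for key in net.bridge.bridge-nf-call-ip6tables \
           net.bridge.bridge-nf-call-iptables \
           net.ipv4.ip_forward; do
  if check_sysctl "$key" 1; then
    echo "$key = 1"
  else
    echo "$key is not set to 1"
  fi
done
```

A key that is reported as not set usually means either a misspelled name in the configuration file or, for the net.bridge keys, that br_netfilter is not loaded.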

Configure IPVS

sudo apt install ipset ipvsadm

# List the IPVS modules to load at boot

sudo tee /etc/modules-load.d/ipvs.conf << EOF

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack

EOF

# Create a script that loads the modules

cat << EOF | tee ipvs.sh

#!/bin/sh

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

EOF

# Run the script

sudo sh ipvs.sh

# Verify

lsmod | grep ip_vs
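
The grep above shows what is loaded but does not say whether anything from the list is missing. As a sketch, the hypothetical helper missing_modules compares a modules-load.d file against /proc/modules and prints every module that is absent. Modules compiled into the kernel do not appear in /proc/modules, so on such kernels a reported name is not necessarily an error.

```shell
# missing_modules CONF [LOADED] (hypothetical helper): print each module named
# in CONF that has no entry in LOADED (default: /proc/modules).
missing_modules() {
  conf=$1 loaded=${2:-/proc/modules}
  # Skip blank lines and comments in the modules-load.d file.
  grep -Ev '^[[:space:]]*(#|$)' "$conf" | while read -r mod; do
    # /proc/modules has one module per line with the name as the first field.
    grep -q "^$mod " "$loaded" || echo "$mod"
  done
}
```

Typical use: missing_modules /etc/modules-load.d/ipvs.conf, where empty output means every listed module is loaded.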

Reboot

Reboot every machine so that the configuration above takes effect.

sudo reboot

Docker

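Installation

Docker's official download sources are currently not reachable from mainland China, so we install from a domestic mirror.

# Step 1: install the required system tools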

sudo apt-get update

sudo apt-get -y install apt-transport-https ca-certificates curl software-properties-common

# Step 2: install the GPG key

curl -fsSL https://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | sudo apt-key add -

# Step 3: add the package repository

sudo add-apt-repository "deb [arch=amd64] https://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable"

# Step 4: update the package index and install Docker CE

sudo apt-get -y update

sudo apt-get -y install docker-ce

# To install a specific version of Docker CE:

# Step 1: list the available Docker CE versions:

# apt-cache madison docker-ce

# docker-ce | 17.03.1~ce-0~ubuntu-xenial | https://mirrors.aliyun.com/docker-ce/linux/ubuntu xenial/stable amd64 Packages

# docker-ce | 17.03.0~ce-0~ubuntu-xenial | https://mirrors.aliyun.com/docker-ce/linux/ubuntu xenial/stable amd64 Packages

# Step 2: install the chosen version (VERSION is, for example, 17.03.1~ce-0~ubuntu-xenial from the list above)

# sudo apt-get -y install docker-ce=[VERSION]

Switch Docker to domestic registry mirrors

sudo vim /etc/docker/daemon.json

Registry mirrors that still work from mainland China at the time of writing

{

"registry-mirrors": [

"https://docker.m.daocloud.io",

"https://noohub.ru",

"https://huecker.io",

"https://dockerhub.timeweb.cloud"

]

}

Restart Docker so that the configuration takes effect

sudo systemctl daemon-reload

sudo systemctl restart docker

Install k8s

# Install the prerequisites

sudo apt-get install -y ca-certificates curl software-properties-common apt-transport-https

sudo curl -s https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | sudo apt-key add -

# Add the Aliyun k8s repository

sudo sh -c "echo 'deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main' >> /etc/apt/sources.list.d/kubernetes.list"

# Update the package index

sudo apt-get update -y

# Install kubeadm, kubectl, and kubelet

sudo apt-get install -y kubelet=1.23.1-00 kubeadm=1.23.1-00 kubectl=1.23.1-00

# Hold the packages so that apt upgrade skips them. To upgrade later, unhold them first, upgrade, then hold them again.

sudo apt-mark hold kubelet kubeadm kubectl

Prepare the cluster images

Pull the required images with Docker from a domestic mirror

# Pull from the domestic mirror

sudo docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.23.8

sudo docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.23.8

sudo docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.23.8

sudo docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.8

sudo docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6

sudo docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0

sudo docker pull coredns/coredns:1.8.6

Tag the images with Docker

# Retag the pulled images with the names that kubeadm expects

sudo docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.23.8 k8s.gcr.io/kube-apiserver:v1.23.8

sudo docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.23.8 k8s.gcr.io/kube-controller-manager:v1.23.8

sudo docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.23.8 k8s.gcr.io/kube-scheduler:v1.23.8

sudo docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.8 k8s.gcr.io/kube-proxy:v1.23.8

sudo docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6 k8s.gcr.io/pause:3.6

sudo docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0 k8s.gcr.io/etcd:3.5.1-0

sudo docker tag coredns/coredns:1.8.6 k8s.gcr.io/coredns/coredns:v1.8.6

All of the steps above must be done on every machine.
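
The pull and tag commands follow one pattern: the mirror prefix is replaced by k8s.gcr.io and the rest of the name stays the same. A sketch of that pattern as a loop, using the hypothetical helpers mirror_to_gcr and pull_and_tag; the coredns image comes from a different repository and is still handled separately, as in the commands above.

```shell
MIRROR=registry.cn-hangzhou.aliyuncs.com/google_containers

# mirror_to_gcr IMAGE (hypothetical helper): rewrite a mirror image name to
# the k8s.gcr.io name used in the tag commands above.
mirror_to_gcr() {
  printf 'k8s.gcr.io/%s\n' "${1#"$MIRROR"/}"
}

# pull_and_tag (hypothetical helper): pull each image from the mirror and
# retag it. Defined here but not called, since it needs Docker and root.
pull_and_tag() {
  for image in kube-apiserver:v1.23.8 kube-controller-manager:v1.23.8 \
               kube-scheduler:v1.23.8 kube-proxy:v1.23.8 pause:3.6 etcd:3.5.1-0; do
    sudo docker pull "$MIRROR/$image" &&
      sudo docker tag "$MIRROR/$image" "$(mirror_to_gcr "$MIRROR/$image")"
  done
}
```

Calling pull_and_tag on each machine performs the six mirror pulls and retags in one step.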

Deploy the master

kubeadm-config.yaml. Change advertiseAddress to the address of your own master.

apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.40.135
  bindPort: 6443
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  imagePullPolicy: IfNotPresent
  name: master
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: 1.23.1
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
scheduler: {}
---
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
cgroupDriver: systemd
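
Before initializing, it is worth checking that kubernetesVersion in the file matches the kubeadm package that was installed. The sketch below reads the value back with a hypothetical helper named config_version.

```shell
# config_version FILE (hypothetical helper): print the kubernetesVersion value
# from a kubeadm configuration file.
config_version() {
  awk '$1 == "kubernetesVersion:" { print $2; exit }' "$1"
}
```

On the master, "v$(config_version kubeadm-config.yaml)" can be compared with the output of kubeadm version -o short. The images this configuration will use can be listed with kubeadm config images list --config kubeadm-config.yaml.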

Initialize

sudo kubeadm init --config kubeadm-config.yaml

After initialization completes, run the following commands

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Fixing a CrashLoopBackOff error in coredns

You may find that coredns fails to start at this point.

Edit /etc/resolv.conf and change the address after nameserver to the master's address.

Install the network plugin

kubectl apply -f flannel.yaml

flannel.yaml

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    k8s-app: flannel
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "EnableNFTables": false,
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
    k8s-app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: docker.io/flannel/flannel-cni-plugin:v1.5.1-flannel1
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: docker.io/flannel/flannel:v0.25.4
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: docker.io/flannel/flannel:v0.25.4
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

Join the nodes to the master

sudo kubeadm join 192.168.40.135:6443 --token ijidj9.372c0a4xkbcbbsec --discovery-token-ca-cert-hash sha256:62be027a15f6708883177c471428e47378854e536bb8d93cfcc81f33fd147cc8

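This is only an example. When the master finishes initializing it prints a similar command; copy it and run it on the worker nodes. If you cannot find the command, run sudo kubeadm token create --print-join-command on the master to get it.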
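
When the join command is copied by hand between terminals, a truncated token or hash is an easy mistake to make. The sketch below checks the two values against the formats kubeadm uses (a token of the form [a-z0-9]{6}.[a-z0-9]{16} and a sha256: prefix followed by 64 hex digits); valid_join_args is a hypothetical helper name, and it checks the shape only, not whether the token is still valid on the master.

```shell
# valid_join_args TOKEN HASH (hypothetical helper): succeed only if both
# values have the shape that kubeadm join expects.
valid_join_args() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$' &&
    printf '%s\n' "$2" | grep -Eq '^sha256:[0-9a-f]{64}$'
}
```

For example, valid_join_args "$TOKEN" "$HASH" || echo "join arguments look truncated" can be run on a worker before kubeadm join.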

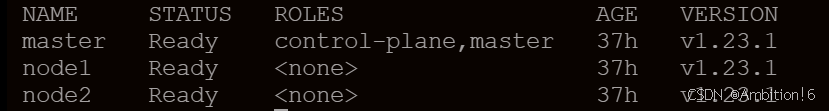

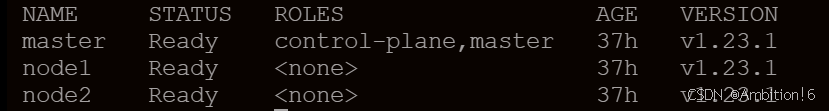

Result

Run the following command on the master. If the output looks like the screenshot, the cluster has been set up successfully.

kubectl get nodes
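
The same check can be made without reading the table by eye. A minimal sketch, assuming a hypothetical helper not_ready_nodes that reads the output of kubectl get nodes --no-headers and prints the name of every node whose STATUS column is anything other than exactly Ready:

```shell
# not_ready_nodes (hypothetical helper): read `kubectl get nodes --no-headers`
# output on stdin and print the names of nodes whose status is not "Ready".
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}
```

Usage on the master: kubectl get nodes --no-headers | not_ready_nodes. Empty output means all three nodes are Ready; nodes usually stay NotReady until the flannel pods are running.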
