k8s High-Availability Cluster Installation


Environment preparation

k8s version 1.26.8

Docker 20.10.22-3.el7

k8s11 192.168.0.11

k8s12 192.168.0.12

k8s13 192.168.0.13

k8s14 192.168.0.14

k8s15 192.168.0.15

192.168.0.200 floating IP (VIP)
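Later steps address the nodes by hostname (for example in the scp commands), so every node needs to resolve those names. A minimal sketch, assuming name resolution is done through /etc/hosts; the function name is only illustrative:

```shell
#!/bin/sh
# Node table taken from the list above, as "name:ip" pairs.
NODES="k8s11:192.168.0.11 k8s12:192.168.0.12 k8s13:192.168.0.13 k8s14:192.168.0.14 k8s15:192.168.0.15"

# Print one /etc/hosts line ("IP hostname") per node.
print_hosts_entries() {
  for node in $NODES; do
    name=${node%%:*}
    ip=${node#*:}
    printf '%s %s\n' "$ip" "$name"
  done
}

# Review the output first, then append it on every node, e.g.:
#   print_hosts_entries >> /etc/hosts
print_hosts_entries
```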

yum install docker-ce-20.10.22-3.el7 -y

# Install pinned versions

yum install kubelet-1.26.8 kubeadm-1.26.8 kubectl-1.26.8 -y

Docker preparation

Install on all nodes

Add the Docker yum repository

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum makecache fast

Install Docker

yum install docker-ce-20.10.22-3.el7 -y

Start the Docker service and enable it at boot

systemctl enable docker && systemctl start docker

# or

systemctl enable --now docker

Switch the cgroup driver to systemd and configure a Docker registry mirror

cat <<EOF > /etc/docker/daemon.json

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://pawd5kdt.mirror.aliyuncs.com"]

}

EOF

# Restart Docker

systemctl daemon-reload

systemctl restart docker

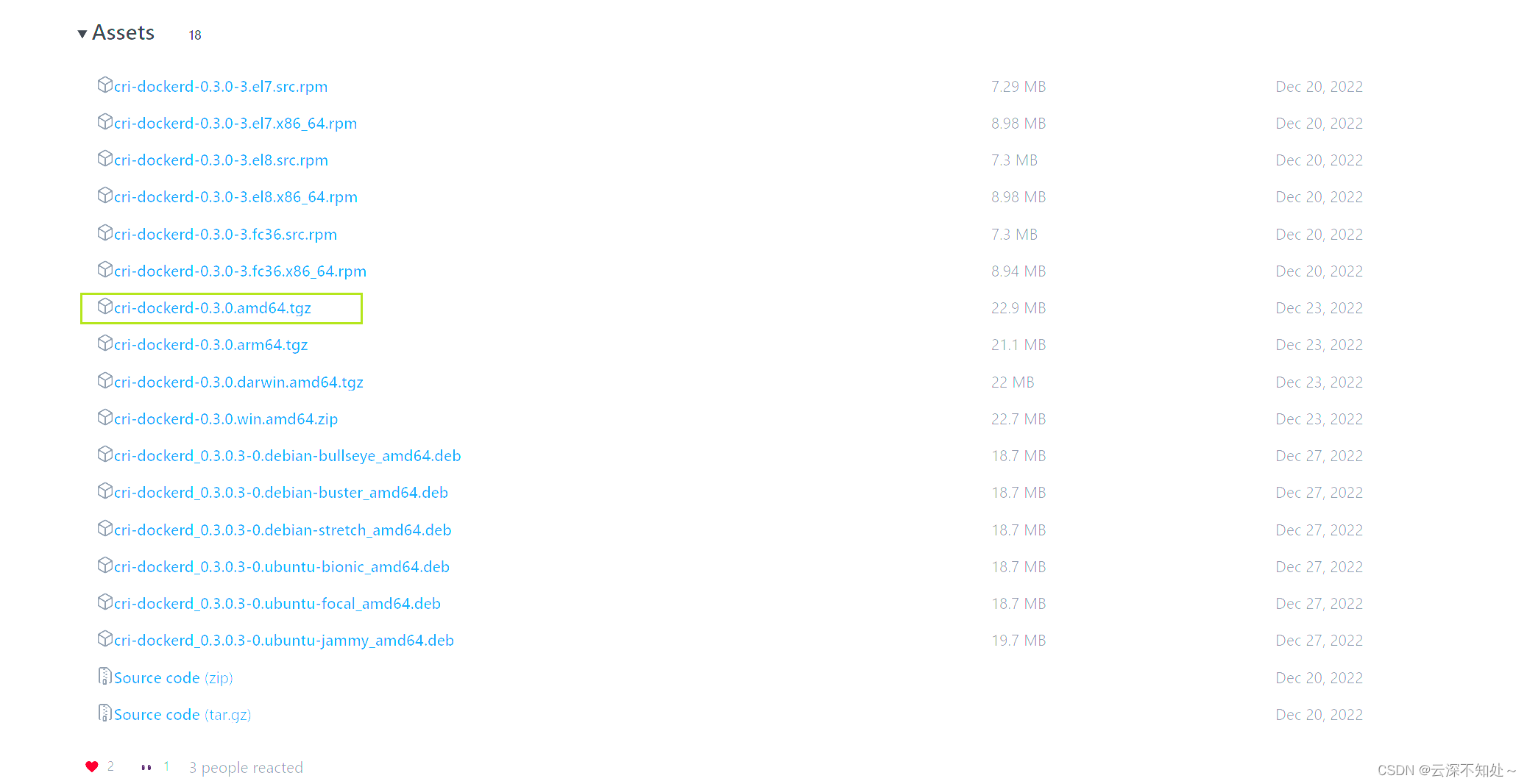
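After the restart it is worth confirming that Docker really reports the systemd cgroup driver. The helper below is a small sketch with a hypothetical function name; `docker info --format '{{.CgroupDriver}}'` is the standard way to read the value.

```shell
# Succeeds only when the reported driver equals the expected one (default: systemd).
cgroup_driver_matches() {
  reported=$1
  expected=${2:-systemd}
  [ "$reported" = "$expected" ]
}

# On a node with Docker running:
#   cgroup_driver_matches "$(docker info --format '{{.CgroupDriver}}')" \
#     && echo "cgroup driver OK" || echo "cgroup driver mismatch"
```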

Install cri-dockerd

Download / upload / extract

https://github.com/Mirantis/cri-dockerd/tags

tar -zxvf cri-dockerd-0.3.0.amd64.tgz -C /usr/local/software/

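Set permissions / copy

# The archive extracts into a cri-dockerd/ directory

cd /usr/local/software/cri-dockerd

chmod +x cri-dockerd

# Run on node k8s11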

cp cri-dockerd /usr/local/bin/

scp cri-dockerd k8s12:/usr/local/bin/

scp cri-dockerd k8s13:/usr/local/bin/

scp cri-dockerd k8s14:/usr/local/bin/

scp cri-dockerd k8s15:/usr/local/bin/
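The four scp commands above differ only in the host name, so they can be generated in a loop. This is a sketch that only prints the commands (a dry run); the variable and function names are illustrative.

```shell
# Hosts that receive the binary from k8s11 (names from the commands above).
TARGETS="k8s12 k8s13 k8s14 k8s15"

# Print the scp command for each target; pipe the output to "sh" to execute it.
cri_dockerd_copy_commands() {
  for host in $TARGETS; do
    printf 'scp cri-dockerd %s:/usr/local/bin/\n' "$host"
  done
}

cri_dockerd_copy_commands
```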

Configure cri-docker.service

cat <<"EOF" > /etc/systemd/system/cri-docker.service

[Unit]

Description=CRI Interface for Docker Application Container Engine

Documentation=https://docs.mirantis.com

After=network-online.target firewalld.service docker.service

Wants=network-online.target

Requires=cri-docker.socket

[Service]

Type=notify

ExecStart=/usr/local/bin/cri-dockerd --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.9

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=always

StartLimitBurst=3

StartLimitInterval=60s

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

Delegate=yes

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

Configure cri-docker.socket

cat <<"EOF" > /etc/systemd/system/cri-docker.socket

[Unit]

Description=CRI Docker Socket for the API

PartOf=cri-docker.service

[Socket]

ListenStream=%t/cri-dockerd.sock

SocketMode=0660

SocketUser=root

SocketGroup=docker

[Install]

WantedBy=sockets.target

EOF

Start cri-docker and enable it at boot

systemctl daemon-reload

systemctl enable cri-docker --now

systemctl is-active cri-docker
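kubeadm later talks to the runtime through unix:///var/run/cri-dockerd.sock, so besides `systemctl is-active` it can help to wait for that socket to appear. A minimal sketch; the function name and the timeout are assumptions.

```shell
# Wait until a unix socket exists; gives up after the given number of seconds.
wait_for_socket() {
  path=$1
  tries=${2:-10}
  while [ "$tries" -gt 0 ]; do
    [ -S "$path" ] && return 0
    sleep 1
    tries=$((tries - 1))
  done
  # Final check, also used when the timeout is 0.
  [ -S "$path" ]
}

# wait_for_socket /var/run/cri-dockerd.sock 15 && echo "cri-dockerd socket is ready"
```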

High-availability components

- Install on all master nodes

yum -y install keepalived haproxy

- haproxy configuration file

Use the same configuration on all three master nodes: k8s11, k8s12, k8s13

vim /etc/haproxy/haproxy.cfg

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

# turn on stats unix socket

stats socket /var/lib/haproxy/stats

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

#---------------------------------------------------------------------

# frontend: accepts kube-apiserver traffic on port 9443 and passes it to the backend

#---------------------------------------------------------------------

frontend apiserver

bind *:9443

mode tcp

option tcplog

default_backend apiserver

#---------------------------------------------------------------------

# backend: the kube-apiserver instance on each master node

#---------------------------------------------------------------------

backend apiserver

mode tcp

balance roundrobin

server k8s11 192.168.0.11:6443 check

server k8s12 192.168.0.12:6443 check

server k8s13 192.168.0.13:6443 check

#---------------------------------------------------------------------

# statistics page

#---------------------------------------------------------------------

listen admin_stats

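bind *:8888 # address and port of the stats page

mode http # the stats page is served over HTTP

log 127.0.0.1 local0 err # log level: errors only

stats refresh 30s

stats uri /stats # path of the stats page; the URL is IP:8888/stats

stats realm welcome login\ Haproxy

stats auth admin:admin123 # username and password for the web page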

stats hide-version

stats admin if TRUE
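Before starting haproxy, the file can be syntax-checked with `haproxy -c -f /etc/haproxy/haproxy.cfg`. To double-check that all three masters are listed as backend servers, a small awk helper is enough. This is a sketch; the function name is hypothetical.

```shell
# Print "name address" for every "server" line in the given haproxy.cfg.
list_backend_servers() {
  awk '$1 == "server" { print $2, $3 }' "$1"
}

# haproxy -c -f /etc/haproxy/haproxy.cfg        # syntax check, needs haproxy installed
# list_backend_servers /etc/haproxy/haproxy.cfg
```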

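- Start haproxy

# Enable at boot

systemctl enable haproxy

# Start haproxy

systemctl start haproxy

# Check the status

systemctl status haproxy

Access

192.168.0.11:8888/stats access through a specific node IP

192.168.0.200:8888/stats access through the VIP (available once keepalived is running)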
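The stats page can also be read from a script: HAProxy returns CSV when `;csv` is appended to the stats URI. The sketch below prints the state of each entry in the apiserver proxy; it finds the columns by their header names rather than by position. The function name is an assumption, and the credentials are the ones from the configuration above.

```shell
# Read HAProxy stats CSV on stdin and print "svname status" for the apiserver proxy.
summarize_stats_csv() {
  awk -F, '
    NR == 1 {
      sub(/^# /, "", $1)                        # header line starts with "# pxname"
      for (i = 1; i <= NF; i++) col[$i] = i     # map column name to its index
      next
    }
    $(col["pxname"]) == "apiserver" { print $(col["svname"]), $(col["status"]) }
  '
}

# curl -s -u admin:admin123 'http://192.168.0.200:8888/stats;csv' | summarize_stats_csv
```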

- keepalived configuration

k8s11

cat > /etc/keepalived/keepalived.conf <<EOF

global_defs {

router_id k8s11

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 3

# weight must exceed the 50-point priority gap, or a failed haproxy never moves the VIP

weight -60

fall 10

rise 2

}

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 42

priority 250

advert_int 1

authentication {

auth_type PASS

auth_pass ceb1b3ec013d66163d6ab

}

virtual_ipaddress {

192.168.0.200

}

track_script {

check_haproxy

}

}

EOF

k8s12

cat > /etc/keepalived/keepalived.conf <<EOF

global_defs {

router_id k8s12

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 3

# weight must exceed the 50-point priority gap, or a failed haproxy never moves the VIP

weight -60

fall 10

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 42

priority 200

advert_int 1

authentication {

auth_type PASS

auth_pass ceb1b3ec013d66163d6ab

}

virtual_ipaddress {

192.168.0.200

}

track_script {

check_haproxy

}

}

EOF

k8s13

cat > /etc/keepalived/keepalived.conf <<EOF

global_defs {

router_id k8s13

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 3

# weight must exceed the 50-point priority gap, or a failed haproxy never moves the VIP

weight -60

fall 10

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 42

priority 150

advert_int 1

authentication {

auth_type PASS

auth_pass ceb1b3ec013d66163d6ab

}

virtual_ipaddress {

192.168.0.200

}

track_script {

check_haproxy

}

}

EOF
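While the check script is failing, keepalived adds its weight to the node's priority, and the VIP only moves if the result drops below the priority of the next node. With priorities of 250, 200 and 150, the weight therefore has to outweigh a gap of 50. The helper below is a sketch of that arithmetic; the function name is illustrative.

```shell
# Succeeds when a failed check lowers the holder's priority below the next node's.
# Arguments: holder priority, script weight (negative), next-highest priority.
check_triggers_failover() {
  effective=$(( $1 + $2 ))
  [ "$effective" -lt "$3" ]
}

# 250 - 2 = 248, still above 200
check_triggers_failover 250 -2 200 && echo "failover" || echo "VIP stays on k8s11"
# 250 - 60 = 190, below 200
check_triggers_failover 250 -60 200 && echo "failover" || echo "VIP stays on k8s11"
```

The first call prints "VIP stays on k8s11" and the second prints "failover".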

- Start keepalived

# Start keepalived

systemctl start keepalived.service

# Enable at boot

systemctl enable keepalived.service

# Check the status

systemctl status keepalived.service
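Once keepalived is running on all three masters, exactly one of them should hold 192.168.0.200. A quick way to see which one is to look for the address in the `ip` output on each node. A minimal sketch; the function name is hypothetical and ens33 is the interface from the configuration above.

```shell
# Reads "ip -4 addr" output on stdin; succeeds if the given address is bound.
has_address() {
  grep -qF "inet $1/"
}

# ip -4 addr show dev ens33 | has_address 192.168.0.200 && echo "this node holds the VIP"
```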

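Install k8s

# Install on all nodes

yum install kubelet-1.26.8 kubeadm-1.26.8 kubectl-1.26.8 -y

Configure kubelet

To keep the cgroup driver used by kubelet consistent with the cgroupdriver used by docker, it is recommended to modify the following file.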

cat <<EOF > /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--cgroup-driver=systemd"

EOF

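# Enable kubelet at boot

# Enabling is enough for now: no configuration file exists yet, and kubelet starts automatically once the cluster is initialized

systemctl enable kubelet

# Note: do not start kubelet at this point; its configuration only takes effect after the cluster has been set up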

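List and pull the images

List the images

kubeadm config images list

Pull the images

kubeadm config images pull

# or, through the cri-dockerd socket and from the Aliyun mirror

kubeadm config images pull --cri-socket unix:///var/run/cri-dockerd.sock \
  --image-repository registry.aliyuncs.com/google_containers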

Generate the configuration file

kubeadm config print init-defaults > kubeadm-config.yaml

kubeadm config print init-defaults --component-configs KubeletConfiguration

kubeadm config print init-defaults --component-configs KubeProxyConfiguration

vim kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.0.11
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///var/run/cri-dockerd.sock
  imagePullPolicy: IfNotPresent
---
apiServer:
  timeoutForControlPlane: 4m0s
  certSANs:
  - 192.168.0.200
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: 1.26.8
networking:
  dnsDomain: cluster.local
  podSubnet: 10.244.0.0/16
  serviceSubnet: 10.96.0.0/12
scheduler: {}
controlPlaneEndpoint: "192.168.0.200:9443"
---

apiVersion: kubelet.config.k8s.io/v1beta1
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.crt
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 0s
    cacheUnauthorizedTTL: 0s
cgroupDriver: systemd
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
cpuManagerReconcilePeriod: 0s
evictionPressureTransitionPeriod: 0s
fileCheckFrequency: 0s
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 0s
imageMinimumGCAge: 0s
kind: KubeletConfiguration
logging:
  flushFrequency: 0
  options:
    json:
      infoBufferSize: "0"
  verbosity: 0
memorySwap: {}
nodeStatusReportFrequency: 0s
nodeStatusUpdateFrequency: 0s
rotateCertificates: true
runtimeRequestTimeout: 0s
shutdownGracePeriod: 0s
shutdownGracePeriodCriticalPods: 0s
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 0s
syncFrequency: 0s
volumeStatsAggPeriod: 0s
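The controlPlaneEndpoint has to point at the VIP and the haproxy frontend port (192.168.0.200:9443), otherwise the other nodes join against a single master instead of the load balancer. A small sketch for checking the value before running init; the function name is an assumption.

```shell
# Print the controlPlaneEndpoint value from a kubeadm config file, without quotes.
control_plane_endpoint() {
  sed -n 's/^controlPlaneEndpoint:[[:space:]]*//p' "$1" | tr -d '"'
}

# [ "$(control_plane_endpoint kubeadm-config.yaml)" = "192.168.0.200:9443" ] \
#   || echo "controlPlaneEndpoint does not match VIP:port"
# kubeadm init --config=kubeadm-config.yaml --dry-run   # runs the init steps without applying changes
```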

Cluster initialization

kubeadm init --config=kubeadm-config.yaml --upload-certs --v=9
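When init succeeds, kubeadm asks you to copy /etc/kubernetes/admin.conf to $HOME/.kube/config before using kubectl. The helper below is a sketch of that step with a hypothetical function name. It restricts the copy to the current user with chmod 600, whereas kubeadm's own instructions use chown.

```shell
# Copy an admin kubeconfig to the user's kubeconfig path and restrict its permissions.
install_kubeconfig() {
  src=${1:-/etc/kubernetes/admin.conf}
  dest=${2:-$HOME/.kube/config}
  mkdir -p "$(dirname "$dest")" && cp "$src" "$dest" && chmod 600 "$dest"
}

# install_kubeconfig && kubectl get nodes
```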

Join the other master nodes

- Copy the k8s certificates to the other master nodes

# Files to copy

/etc/kubernetes/pki/ca.crt

/etc/kubernetes/pki/ca.key

/etc/kubernetes/pki/sa.key

/etc/kubernetes/pki/sa.pub

/etc/kubernetes/pki/front-proxy-ca.crt

/etc/kubernetes/pki/front-proxy-ca.key

/etc/kubernetes/pki/etcd/ca.key

/etc/kubernetes/pki/etcd/ca.crt

vim aa.txt

/etc/kubernetes/pki/ca.crt

/etc/kubernetes/pki/ca.key

/etc/kubernetes/pki/sa.key

/etc/kubernetes/pki/sa.pub

/etc/kubernetes/pki/front-proxy-ca.crt

/etc/kubernetes/pki/front-proxy-ca.key

/etc/kubernetes/pki/etcd/ca.key

/etc/kubernetes/pki/etcd/ca.crt

# Create the archive

tar czf cert.tar.gz -T aa.txt

# List its contents

tar tf cert.tar.gz

# Copy to the other masters

scp cert.tar.gz k8s12:$PWD

scp cert.tar.gz k8s13:$PWD

# Extract into place (run on k8s12 and k8s13)

tar zxf cert.tar.gz -C /

# Join a master node

kubeadm join 192.168.0.200:9443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:7656cff0d7180b1a126503e494c470a6d611af46876170eb1cc3cbc601a03573 --control-plane --certificate-key 78959298f66f35ec2f0b9c1c57ad894e7f26fc8705022cf6c2de3b835ed9e8e8 --cri-socket unix:///var/run/cri-dockerd.sock

# Join a worker node

kubeadm join 192.168.0.200:9443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:7656cff0d7180b1a126503e494c470a6d611af46876170eb1cc3cbc601a03573 --cri-socket unix:///var/run/cri-dockerd.sock
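The hash and keys in the join commands above are specific to the cluster that produced them, and the token expires after the 24h TTL set in the config. The sha256 value can be recomputed from the CA certificate with the openssl pipeline from the kubeadm documentation. The function wrapper below is only a sketch, and its name is an assumption.

```shell
# Print the sha256 hash of a CA certificate's public key, in the form expected by
# --discovery-token-ca-cert-hash (prefix the result with "sha256:").
ca_cert_hash() {
  openssl x509 -pubkey -noout -in "$1" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# echo "sha256:$(ca_cert_hash /etc/kubernetes/pki/ca.crt)"
# kubeadm token create --print-join-command      # new token plus a ready-made join command
# kubeadm init phase upload-certs --upload-certs # new --certificate-key for control-plane joins
```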

Cluster reset

kubeadm reset --cri-socket unix:///var/run/cri-dockerd.sock

rm -rf $HOME/.kube/config

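Deploy the calico network plugin

# Run on master node k8s11

# Download the calico manifest:

wget https://docs.projectcalico.org/manifests/calico.yaml --no-check-certificate

# After downloading, edit the Pod network defined in the manifest (CALICO_IPV4POOL_CIDR) so that it matches the podSubnet in kubeadm-config.yaml (the equivalent of kubeadm init's --pod-network-cidr)

- name: CALICO_IPV4POOL_CIDR
  value: "10.244.0.0/16"

# Apply the manifest; the calico images are pulled as the pods start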

kubectl apply -f calico.yaml
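The CIDR edit can also be scripted instead of done by hand. The sketch below assumes the downloaded manifest still contains the two lines commented out with the default value "192.168.0.0/16", as Calico manifests commonly do. Check your copy first, since the layout may differ between versions. The function name is illustrative.

```shell
# Uncomment CALICO_IPV4POOL_CIDR in a Calico manifest and set it to the given CIDR.
# Assumes the manifest has the commented-out default "192.168.0.0/16".
set_calico_pool_cidr() {
  manifest=$1
  cidr=$2
  sed -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
      -e 's|#   value: "192.168.0.0/16"|  value: "'"$cidr"'"|' \
      "$manifest" > "$manifest.tmp" && mv "$manifest.tmp" "$manifest"
}

# set_calico_pool_cidr calico.yaml 10.244.0.0/16
# grep -A1 CALICO_IPV4POOL_CIDR calico.yaml   # verify the result before applying
```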

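Uninstall

# Reset first: kubeadm must still be installed for this step

sudo kubeadm reset -f --cri-socket unix:///var/run/cri-dockerd.sock

yum -y remove kubelet kubeadm kubectl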

sudo rm -rvf ~/.kube/

sudo rm -rvf /etc/kubernetes/

sudo rm -rvf /etc/systemd/system/kubelet.service.d

sudo rm -rvf /etc/systemd/system/kubelet.service

sudo rm -rvf /usr/bin/kube*

sudo rm -rvf /etc/cni

sudo rm -rvf /opt/cni

sudo rm -rvf /var/lib/etcd

sudo rm -rvf /var/etcd
