Deploying Nacos on k8s: failed to req API:/api//nacos/v1/ns/instance after all servers([nacos-headless.nacos:8848

Services cannot register with a Nacos cluster deployed on k8s

Problem description

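After a test deployment of a Nacos cluster on k8s, every Nacos node started normally and the Nacos console was reachable, but other services failed to register when they started.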

Nacos version: 2.0.3

The error:

```text
2024-03-23 08:00:35.725 ERROR --- [ main] io.seata.server.Server : nettyServer init error:ErrCode:503, ErrMsg:failed to req API:/api//nacos/v1/ns/instance after all servers([nacos-headless.nacos:8848]) tried: server is DOWNnow, detailed error message: Optional[Distro protocol is not initialized]
==>
java.lang.RuntimeException: ErrCode:503, ErrMsg:failed to req API:/api//nacos/v1/ns/instance after all servers([nacos-headless.nacos:8848]) tried: server is DOWNnow, detailed error message: Optional[Distro protocol is not initialized]
	at io.seata.core.rpc.netty.NettyServerBootstrap.start(NettyServerBootstrap.java:160) ~[seata-core-1.3.0.jar:na]
	at io.seata.core.rpc.netty.AbstractNettyRemotingServer.init(AbstractNettyRemotingServer.java:55) ~[seata-core-1.3.0.jar:na]
	at io.seata.core.rpc.netty.NettyRemotingServer.init(NettyRemotingServer.java:52) ~[seata-core-1.3.0.jar:na]
	at io.seata.server.Server.main(Server.java:102) ~[classes/:na]
Caused by: com.alibaba.nacos.api.exception.NacosException: failed to req API:/api//nacos/v1/ns/instance after all servers([nacos-headless.nacos:8848]) tried: server is DOWNnow, detailed error message: Optional[Distro protocol is not initialized]
	at com.alibaba.nacos.client.naming.net.NamingProxy.reqAPI(NamingProxy.java:490) ~[nacos-client-1.2.0.jar:na]
	at com.alibaba.nacos.client.naming.net.NamingProxy.reqAPI(NamingProxy.java:395) ~[nacos-client-1.2.0.jar:na]
	at com.alibaba.nacos.client.naming.net.NamingProxy.reqAPI(NamingProxy.java:391) ~[nacos-client-1.2.0.jar:na]
	at com.alibaba.nacos.client.naming.net.NamingProxy.registerService(NamingProxy.java:210) ~[nacos-client-1.2.0.jar:na]
	at com.alibaba.nacos.client.naming.NacosNamingService.registerInstance(NacosNamingService.java:207) ~[nacos-client-1.2.0.jar:na]
	at com.alibaba.nacos.client.naming.NacosNamingService.registerInstance(NacosNamingService.java:182) ~[nacos-client-1.2.0.jar:na]
	at io.seata.discovery.registry.nacos.NacosRegistryServiceImpl.register(NacosRegistryServiceImpl.java:85) ~[seata-discovery-nacos-1.3.0.jar:na]
	at io.seata.core.rpc.netty.NettyServerBootstrap.start(NettyServerBootstrap.java:156) ~[seata-core-1.3.0.jar:na]
	... 3 common frames omitted
<==
```

The nacos.yaml configuration:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nacos-headless
  namespace: nacos
  labels:
    app: nacos-headless
spec:
  type: NodePort
  ports:
    - port: 8848
      name: server
      targetPort: 8848
    - port: 9848
      name: client-rpc
      targetPort: 9848
    - port: 9849
      name: raft-rpc
      targetPort: 9849
    - port: 7848
      name: old-raft-rpc
      targetPort: 7848
  selector:
    app: nacos
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: nacos-cm
  namespace: nacos
data:
  mysql.host: "mysql-write.mysql"
  mysql.port: "3306"
  mysql.user: "root"
  mysql.password: "123456"
  mysql.db.name: "nacos_config"
  mysql.db.param: "characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useSSL=false&serverTimezone=UTC"
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nacos
  namespace: nacos
spec:
  serviceName: nacos-headless
  replicas: 3
  template:
    metadata:
      labels:
        app: nacos
      annotations:
        pod.alpha.kubernetes.io/initialized: "true"
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: "app"
                    operator: In
                    values:
                      - nacos
              topologyKey: "kubernetes.io/hostname"
      containers:
        - name: nacos
          imagePullPolicy: Always
          image: nacos/nacos-server:latest
          resources:
            requests:
              cpu: 500m
              memory: 2Gi
          ports:
            - containerPort: 8848
              name: client
            - containerPort: 9848
              name: client-rpc
            - containerPort: 9849
              name: raft-rpc
            - containerPort: 7848
              name: old-raft-rpc
          env:
            - name: NACOS_REPLICAS
              value: "3"
            - name: MYSQL_SERVICE_HOST
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.host
            - name: MYSQL_SERVICE_DB_NAME
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.db.name
            - name: MYSQL_SERVICE_PORT
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.port
            - name: MYSQL_SERVICE_USER
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.user
            - name: MYSQL_SERVICE_PASSWORD
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.password
            - name: SPRING_DATASOURCE_PLATFORM
              value: "mysql"
            - name: NACOS_SERVER_PORT
              value: "8848"
            - name: NACOS_APPLICATION_PORT
              value: "8848"
            - name: PERFER_HOST_MODE
              value: "hostname"
            - name: NACOS_SERVERS
              value: "nacos-0.nacos-headless.nacos.svc.cluster.local:8848 nacos-1.nacos-headless.nacos.svc.cluster.local:8848 nacos-2.nacos-headless.nacos.svc.cluster.local:8848"
  selector:
    matchLabels:
      app: nacos
```

Troubleshooting

1. Check the NACOS_SERVERS setting

If you created a namespace named nacos for Nacos, check that the namespace sits in the right position in the setting below: the `nacos` right after `nacos-headless` is the namespace name.

```yaml
- name: NACOS_SERVERS
  value: "nacos-0.nacos-headless.nacos.svc.cluster.local:8848 nacos-1.nacos-headless.nacos.svc.cluster.local:8848 nacos-2.nacos-headless.nacos.svc.cluster.local:8848"
```
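One way to rule out a misplaced namespace is to generate the value instead of typing it. The Python sketch below is a hypothetical helper (not part of Nacos or Kubernetes); it assumes the default `cluster.local` cluster domain and the standard StatefulSet pod naming `<statefulset>-<ordinal>`:

```python
def nacos_servers(statefulset: str, service: str, namespace: str,
                  replicas: int, port: int = 8848,
                  cluster_domain: str = "cluster.local") -> str:
    """Build a space-separated NACOS_SERVERS value.

    Each member is <pod>.<service>.<namespace>.svc.<cluster_domain>:<port>,
    so the namespace always comes right after the service name.
    """
    members = [
        f"{statefulset}-{i}.{service}.{namespace}.svc.{cluster_domain}:{port}"
        for i in range(replicas)
    ]
    return " ".join(members)


if __name__ == "__main__":
    # StatefulSet "nacos", Service "nacos-headless", namespace "nacos", 3 replicas
    print(nacos_servers("nacos", "nacos-headless", "nacos", 3))
```

Comparing the printed string with the `NACOS_SERVERS` value in the manifest makes a wrong or misplaced namespace easy to spot.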

2. Check the cluster.conf file

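Use `kubectl exec` to look inside a nacos pod. The configuration lives in /home/nacos/conf; view cluster.conf:

```shell
kubectl exec -it nacos-0 -n nacos -- cat /home/nacos/conf/cluster.conf
```

The output:

```text
10.244.152.173:8848
nacos-0.nacos-headless.nacos.svc.cluster.local:8848
nacos-1.nacos-headless.nacos.svc.cluster.local:8848
nacos-2.nacos-headless.nacos.svc.cluster.local:8848
```

There is an extra line with an IP address. In the manifest above I set PERFER_HOST_MODE=hostname, yet each pod's own IP address was still appended (I won't dig into the exact cause here). One likely factor: the variable is misspelled in these manifests, while the nacos/nacos-server image reads `PREFER_HOST_MODE`, so `PERFER_HOST_MODE` was probably ignored.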
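To check the file without eyeballing it, a small Python sketch can list the entries that are bare IPv4 addresses instead of hostnames. The helper names are hypothetical; you would paste in the `cluster.conf` contents printed by `kubectl exec`. It only recognises IPv4 literals, which matches the pod IP seen here:

```python
import ipaddress


def parse_members(conf_text: str) -> list[str]:
    """Split cluster.conf text into member entries, ignoring # comments and blanks."""
    members = []
    for line in conf_text.splitlines():
        # Drop anything after '#', then allow several space-separated entries per line.
        members.extend(line.split("#", 1)[0].split())
    return members


def ip_members(conf_text: str) -> list[str]:
    """Return entries whose host part is an IPv4 literal instead of a hostname."""
    found = []
    for entry in parse_members(conf_text):
        host = entry.rsplit(":", 1)[0]  # strip the ":8848" port suffix
        try:
            ipaddress.IPv4Address(host)
        except ValueError:
            continue  # not an IPv4 literal, e.g. a pod DNS name
        found.append(entry)
    return found


if __name__ == "__main__":
    sample = (
        "10.244.152.173:8848\n"
        "nacos-0.nacos-headless.nacos.svc.cluster.local:8848\n"
        "nacos-1.nacos-headless.nacos.svc.cluster.local:8848\n"
        "nacos-2.nacos-headless.nacos.svc.cluster.local:8848\n"
    )
    print(ip_members(sample))  # ['10.244.152.173:8848']
```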

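The Nacos console shows the same thing.

There is an extra node whose address is the current pod's IP, and the other nodes are all in the CANDIDATE state, which means leader election among the nodes has not completed. The extra IP address is the place to start.

Solution: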

Mount Nacos's /home/nacos/conf directory, remove the extra IP by hand, and make sure the configuration is identical across all pods.
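A plausible reason the extra IP breaks the election is that, once each pod appends its own IP, the pods no longer agree on the member list. The sketch below compares the lists; the helper names are hypothetical, and you would collect each pod's file yourself, for example by running the `kubectl exec ... cat /home/nacos/conf/cluster.conf` command for every pod:

```python
def member_set(conf_text: str) -> frozenset[str]:
    """Members listed in one cluster.conf, ignoring # comments and blank lines."""
    entries: list[str] = []
    for line in conf_text.splitlines():
        entries.extend(line.split("#", 1)[0].split())
    return frozenset(entries)


def inconsistent_pods(confs: dict[str, str]) -> dict[str, frozenset[str]]:
    """For each pod, return the members it lists that some other pod does not."""
    sets = {pod: member_set(text) for pod, text in confs.items()}
    if not sets:
        return {}
    common = frozenset.intersection(*sets.values())  # members every pod agrees on
    return {pod: s - common for pod, s in sets.items() if s - common}


if __name__ == "__main__":
    hosts = "\n".join(
        f"nacos-{i}.nacos-headless.nacos.svc.cluster.local:8848" for i in range(3)
    )
    confs = {
        "nacos-0": "10.244.152.173:8848\n" + hosts,
        "nacos-1": "10.244.0.99:8848\n" + hosts,  # hypothetical IP for nacos-1
        "nacos-2": hosts,
    }
    print(inconsistent_pods(confs))
```

An empty result means every pod lists exactly the same members, which is the state the shared mount is meant to guarantee.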

Reference configuration, with the relevant places marked by comments:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nacos-headless
  namespace: nacos
  labels:
    app: nacos-headless
spec:
  type: NodePort
  ports:
    - port: 8848
      name: server
      targetPort: 8848
    - port: 9848
      name: client-rpc
      targetPort: 9848
    - port: 9849
      name: raft-rpc
      targetPort: 9849
    - port: 7848
      name: old-raft-rpc
      targetPort: 7848
  selector:
    app: nacos
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: nacos-cm
  namespace: nacos
data:
  mysql.host: "mysql-write.mysql"
  mysql.port: "3306"
  mysql.user: "root"
  mysql.password: "123456"
  mysql.db.name: "nacos_config"
  mysql.db.param: "characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useSSL=false&serverTimezone=UTC"
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nacos
  namespace: nacos
spec:
  serviceName: nacos-headless
  replicas: 3
  template:
    metadata:
      labels:
        app: nacos
      annotations:
        pod.alpha.kubernetes.io/initialized: "true"
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: "app"
                    operator: In
                    values:
                      - nacos
              topologyKey: "kubernetes.io/hostname"
      containers:
        - name: nacos
          imagePullPolicy: Always
          image: nacos/nacos-server:latest
          # volumeMounts:
          #   - name: nacos-config
          #     mountPath: /home/nacos/conf/   # mount path inside the container
          resources:
            requests:
              cpu: 500m
              memory: 2Gi
          ports:
            - containerPort: 8848
              name: client
            - containerPort: 9848
              name: client-rpc
            - containerPort: 9849
              name: raft-rpc
            - containerPort: 7848
              name: old-raft-rpc
          env:
            - name: NACOS_REPLICAS
              value: "3"
            - name: MYSQL_SERVICE_HOST
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.host
            - name: MYSQL_SERVICE_DB_NAME
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.db.name
            - name: MYSQL_SERVICE_PORT
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.port
            - name: MYSQL_SERVICE_USER
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.user
            - name: MYSQL_SERVICE_PASSWORD
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.password
            - name: SPRING_DATASOURCE_PLATFORM
              value: "mysql"
            - name: NACOS_SERVER_PORT
              value: "8848"
            - name: NACOS_APPLICATION_PORT
              value: "8848"
            - name: PERFER_HOST_MODE
              value: "hostname"
            - name: NACOS_SERVERS
              value: "nacos-0.nacos-headless.nacos.svc.cluster.local:8848 nacos-1.nacos-headless.nacos.svc.cluster.local:8848 nacos-2.nacos-headless.nacos.svc.cluster.local:8848"
      # volumes:
      #   - name: nacos-config
      #     nfs:
      #       server: 192.168.11.243          # NFS server IP
      #       path: /data/nfs/rw/nacos/conf   # exported path on the NFS server
      #       readOnly: false
  selector:
    matchLabels:
      app: nacos
```

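The mount here uses NFS; use whatever volume type suits your environment. Once it is set up, remember to uncomment these lines. It is best to put every file from conf on the mount: copy them from a running pod onto the share first, because the mounted volume hides the image's original files in that directory.

The two files that matter are application.properties and cluster.conf. The full contents of conf are: `1.4.0-ipv6_support-update.sql`, `application.properties`, `cluster.conf`, `nacos-logback.xml`, `schema.sql`.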

Edit cluster.conf so that it contains only the hostnames:

```text
nacos-0.nacos-headless.nacos.svc.cluster.local:8848
nacos-1.nacos-headless.nacos.svc.cluster.local:8848
nacos-2.nacos-headless.nacos.svc.cluster.local:8848
```
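If you prefer to produce the cleaned file from the current one rather than retyping it, a minimal Python sketch (hypothetical helper names) can drop IPv4 entries and comments and keep each hostname member once, one per line:

```python
import ipaddress


def is_ipv4_entry(entry: str) -> bool:
    """True if the host part of 'host:port' is an IPv4 literal."""
    try:
        ipaddress.IPv4Address(entry.rsplit(":", 1)[0])
    except ValueError:
        return False
    return True


def clean_cluster_conf(conf_text: str) -> str:
    """Rebuild cluster.conf with only hostname members, one per line.

    Comment lines and IPv4 entries are dropped; duplicates are kept once,
    in their original order.
    """
    kept: list[str] = []
    for line in conf_text.splitlines():
        for entry in line.split("#", 1)[0].split():
            if not is_ipv4_entry(entry) and entry not in kept:
                kept.append(entry)
    return "\n".join(kept) + "\n"


if __name__ == "__main__":
    current = (
        "10.244.152.173:8848 "
        "nacos-0.nacos-headless.nacos.svc.cluster.local:8848 "
        "nacos-1.nacos-headless.nacos.svc.cluster.local:8848 "
        "nacos-2.nacos-headless.nacos.svc.cluster.local:8848"
    )
    print(clean_cluster_conf(current), end="")
```

The result would be written to the shared copy on the NFS export so that every pod reads the same three entries.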

Editing cluster.conf alone was not enough, though. After several days of searching and testing, I found the fix in GitHub issue #10432.

Edit application.properties and append the following property:

```properties
nacos.inetutils.prefer-hostname-over-ip=true
```

Then restart the Nacos nodes.
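To script the application.properties change on the shared copy instead of editing by hand, here is a minimal Python sketch. The helper is hypothetical and only understands simple `key=value` lines (Java properties also allow `key: value` and continuation lines, which it does not handle). It replaces an existing uncommented assignment or appends one, so running it twice is harmless:

```python
def set_property(props_text: str, key: str, value: str) -> str:
    """Set key=value in simple Java .properties text, replacing or appending."""
    lines = props_text.splitlines()
    new_line = f"{key}={value}"
    for i, line in enumerate(lines):
        stripped = line.strip()
        if stripped.startswith(("#", "!")):
            continue  # comment lines are left untouched
        if stripped.split("=", 1)[0].strip() == key:
            lines[i] = new_line  # overwrite the existing assignment
            return "\n".join(lines) + "\n"
    lines.append(new_line)  # key not present yet
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    before = "server.port=8848\n"
    print(set_property(before, "nacos.inetutils.prefer-hostname-over-ip", "true"), end="")
    # server.port=8848
    # nacos.inetutils.prefer-hostname-over-ip=true
```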

Verification

Enter the nacos pod again and view cluster.conf:

```text
#2024-03-23T16:39:57.507
nacos-0.nacos-headless.nacos.svc.cluster.local:8848
nacos-1.nacos-headless.nacos.svc.cluster.local:8848
nacos-2.nacos-headless.nacos.svc.cluster.local:8848
```

The current pod's IP is no longer appended.

Check the Nacos console again.

Nacos now shows the leader node information correctly:

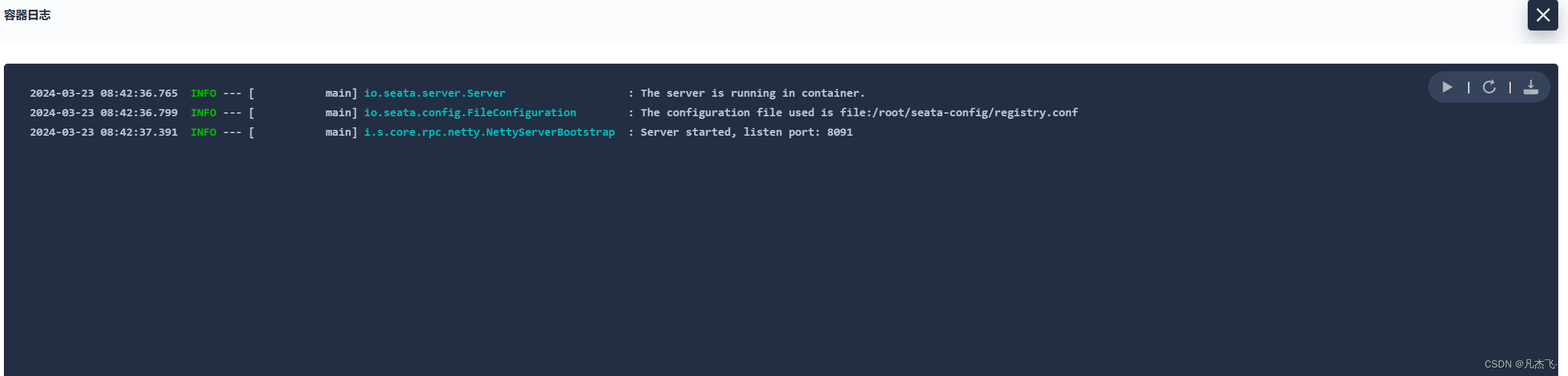

Rebuild the seata-server node that was failing earlier.

It no longer reports the error and registers with Nacos normally. Problem solved.
