Kubernetes Series, Part 2: Cluster Setup and Add-on Installation


Prerequisites

MobaXterm is a convenient terminal tool for this.

1. Three virtual machines (set the IPs to match your own network) (master/node)

| Node | IP |
|---|---|
| k8s-master | 192.168.200.150 |
| k8s-node1 | 192.168.200.151 |
| k8s-node2 | 192.168.200.152 |

2. Disable the firewall (master/node)

systemctl stop firewalld

systemctl disable firewalld

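Check the firewall status: systemctl status firewalld

3. Disable SELinux (master/node)

setenforce 0 # temporary, until the next reboot

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config # permanent

4. Disable swap (master/node)

swapoff -a # temporary; swap is disabled mainly for performance, and kubeadm's preflight check also rejects a node with swap on

free # shows whether swap is off (the Swap row should read 0)

sed -ri 's/.*swap.*/#&/' /etc/fstab # permanent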
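The sed expression above prefixes every fstab line that mentions swap with `#`, including lines that are already commented, so running it twice stacks up `#` characters. A minimal sketch of a rerunnable variant follows; the helper name `comment_out_swap` and its file argument are illustrative and not part of the original steps.

```shell
# Comment out active swap entries in an fstab-style file.
# Lines that already start with '#' are skipped, so reruns are harmless.
# comment_out_swap is a hypothetical helper name; pass the file path explicitly.
comment_out_swap() {
    sed -ri '/^[[:space:]]*#/!s/.*[[:space:]]swap[[:space:]].*/#&/' "$1"
}

# Usage on a real node (as root):
#   comment_out_swap /etc/fstab
```

Matching ` swap ` as a whole field, rather than any occurrence of the word, also avoids touching unrelated lines whose device path merely contains "swap".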

5. Map hostnames to IPs (master/node)

$ vim /etc/hosts

# Add the following entries:

192.168.200.150 k8s-master

192.168.200.151 k8s-node1

192.168.200.152 k8s-node2

# Save and exit
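If you would rather script this than edit the file by hand, the same entries can be appended without creating duplicates. This is only a sketch: `add_host_entry` is a made-up helper name, and the third argument exists purely so the function can be tried on a scratch file first.

```shell
# Append "IP NAME" to a hosts-style file unless NAME is already mapped there.
# add_host_entry is a hypothetical helper; the file defaults to /etc/hosts.
add_host_entry() (
    ip=$1; name=$2; hosts_file=${3:-/etc/hosts}
    # Match NAME only as a whole field, so k8s-node1 does not match k8s-node10.
    if ! grep -Eq "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
)

# Usage on each machine (as root):
#   add_host_entry 192.168.200.150 k8s-master
#   add_host_entry 192.168.200.151 k8s-node1
#   add_host_entry 192.168.200.152 k8s-node2
```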

6. Set the hostname (master/node)

# k8s-master

[root@localhost ~]# hostname

localhost.localdomain

[root@localhost ~]# hostname k8s-master ## temporary, lost on reboot

[root@localhost ~]# hostnamectl set-hostname k8s-master ## permanent, persists across reboots

# k8s-node1

[root@localhost ~]# hostname

localhost.localdomain

[root@localhost ~]# hostname k8s-node1 ## temporary, lost on reboot

[root@localhost ~]# hostnamectl set-hostname k8s-node1 ## permanent, persists across reboots

Repeat the same on the third machine with k8s-node2.

7. Bridge settings (master/node)

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sysctl --system
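The `net.bridge.*` keys only exist while the `br_netfilter` kernel module is loaded, so `sysctl --system` can silently skip them on a fresh machine. The sketch below checks that a key actually holds the expected value. The helper name `sysctl_is` and its optional root argument are assumptions made for illustration, and the `modprobe` call in the usage comment is an extra step that the original list does not include.

```shell
# Succeed when a sysctl key currently holds the expected value.
# The key is read from <root>/<key with dots replaced by slashes>.
# root defaults to /proc/sys; it is a parameter only so the check can be
# exercised against a scratch directory.
sysctl_is() (
    key=$1; expected=$2; root=${3:-/proc/sys}
    path="$root/$(printf '%s' "$key" | tr . /)"
    [ -r "$path" ] && [ "$(cat "$path")" = "$expected" ]
)

# Usage on a real node (as root):
#   modprobe br_netfilter        # assumption: load the module first
#   sysctl --system
#   sysctl_is net.bridge.bridge-nf-call-iptables 1 && echo "bridge sysctl ok"
```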

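It is best to run every step above; skipping any of them tends to produce a pile of errors later.

Install Docker (master/node)

Mind the version compatibility between Docker and Kubernetes. This guide installs Kubernetes 1.18.0 together with docker-ce-19.03.9-3.el7.

If Docker is already installed, there is no need to install it again.

1. Install wget

$ yum -y install wget

2. Add the Docker yum repository

$ wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

3. Install Docker

$ yum -y install docker-ce-19.03.9-3.el7 docker-ce-cli-19.03.9-3.el7

4. Enable Docker at boot

$ systemctl enable docker

5. Start Docker

$ systemctl start docker

6. Edit the Docker configuration file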

mkdir -p /etc/docker/

cat > /etc/docker/daemon.json << EOF
{
  "registry-mirrors": ["https://gqs7xcfd.mirror.aliyuncs.com","https://hub-mirror.c.163.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2"
}
EOF

7. Restart Docker

$ systemctl restart docker
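The `exec-opts` entry in daemon.json switches Docker to the systemd cgroup driver. A quick way to confirm what the file asks for is to pull the value back out of it, as in this sketch. `daemon_cgroup_driver` is a hypothetical helper name, and the file path is a parameter so it can be tried on a copy.

```shell
# Print the cgroup driver requested in a Docker daemon.json, if any.
# daemon_cgroup_driver is a made-up helper; the path defaults to
# /etc/docker/daemon.json.
daemon_cgroup_driver() (
    config=${1:-/etc/docker/daemon.json}
    sed -n 's/.*native\.cgroupdriver=\([a-z]*\).*/\1/p' "$config" | head -n 1
)

# On a running host, the driver Docker is actually using can be read with:
#   docker info --format '{{.CgroupDriver}}'
```

Comparing the two values after the restart shows whether the new configuration was picked up.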

Install Kubernetes

1. Add the Aliyun yum repository for Kubernetes (master/node)

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[k8s]
name=k8s
enabled=1
gpgcheck=0
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
EOF

2. Install kubeadm, kubelet, and kubectl (master/node)

Pick whichever version you want to install; I installed 1.18.0.

$ yum install -y kubelet-1.18.0 kubectl-1.18.0 kubeadm-1.18.0

3. Enable kubelet at boot

$ systemctl enable kubelet

Initialize the master node

Deploy Kubernetes (master). The worker nodes do not need to run kubeadm init.

kubeadm init \
  --apiserver-advertise-address=192.168.200.150 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.18.0 \
  --service-cidr=10.1.0.0/16 \
  --pod-network-cidr=10.244.0.0/16

kubeadm runs preflight checks during initialization, so you may see the following error:

[preflight] Running pre-flight checks
error execution phase preflight: [preflight] Some fatal errors occurred:
	[ERROR Swap]: running with swap on is not supported. Please disable swap
[preflight] If you know what you are doing, you can make a check non-fatal with --ignore-preflight-errors=...
To see the stack trace of this error execute with --v=5 or higher

A reboot fixes this: the swap change made earlier had not taken effect.
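Before running kubeadm init again, it can save a round trip to confirm that swap really is off. On Linux, `/proc/swaps` lists one line per active swap area under a header line, so an empty list means swap is disabled. The helper below is a sketch; `swap_is_off` is a made-up name and the file argument is there only so the check can be tried against a sample file.

```shell
# Succeed when no swap area is active.
# /proc/swaps contains one header line followed by one line per active area.
swap_is_off() (
    swaps_file=${1:-/proc/swaps}
    [ "$(tail -n +2 "$swaps_file" | grep -c .)" -eq 0 ]
)

# Usage on a node:
#   swap_is_off && echo "swap is off" || echo "swap is still on"
```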

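When initialization completes, kubeadm prints a success message followed by a kubeadm join command. If it reports errors instead, troubleshoot them before continuing.

Configure kubectl

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

List the nodes

kubectl get nodes

NAME         STATUS     ROLES    AGE     VERSION
k8s-master   NotReady   master   6m42s   v1.18.0

Join the worker nodes

Run the join command on each of the two worker nodes. Use the token and hash printed by your own kubeadm init; the values below are from my cluster.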

kubeadm join 192.168.200.150:6443 --token 6mjjzr.imfudxt8568utrvv \
  --discovery-token-ca-cert-hash sha256:c89fd5ec80eac07429aeb450d475eec53d7d88e51c489c817fd5b7d8f3ebe3ce
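A join command copied by hand is easy to truncate. Bootstrap tokens have the form of six lowercase alphanumerics, a dot, and sixteen more, and the CA hash is `sha256:` followed by 64 hex digits, so both can be sanity-checked before pasting. The two helper names below are invented for this sketch.

```shell
# Succeed when the argument looks like a kubeadm bootstrap token
# (6 characters, a dot, 16 characters, all lowercase letters or digits).
is_bootstrap_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Succeed when the argument looks like a discovery CA cert hash.
is_ca_cert_hash() {
    printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# If the token has expired (the default lifetime is 24 hours), a fresh join
# command can be printed on the master with:
#   kubeadm token create --print-join-command
```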

List the nodes

kubectl get nodes

NAME         STATUS     ROLES    AGE     VERSION
k8s-master   NotReady   master   9m54s   v1.18.0
k8s-node1    NotReady   <none>   42s     v1.18.0
k8s-node2    NotReady   <none>   46s     v1.18.0
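All three nodes report NotReady at this point because no pod network add-on is installed yet. To watch for the moment they flip to Ready without reading the table each time, the STATUS column can be counted, as in this sketch. `count_not_ready` is a hypothetical helper that reads `kubectl get nodes --no-headers` output on standard input.

```shell
# Print how many nodes have a STATUS other than exactly "Ready".
# Input: the output of `kubectl get nodes --no-headers` on stdin.
# Note: a cordoned node ("Ready,SchedulingDisabled") is counted as not ready.
count_not_ready() {
    awk '$2 != "Ready" { n++ } END { print n + 0 }'
}

# Usage on the master:
#   kubectl get nodes --no-headers | count_not_ready
```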

Install add-ons

1. Install flannel

Download the manifest from the official repository

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
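The network range in the flannel manifest has to agree with the `--pod-network-cidr` passed to kubeadm init (10.244.0.0/16 here, which as far as I know is also the manifest's default). The sketch below reads the `"Network"` value back out of the downloaded file; `flannel_network` is a made-up helper name, and it assumes the value sits on a single line as `"Network": "<cidr>"`.

```shell
# Print the pod network CIDR configured in a flannel manifest.
# Assumes a line of the form:  "Network": "10.244.0.0/16",
flannel_network() (
    manifest=${1:-kube-flannel.yml}
    sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$manifest" | head -n 1
)

# Usage in the directory holding the download:
#   [ "$(flannel_network)" = "10.244.0.0/16" ] && echo "CIDR matches kubeadm init"
```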

Then apply the manifest

kubectl apply -f kube-flannel.yml

List all pods

kubectl get pods -A

List the pods in one namespace

kubectl -n <namespace> get pods -o wide

2. Deploy busybox to test networking across the cluster

vi busybox.yaml

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: busybox
spec:
  replicas: 2
  selector:
    matchLabels:
      name: busybox
  template:
    metadata:
      labels:
        name: busybox
    spec:
      containers:
      - name: busybox
        image: busybox
        imagePullPolicy: IfNotPresent
        args:
        - /bin/sh
        - -c
        - sleep 1; touch /tmp/healthy; sleep 30000
        readinessProbe:
          exec:
            command:
            - cat
            - /tmp/healthy
          initialDelaySeconds: 1

kubectl apply -f busybox.yaml

List all pods

[root@k8s-master ~]# kubectl get pods -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

default busybox-7c84546778-69hq7 1/1 Running 0 90s 10.244.2.2 k8s-node1 <none> <none>

default busybox-7c84546778-plzwg 1/1 Running 0 90s 10.244.1.2 k8s-node2 <none> <none>

Open a shell in one pod and check that it can reach the other pod and each node

kubectl exec -it busybox-7c84546778-69hq7 -- /bin/sh

/ # ping -c 2 10.244.1.2

PING 10.244.1.2 (10.244.1.2): 56 data bytes

64 bytes from 10.244.1.2: seq=0 ttl=62 time=0.504 ms

64 bytes from 10.244.1.2: seq=1 ttl=62 time=1.611 ms

--- 10.244.1.2 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.504/1.057/1.611 ms

/ # ping -c 2 192.168.200.151

PING 192.168.200.151 (192.168.200.151): 56 data bytes

64 bytes from 192.168.200.151: seq=0 ttl=64 time=0.070 ms

64 bytes from 192.168.200.151: seq=1 ttl=64 time=0.074 ms

--- 192.168.200.151 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.070/0.072/0.074 ms

/ # ping -c 2 192.168.200.152

PING 192.168.200.152 (192.168.200.152): 56 data bytes

64 bytes from 192.168.200.152: seq=0 ttl=63 time=0.273 ms

64 bytes from 192.168.200.152: seq=1 ttl=63 time=0.203 ms

--- 192.168.200.152 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.203/0.238/0.273 ms

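Deploy the dashboard

Download the dashboard manifest

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml

# Edit the dashboard manifest. Indentation matters: align new lines with the existing ones.

# vim recommended.yaml

# Add the NodePort settings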

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort  # add this line
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30000  # add this line
  selector:
    k8s-app: kubernetes-dashboard

kubectl apply -f recommended.yaml

[root@k8s-master ~]# kubectl get pod -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-6b4884c9d5-vmfzc 1/1 Running 0 2m9s

kubernetes-dashboard-7f99b75bf4-2d7sg 1/1 Running 0 2m9s

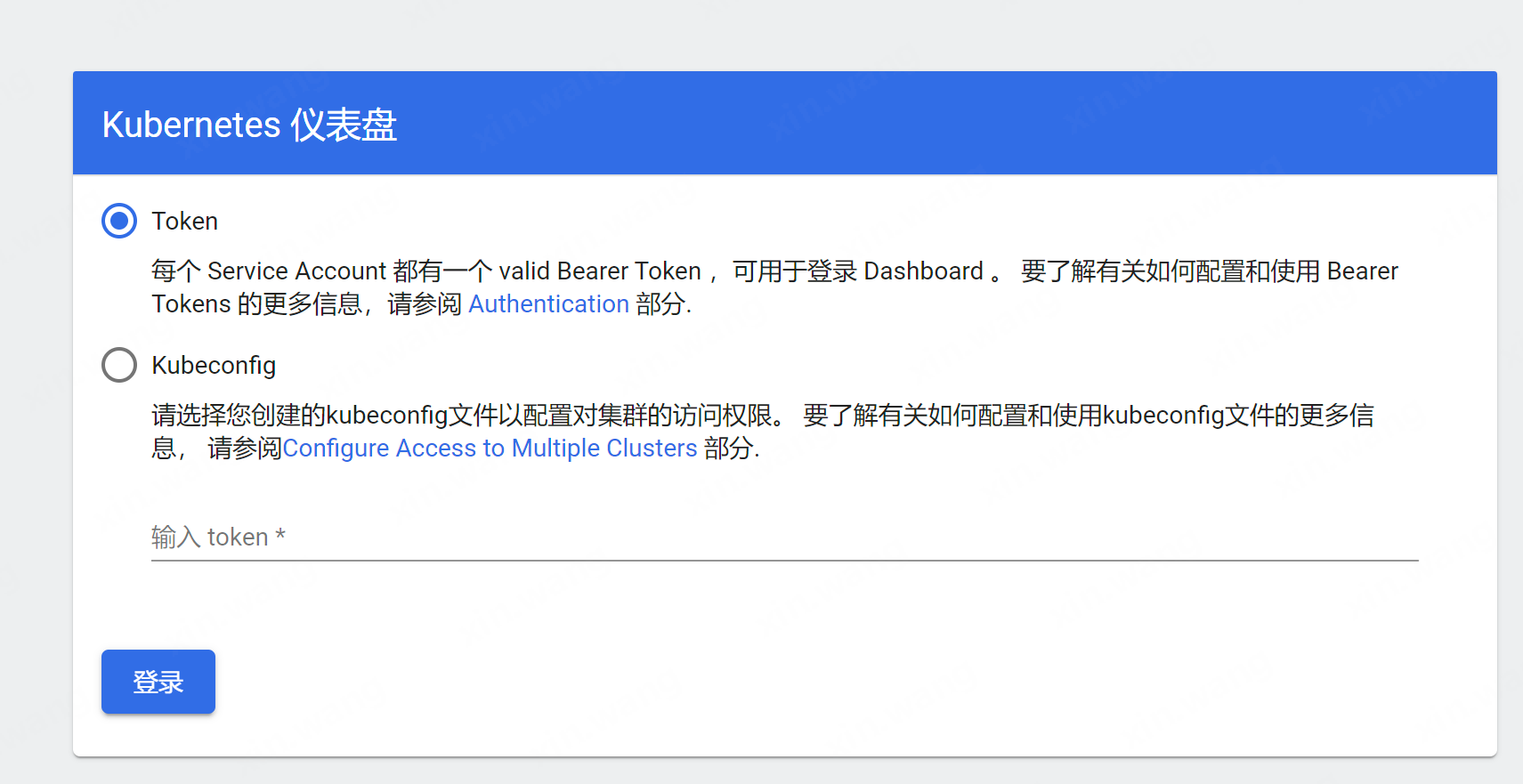

Access URL: https://192.168.200.150:30000/#/login
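The value 30000 works because it sits at the bottom of the API server's default NodePort range, 30000 to 32767; a port outside that range is rejected when the Service is applied. If you pick a different port, a quick check like this sketch can catch the mistake early. `valid_node_port` is a hypothetical helper, and it assumes the cluster still uses the default range.

```shell
# Succeed when the argument is an integer inside the default NodePort range.
# Assumes the API server's --service-node-port-range has not been changed.
valid_node_port() {
    case $1 in
        ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
    esac
    [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Usage:
#   valid_node_port 30000 && echo "ok"
```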

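Create a user

As the screenshot above shows, the browser lands on the login page, so first create a user:

1. Create a service account

First create a service account named admin-user in the kube-system namespace:

admin-user.yaml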

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kube-system

Run kubectl create:

kubectl create -f admin-user.yaml

2. Bind the role

A cluster created by kubeadm already has the cluster-admin ClusterRole, so we only need to bind the account to it:

admin-user-role-binding.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kube-system

Run kubectl create:

kubectl create -f admin-user-role-binding.yaml

3. Get the token

Now find the token of the newly created user so it can be used to log in to the dashboard:

kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

The output looks like:

Name: admin-user-token-qrj82

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name=admin-user

kubernetes.io/service-account.uid=6cd60673-4d13-11e8-a548-00155d000529

Type: kubernetes.io/service-account-token

Data

====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLXFyajgyIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI2Y2Q2MDY3My00ZDEzLTExZTgtYTU0OC0wMDE1NWQwMDA1MjkiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.C5mjsa2uqJwjscWQ9x4mEsWALUTJu3OSfLYecqpS1niYXxp328mgx0t-QY8A7GQvAr5fWoIhhC_NOHkSkn2ubn0U22VGh2msU6zAbz9sZZ7BMXG4DLMq3AaXTXY8LzS3PQyEOCaLieyEDe-tuTZz4pbqoZQJ6V6zaKJtE9u6-zMBC2_iFujBwhBViaAP9KBbE5WfREEc0SQR9siN8W8gLSc8ZL4snndv527Pe9SxojpDGw6qP_8R-i51bP2nZGlpPadEPXj-lQqz4g5pgGziQqnsInSMpctJmHbfAh7s9lIMoBFW7GVE8AQNSoLHuuevbLArJ7sHriQtDB76_j4fmA

ca.crt: 1025 bytes

namespace: 11 bytes

Then copy the token into the Token field on the login page and sign in; the dashboard overview appears.
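Selecting the long token out of the describe output by hand is error-prone. Since the value sits on the line that starts with `token:`, it can be filtered out directly. This is a small sketch; `extract_token` is an invented helper name that reads the describe output on standard input.

```shell
# Print only the token value from `kubectl describe secret` output on stdin.
extract_token() {
    awk '$1 == "token:" { print $2 }'
}

# Usage on the master:
#   kubectl -n kube-system describe secret \
#     $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}') \
#     | extract_token
```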

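Deploy metrics-server

Download the metrics-server manifest (the download is flaky and sometimes fails; retry if it does)

wget https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

Change the image address

Point the image in the manifest at a mirror reachable from mainland China; it is around line 140 of the manifest. The original setting is:

image: k8s.gcr.io/metrics-server/metrics-server:v0.6.2

The modified setting is: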

image: registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server:v0.6.2
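Since the line number shifts between releases, rewriting the registry prefix with sed is less fragile than hunting for line 140. The sketch below does that in place and keeps the image name and tag untouched. `use_mirror_image` is a hypothetical helper, and it assumes the manifest references the image under `k8s.gcr.io/metrics-server/` as shown above.

```shell
# Rewrite the metrics-server image registry in a manifest to the Aliyun mirror.
# Only the registry prefix changes; the image name and tag are preserved.
use_mirror_image() (
    manifest=${1:-components.yaml}
    sed -i 's#image: k8s\.gcr\.io/metrics-server/#image: registry.cn-hangzhou.aliyuncs.com/google_containers/#' "$manifest"
)

# Usage in the directory holding the download:
#   use_mirror_image components.yaml
#   grep 'image:' components.yaml
```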

Deploy metrics-server

kubectl create -f components.yaml

Check how metrics-server is running. The readiness probe fails: Readiness probe failed: HTTP probe failed with statuscode: 500

[root@centos05 deployment]# kubectl get pods -n kube-system | grep metrics

kube-system metrics-server-7f6b85b597-j2p5h 0/1 Running 0 2m23s

[root@centos05 deployment]# kubectl describe pod metrics-server-7f6b85b597-j2p5h -n kube-system

Around line 139, append the argument --kubelet-insecure-tls. After the change, the section reads:

spec:
  containers:
  - args:
    - --cert-dir=/tmp
    - --secure-port=4443
    - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
    - --kubelet-use-node-status-port
    - --metric-resolution=15s
    - --kubelet-insecure-tls

Apply the manifest again:

kubectl apply -f components.yaml
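The `--kubelet-insecure-tls` flag tells metrics-server to skip verification of the kubelets' serving certificates, which is acceptable on a lab cluster like this one but worth a second thought elsewhere. If the probe still fails after re-applying, a first thing to rule out is that the edit never made it into the file. The check below is a sketch with an invented helper name.

```shell
# Succeed when the manifest lists --kubelet-insecure-tls as a container arg.
has_insecure_tls_flag() (
    manifest=${1:-components.yaml}
    grep -qF -- '- --kubelet-insecure-tls' "$manifest"
)

# Usage:
#   has_insecure_tls_flag components.yaml || echo "flag missing from manifest"
```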

kubectl top now succeeds:

[root@k8s-master kubernetes]# kubectl top pod

NAME CPU(cores) MEMORY(bytes)

busybox-7c84546778-69hq7 1m 0Mi

busybox-7c84546778-plzwg 1m 0Mi

nginx-deployment 0m 1Mi

nginx-deployment-788b8d7b98-528cj 0m 1Mi

nginx-deployment-788b8d7b98-8j6q4 0m 1Mi

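Back in the dashboard, monitoring data now shows up. If the token has expired, run the token command above again.

That's all for this part. More Kubernetes in later posts.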
