Deploying a Highly Available K8s Cluster on Ubuntu 20.04

Installing KubeSphere on VMs

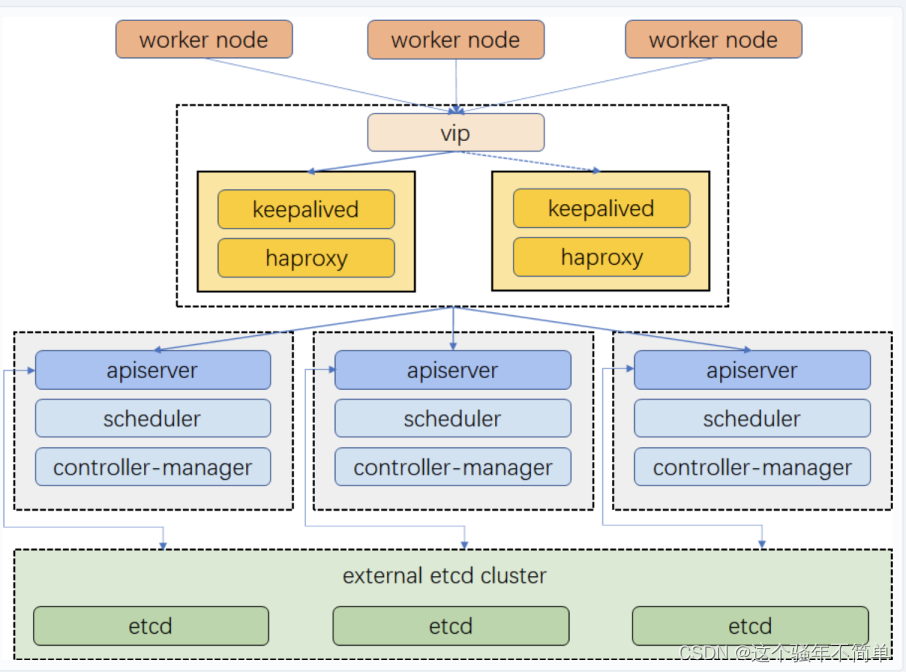

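For a production environment, the cluster needs to be highly available. If the key components (for example kube-apiserver, kube-scheduler, and kube-controller-manager) all run on the same master node, Kubernetes and KubeSphere become unavailable as soon as that node fails. The fix is to run multiple master nodes behind a load balancer. You can use any cloud load balancer or any hardware load balancer (for example F5). Keepalived combined with HAProxy or NGINX is another way to build a highly available cluster.

This tutorial shows how to use Keepalived + HAProxy to load-balance kube-apiserver and build a highly available Kubernetes cluster.

Tip

This tutorial deploys the highly available k8s cluster by installing KubeSphere. If you do not want to manage the cluster with KubeSphere, see the author's other article.

For data persistence in a production environment, we recommend preparing persistent storage. For development and test environments, you can use the built-in OpenEBS LocalPV directly.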

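Deployment architecture

Create the hosts

This example creates 8 virtual machines with the default minimal installation. 2 cores, 4 GB of memory, and 40 GB of disk per machine is enough.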

| Host IP | Hostname | Role |
|---|---|---|
| 10.20.30.1 | master1 | master, etcd |
| 10.20.30.2 | master2 | master, etcd |
| 10.20.30.3 | master3 | master, etcd |
| 10.20.30.4 | node1 | worker |
| 10.20.30.5 | node2 | worker |
| 10.20.30.6 | node3 | worker |
| 10.20.30.7 | vip | virtual IP (not an actual host) |
| 10.20.30.8 | lb-0 | lb (Keepalived + HAProxy) |
| 10.20.30.9 | lb-1 | lb (Keepalived + HAProxy) |

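Note

vip is a virtual IP, not a host, so only 8 virtual machines need to be created.

Select a resource pool in which VMs can be created, right-click it, and choose New Virtual Machine (there are several entry points for creating a VM; use whichever you prefer).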

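Deploy Keepalived and HAProxy

A production environment needs a dedicated load balancer, for example an on-premises solution such as NGINX, F5, or Keepalived + HAProxy. If you are only building a development or test environment, no load balancer is needed and you can skip this section.

Install with apt

Install Keepalived, HAProxy, and psmisc on lb-0 and lb-1, that is, on the servers with IPs 10.20.30.8 and 10.20.30.9. psmisc provides the killall command that the Keepalived health check uses later.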

apt install keepalived haproxy psmisc -y

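Configure HAProxy

Configure HAProxy with the following parameters on the servers at 10.20.30.8 and 10.20.30.9 (the two lb machines use identical configuration; pay attention to the backend server addresses).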

# HAProxy Configure /etc/haproxy/haproxy.cfg

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

# turn on stats unix socket

stats socket /var/lib/haproxy/stats

#---------------------------------------------------------------------

# common defaults that all the 'listen' and 'backend' sections will

# use if not designated in their block

#---------------------------------------------------------------------

defaults

log global

option httplog

option dontlognull

timeout connect 5000

timeout client 5000

timeout server 5000

#---------------------------------------------------------------------

# main frontend which proxys to the backends

#---------------------------------------------------------------------

frontend kube-apiserver

bind *:6443

mode tcp

option tcplog

default_backend kube-apiserver

#---------------------------------------------------------------------

# round robin balancing between the various backends

#---------------------------------------------------------------------

backend kube-apiserver

mode tcp

option tcplog

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server kube-apiserver-1 10.20.30.1:6443 check

server kube-apiserver-2 10.20.30.2:6443 check

server kube-apiserver-3 10.20.30.3:6443 check
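If your control-plane addresses differ from this example, the server lines of the backend section are the only part that has to change. As a minimal sketch, the lines can be generated from a list of IPs. gen_backend_servers is a helper name made up for this illustration, and port 6443 is taken from the configuration above.

```shell
# Print one HAProxy "server" line per control-plane IP.
# Usage: gen_backend_servers IP [IP...]
# (gen_backend_servers is a hypothetical helper, not part of HAProxy.)
gen_backend_servers() {
  i=1
  for ip in "$@"; do
    printf '    server kube-apiserver-%d %s:6443 check\n' "$i" "$ip"
    i=$((i + 1))
  done
}

# The three masters used in this tutorial.
gen_backend_servers 10.20.30.1 10.20.30.2 10.20.30.3
```

Paste the printed lines under backend kube-apiserver in /etc/haproxy/haproxy.cfg on both lb machines.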

Check the configuration for syntax errors before starting:

haproxy -f /etc/haproxy/haproxy.cfg -c

Start HAProxy and enable it at boot:

systemctl restart haproxy && systemctl enable haproxy

Stop HAProxy:

systemctl stop haproxy

Configure Keepalived

Primary HAProxy node lb-0, 10.20.30.8 (/etc/keepalived/keepalived.conf)

global_defs {

notification_email {

}

smtp_connect_timeout 30 # connection timeout

router_id LVS_DEVEL01 # an identifier for this server

vrrp_skip_check_adv_addr

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_script chk_haproxy {

script "killall -0 haproxy"

interval 2

weight 20

}

vrrp_instance haproxy-vip {

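state MASTER # the primary server is MASTER

priority 100 # the primary must have a higher priority than the backup

interface ens192 # network interface the instance binds to

virtual_router_id 60 # defines the VRRP group (group 60); both nodes must use the same ID

advert_int 1 # send an advertisement every second to check that the peer is alive

authentication {

auth_type PASS # authentication type

auth_pass 1111 # authentication password, shared by both nodes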

}

unicast_src_ip 10.20.30.8 # address of this machine

unicast_peer {

10.20.30.9 # address of the other peer

}

virtual_ipaddress {

# VIP address

10.20.30.7/24

}

track_script {

chk_haproxy

}

}

Backup HAProxy node lb-1, 10.20.30.9 (/etc/keepalived/keepalived.conf)

global_defs {

notification_email {

}

router_id LVS_DEVEL02 # an identifier for this server

vrrp_skip_check_adv_addr

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_script chk_haproxy {

script "killall -0 haproxy"

interval 2

weight 20

}

vrrp_instance haproxy-vip {

state BACKUP # the backup server is BACKUP

priority 90 # must be lower than the primary's priority (100)

interface ens192 # network interface the instance binds to

virtual_router_id 60

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

unicast_src_ip 10.20.30.9 # address of this machine

unicast_peer {

10.20.30.8 # address of the other peer

}

virtual_ipaddress {

# include the /24 prefix length

10.20.30.7/24

}

track_script {

chk_haproxy

}

}
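It helps to see why stopping HAProxy on lb-0 moves the VIP. With a positive weight, Keepalived adds the weight to a node's priority for as long as the tracked script (here killall -0 haproxy) succeeds. The arithmetic for the two configurations above can be sketched as follows. effective_priority is a helper name made up for this illustration, not a Keepalived command.

```shell
# effective_priority BASE WEIGHT SCRIPT_OK
# SCRIPT_OK is 1 while the tracked script succeeds, 0 otherwise.
# (Hypothetical helper that only mirrors the positive-weight rule.)
effective_priority() {
  if [ "$3" -eq 1 ]; then
    echo $(( $1 + $2 ))
  else
    echo "$1"
  fi
}

echo "lb-0, HAProxy up:   $(effective_priority 100 20 1)"   # 120
echo "lb-1, HAProxy up:   $(effective_priority 90 20 1)"    # 110
echo "lb-0, HAProxy down: $(effective_priority 100 20 0)"   # 100
```

In normal operation lb-0 wins with 120 against 110. When HAProxy stops on lb-0 its priority falls back to 100, which is below lb-1's 110, so the VIP moves to lb-1. This only works because the gap between the two base priorities (10) is smaller than the weight (20).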

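Start Keepalived and enable it at boot:

systemctl restart keepalived && systemctl enable keepalived

Stop Keepalived:

systemctl stop keepalived

Start Keepalived:

systemctl start keepalived

Verify availability

Use ip a s to check which lb node currently holds the VIP:

ip a s

Stop HAProxy on the node that holds the VIP:

systemctl stop haproxy

Run ip a s again on each lb node to check whether the VIP has moved:

ip a s

Alternatively, check the Keepalived status: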

systemctl status -l keepalived
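Reading the full ip a s output by eye gets tedious when you repeat the failover test. A small filter, sketched here under the assumption that you feed it the one-line-per-address output of ip -o -4 addr show, reports whether a given VIP is bound on the local machine. has_vip is a hypothetical helper name.

```shell
# has_vip VIP
# Reads `ip -o -4 addr show` output on stdin and succeeds if VIP is
# assigned to any interface. (has_vip is a hypothetical helper.)
has_vip() {
  awk -v vip="$1" '
    $3 == "inet" { split($4, a, "/"); if (a[1] == vip) found = 1 }
    END { exit (found ? 0 : 1) }
  '
}

# Example on an lb node:
#   ip -o -4 addr show | has_vip 10.20.30.7 && echo "VIP is bound here"
```

Run the example line on lb-0 and lb-1 before and after stopping HAProxy; exactly one of the two nodes should report the VIP each time.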

Install the dependency packages

apt install socat conntrack ipvsadm ipset chrony containerd nfs-kernel-server rpcbind nfs-common -y

Generate the containerd configuration file

containerd config default | sudo tee /etc/containerd/config.toml >/dev/null 2>&1

root@master1:~# ls /etc/containerd/

config.toml

If the command reports that the directory does not exist, create the directory and run the command again:

root@k8s-master1 :~# mkdir -p /etc/containerd/ && containerd config default | sudo tee /etc/containerd/config.toml >/dev/null 2>&1

root@k8s-master1 :~# ls /etc/containerd/

config.toml

Modify the containerd configuration file

root@k8s-master1 :~# sed -i "s#SystemdCgroup\ \=\ false#SystemdCgroup\ \=\ true#g" /etc/containerd/config.toml

root@k8s-master1 :~# cat /etc/containerd/config.toml | grep SystemdCgroup
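Because the same edit has to be repeated on every node, it can be convenient to wrap the substitution and the check into one step that fails loudly when the setting did not change. The following is only a sketch: enable_systemd_cgroup is a hypothetical helper name, sed -i assumes GNU sed as shipped with Ubuntu, and the demo runs against a scratch file rather than the real config.toml.

```shell
# enable_systemd_cgroup FILE
# Switch SystemdCgroup to true in FILE, then verify that the line is present.
# (Hypothetical helper; relies on GNU sed's -i option.)
enable_systemd_cgroup() {
  sed -i 's#SystemdCgroup = false#SystemdCgroup = true#g' "$1"
  grep -q 'SystemdCgroup = true' "$1"
}

# Try it on a scratch file first (the sample line is illustrative only).
tmp="$(mktemp)"
printf '            SystemdCgroup = false\n' > "$tmp"
enable_systemd_cgroup "$tmp" && grep SystemdCgroup "$tmp"
rm -f "$tmp"
```

On a real node you would call enable_systemd_cgroup /etc/containerd/config.toml and restart containerd only if it returns success.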

# Point the image registry at an Alibaba Cloud mirror (registry.cn-hangzhou.aliyuncs.com/chenby)

root@k8s-master1 :~# sed -i "s#registry.k8s.io#registry.cn-hangzhou.aliyuncs.com/chenby#g" /etc/containerd/config.toml

root@k8s-master1 :~# cat /etc/containerd/config.toml | grep sandbox_image

# Restart containerd

root@k8s-master1 :~# systemctl restart containerd

# Enable containerd at boot

root@k8s-master1 :~# systemctl enable containerd

# Add the apt key

root@k8s-master1 :~# curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | sudo apt-key add -

# Add the Alibaba Cloud mirror as the Kubernetes apt source

root@k8s-master1 :~# apt-add-repository "deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main"

# Update the package index

root@k8s-master1 :~# apt update

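Download the KubeKey installer

Download the kk executable to one of the target machines:

If you have normal access to GitHub/Googleapis:

Download KubeKey from the GitHub Release Page, or run the following command directly.

curl -sfL https://get-kk.kubesphere.io | VERSION=v3.0.7 sh -

Note

The command above downloads KubeKey v3.0.7. Change the version number in the command to download a different version.

Make kk executable: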

chmod +x kk

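Create a multi-node cluster

With the advanced installation you can control custom parameters or create a multi-node cluster. Specifically, the cluster is created from a configuration file.

Deploy the cluster with KubeKey

Create a configuration file (a sample configuration file):

./kk create config --with-kubernetes v1.22.12 --with-kubesphere v3.3.2

Note

Recommended Kubernetes versions for KubeSphere 3.3: v1.20.x, v1.21.x, * v1.22.x, * v1.23.x, and * v1.24.x. With the starred versions, some edge-node features may be unavailable, so if you need edge nodes, v1.21.x is recommended. If you do not specify a Kubernetes version, KubeKey installs Kubernetes v1.23.10 by default. For more information about supported Kubernetes versions, see the support matrix.

If you omit the --with-kubesphere flag in this step, KubeSphere is not deployed. You can then install it only through the addons field in the configuration file, or by adding the flag again later when you run ./kk create cluster.

If you add --with-kubesphere without specifying a KubeSphere version, the latest version of KubeSphere is installed.

After the default file config-sample.yaml is created, edit it to match your environment.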

vi ~/config-sample.yaml

apiVersion: kubekey.kubesphere.io/v1alpha1
kind: Cluster
metadata:
  name: config-sample
spec:
  hosts:
  - {name: master1, address: 10.20.30.1, internalAddress: 10.20.30.1, port: 3322, password: P@ssw0rd!}
  - {name: master2, address: 10.20.30.2, internalAddress: 10.20.30.2, port: 3322, password: P@ssw0rd!}
  - {name: master3, address: 10.20.30.3, internalAddress: 10.20.30.3, port: 3322, password: P@ssw0rd!}
  - {name: node1, address: 10.20.30.4, internalAddress: 10.20.30.4, port: 3322, password: P@ssw0rd!}
  - {name: node2, address: 10.20.30.5, internalAddress: 10.20.30.5, port: 3322, password: P@ssw0rd!}
  - {name: node3, address: 10.20.30.6, internalAddress: 10.20.30.6, port: 3322, password: P@ssw0rd!}
  roleGroups:
    etcd:
    - master1
    - master2
    - master3
    control-plane:
    - master1
    - master2
    - master3
    worker:
    - node1
    - node2
    - node3
  controlPlaneEndpoint:
    domain: lb.kubesphere.local
    # vip
    address: "10.20.30.7"
    port: 6443
  kubernetes:
    version: v1.22.12
    imageRepo: kubesphere
    clusterName: cluster.local
    masqueradeAll: false # masqueradeAll tells kube-proxy to SNAT everything if using the pure iptables proxy mode. [Default: false]
    maxPods: 110 # maxPods is the number of pods that can run on this Kubelet. [Default: 110]
    nodeCidrMaskSize: 24 # internal network node size allocation. This is the size allocated to each node on your network. [Default: 24]
    proxyMode: ipvs # mode specifies which proxy mode to use. [Default: ipvs]
  network:
    plugin: calico
    calico:
      ipipMode: Always # IPIP Mode to use for the IPv4 POOL created at start up. If set to a value other than Never, vxlanMode should be set to "Never". [Always | CrossSubnet | Never] [Default: Always]
      vxlanMode: Never # VXLAN Mode to use for the IPv4 POOL created at start up. If set to a value other than Never, ipipMode should be set to "Never". [Always | CrossSubnet | Never] [Default: Never]
      vethMTU: 1440 # The maximum transmission unit (MTU) setting determines the largest packet size that can be transmitted through your network. [Default: 1440]
    kubePodsCIDR: 10.233.64.0/18
    kubeServiceCIDR: 10.233.0.0/18
  registry:
    registryMirrors: []
    insecureRegistries: []
  addons: # persistent storage configuration
  - name: nfs-client
    namespace: kube-system
    sources:
      chart:
        name: nfs-client-provisioner
        repo: https://charts.kubesphere.io/main
        valuesFile: /home/ubuntu/nfs-client.yaml # the NFS values file described in the persistent storage section

···

Other settings can be changed as needed after installation.
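KubeKey connects to every entry under hosts over SSH, and this example uses the non-default port 3322, so a quick reachability check from the machine that runs kk can save a failed installation attempt. The sketch below assumes bash with /dev/tcp support and the coreutils timeout command, both present on a stock Ubuntu 20.04. port_open and check_ssh_ports are hypothetical helper names.

```shell
# port_open HOST PORT
# Succeeds if a TCP connection to HOST:PORT can be opened within 3 seconds.
# (Hypothetical helper; uses bash's /dev/tcp pseudo-device.)
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# check_ssh_ports
# Probe the SSH port of every host listed in config-sample.yaml.
check_ssh_ports() {
  for host in 10.20.30.1 10.20.30.2 10.20.30.3 10.20.30.4 10.20.30.5 10.20.30.6; do
    if port_open "$host" 3322; then
      echo "$host:3322 reachable"
    else
      echo "$host:3322 NOT reachable"
    fi
  done
}
```

Call check_ssh_ports before creating the cluster. The same port_open helper can also probe the VIP with port_open 10.20.30.7 6443 once the control plane is running.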

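Persistent storage configuration

As noted at the beginning of this article, we recommend preparing persistent storage for production environments; configure it as described below. For development and test environments you can skip this section and use the built-in OpenEBS LocalPV storage.

Continue editing config-sample.yaml and find the addons field. Any persistent storage plugin or client can be defined here, such as NFS Client, Ceph, GlusterFS, or CSI. Configure it according to your storage service type, referring to the corresponding sample YAML file in Persistent Storage Services.

Here we use NFS as the persistent storage: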

vi ~/nfs-client.yaml

nfs:
  server: "NFS server IP goes here" # This is the server IP address. Replace it with your own.
  path: "NFS export path goes here" # Replace the exported directory with your own.
storageClass:
  defaultClass: false
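If you prefer not to edit the values file by hand, it can be written from two variables. This is a sketch only: render_nfs_values is a hypothetical helper name, and 192.0.2.10 and /srv/nfs/k8s are placeholder values that do not come from this article.

```shell
# render_nfs_values SERVER EXPORT_PATH
# Print a values file for the nfs-client-provisioner chart.
# (Hypothetical helper; the keys mirror the nfs-client.yaml shown above.)
render_nfs_values() {
  cat <<EOF
nfs:
  server: "$1"
  path: "$2"
storageClass:
  defaultClass: false
EOF
}

# Placeholder server and export path; replace with your own.
render_nfs_values 192.0.2.10 /srv/nfs/k8s
```

Redirect the output to the path referenced by valuesFile in config-sample.yaml, for example render_nfs_values SERVER EXPORT_PATH > /home/ubuntu/nfs-client.yaml.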

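Create the cluster

Create the cluster using the configuration file customized above:

./kk create cluster -f config-sample.yaml

KubeKey checks the system dependencies and prints the result as a table. If the required dependencies are all marked y, type yes to continue the installation.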

root@k8s-master1:~# ./kk create cluster -f config-sample.yaml

_ __ _ _ __

| | / / | | | | / /

| |/ / _ _| |__ ___| |/ / ___ _ _

| \| | | | '_ \ / _ \ \ / _ \ | | |

| |\ \ |_| | |_) | __/ |\ \ __/ |_| |

\_| \_/\__,_|_.__/ \___\_| \_/\___|\__, |

__/ |

|___/

15:32:22 CST [GreetingsModule] Greetings

15:32:23 CST message: [k8s-node5]

Greetings, KubeKey!

15:32:23 CST message: [k8s-node1]

Greetings, KubeKey!

15:32:24 CST message: [k8s-master1]

Greetings, KubeKey!

15:32:24 CST message: [k8s-master2]

Greetings, KubeKey!

15:32:25 CST message: [k8s-master3]

Greetings, KubeKey!

15:32:26 CST message: [k8s-node3]

Greetings, KubeKey!

15:32:26 CST message: [k8s-node2]

Greetings, KubeKey!

15:32:27 CST message: [k8s-node4]

Greetings, KubeKey!

15:32:27 CST success: [k8s-node5]

15:32:27 CST success: [k8s-node1]

15:32:27 CST success: [k8s-master1]

15:32:27 CST success: [k8s-master2]

15:32:27 CST success: [k8s-master3]

15:32:27 CST success: [k8s-node3]

15:32:27 CST success: [k8s-node2]

15:32:27 CST success: [k8s-node4]

15:32:27 CST [NodePreCheckModule] A pre-check on nodes

15:32:27 CST success: [k8s-node2]

15:32:27 CST success: [k8s-master3]

15:32:27 CST success: [k8s-node3]

15:32:27 CST success: [k8s-node5]

15:32:27 CST success: [k8s-master2]

15:32:27 CST success: [k8s-master1]

15:32:27 CST success: [k8s-node4]

15:32:27 CST success: [k8s-node1]

15:32:27 CST [ConfirmModule] Display confirmation form

+-------------+------+------+---------+----------+-------+-------+---------+-----------+--------+--------+-------------------------+------------+-------------+------------------+--------------+

| name | sudo | curl | openssl | ebtables | socat | ipset | ipvsadm | conntrack | chrony | docker | containerd | nfs client | ceph client | glusterfs client | time |

+-------------+------+------+---------+----------+-------+-------+---------+-----------+--------+--------+-------------------------+------------+-------------+------------------+--------------+

| k8s-master1 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

| k8s-master2 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

| k8s-master3 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

| k8s-node1 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

| k8s-node2 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

| k8s-node3 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

| k8s-node4 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

| k8s-node5 | y | y | y | y | y | y | y | y | y | | 1.6.12-0ubuntu1~20.04.1 | y | | | CST 15:32:27 |

+-------------+------+------+---------+----------+-------+-------+---------+-----------+--------+--------+-------------------------+------------+-------------+------------------+--------------+

This is a simple check of your environment.

Before installation, ensure that your machines meet all requirements specified at

https://github.com/kubesphere/kubekey#requirements-and-recommendations

Continue this installation? [yes/no]: yes

15:32:41 CST success: [LocalHost]

15:32:41 CST [NodeBinariesModule] Download installation binaries

Output after a successful installation

**************************************************

#####################################################

### Welcome to KubeSphere! ###

#####################################################

Console: http://10.20.30.1:30880

Account: admin

Password: P@88w0rd

NOTES:

1. After you log into the console, please check the

monitoring status of service components in

the "Cluster Management". If any service is not

ready, please wait patiently until all components

are up and running.

2. Please change the default password after login.

#####################################################
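Once the welcome banner appears, it is worth confirming that every node has actually joined and is Ready. One way to do that, sketched here with a hypothetical helper named not_ready_nodes, is to filter the output of kubectl get nodes --no-headers for nodes whose STATUS column is anything other than Ready.

```shell
# not_ready_nodes
# Reads `kubectl get nodes --no-headers` output on stdin and prints the
# names of nodes whose STATUS column is not exactly "Ready".
# (Hypothetical helper; a cordoned node shows "Ready,SchedulingDisabled"
# and is therefore listed as well.)
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Example on a control-plane node:
#   kubectl get nodes --no-headers | not_ready_nodes
```

Empty output means all nodes report Ready. Any name that is printed deserves a closer look with kubectl describe node.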
