Facial Expression Detection with Vue and face-api.js

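This is only a simple expression-detection demo. The parameters (detector options, the mouth-open threshold and the fatigue time threshold) can be adjusted freely.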


```vue
<template>
  <div id="expression">
    <video ref="video" width="720" height="560" autoplay></video>
    <canvas ref="canvas" style="position: absolute"></canvas>
    <div class="fatigue-text">{{ fatigue ? 'Fatigued' : 'Not fatigued' }}</div>
  </div>
</template>

<script>
import * as faceapi from 'face-api.js';

export default {
  data() {
    return {
      mouthOpen: false,
      mouthOpenStartTime: null,
      fatigue: false, // fatigue state shown in the template
    };
  },

  mounted() {
    this.init();
  },

  beforeDestroy() {
    // Stop the detection loop and release the camera
    clearInterval(this.intervalId);
    const stream = this.$refs.video && this.$refs.video.srcObject;
    if (stream) {
      stream.getTracks().forEach(track => track.stop());
    }
  },

  methods: {
    async init() {
      await this.loadModels();
      const video = this.$refs.video;
      const canvas = this.$refs.canvas;
      this.startVideo(video, canvas);
    },

    async loadModels() {
      try {
        // faceRecognitionNet is not needed for expressions, so it is not loaded
        await Promise.all([
          faceapi.nets.tinyFaceDetector.loadFromUri('/models'),
          faceapi.nets.faceLandmark68Net.loadFromUri('/models'),
          faceapi.nets.faceExpressionNet.loadFromUri('/models'),
        ]);
        console.log('models loaded');
      } catch (error) {
        console.error('Error loading face-api.js models:', error);
        throw error;
      }
    },

    startVideo(video, canvas) {
      navigator.mediaDevices.getUserMedia({ video: true })
        .then(stream => {
          video.srcObject = stream;
          // Start detecting once the video dimensions are known
          video.addEventListener('loadedmetadata', () => {
            this.initVideo(video, canvas);
          }, { once: true });
        })
        .catch(err => {
          console.error('Error getting the camera stream:', err);
        });
    },

    initVideo(video, canvas) {
      if (video.videoWidth === 0 || video.videoHeight === 0) {
        console.error('Invalid video dimensions');
        return;
      }

      // Draw at the size the <video> element is displayed at (720x560)
      // so the overlay lines up with the picture
      const displaySize = { width: video.width, height: video.height };
      faceapi.matchDimensions(canvas, displaySize);

      // TinyFaceDetectorOptions accepts inputSize (a multiple of 32) and scoreThreshold
      const options = new faceapi.TinyFaceDetectorOptions({ inputSize: 256, scoreThreshold: 0.5 });
      let busy = false;

      this.intervalId = setInterval(async () => {
        if (busy) return; // skip this tick while the previous detection is still running
        busy = true;
        try {
          const detections = await faceapi
            .detectAllFaces(video, options)
            .withFaceLandmarks()
            .withFaceExpressions();
          const resizedDetections = faceapi.resizeResults(detections, displaySize);

          canvas.getContext('2d').clearRect(0, 0, canvas.width, canvas.height);
          faceapi.draw.drawDetections(canvas, resizedDetections);
          faceapi.draw.drawFaceLandmarks(canvas, resizedDetections);
          faceapi.draw.drawFaceExpressions(canvas, resizedDetections);

          // "Mouth open" signal: the "surprised" probability of the first face
          // is used as a proxy (0 when no face is detected)
          const mouthOpenProbability = resizedDetections[0]?.expressions?.surprised ?? 0;
          // Above this value the mouth counts as open
          const mouthOpenThreshold = 0.2;
          // A mouth kept open longer than this counts as fatigue
          const fatigueThreshold = 1000; // milliseconds

          if (mouthOpenProbability > mouthOpenThreshold) {
            // Mouth was closed before: record when it opened
            if (!this.mouthOpen) {
              this.mouthOpenStartTime = Date.now();
            }
            this.mouthOpen = true;
          } else {
            this.mouthOpen = false;
            // Mouth was open before: measure how long it stayed open
            if (this.mouthOpenStartTime !== null) {
              const mouthOpenTime = Date.now() - this.mouthOpenStartTime;
              console.log(mouthOpenTime);
              this.fatigue = mouthOpenTime > fatigueThreshold;
              if (this.fatigue) {
                console.log('Fatigued');
                // Handle the fatigue state here
              }
              this.mouthOpenStartTime = null;
            }
          }
        } catch (error) {
          console.error('Error during face detection:', error);
        } finally {
          busy = false;
        }

        // Notify the parent component (the original passes no payload here;
        // add the state array the parent needs)
        this.$emit('my-event');
      }, 100);
    },
  },
};
</script>

<style>
#expression {
  margin: 0;
  padding: 0;
  display: flex;
  align-items: center;
  justify-content: center;
}

.fatigue-text {
  font-size: 24px;
  color: red;
  position: absolute;
  top: 20px;
  left: 20px;
}
</style>
```
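The mouth-open / fatigue rule inside the component's `setInterval` callback can be pulled out into a plain function, which makes the timing logic testable without a camera. The sketch below is a hypothetical refactoring: `createFatigueTracker` is not part of face-api.js or the original component, and it assumes timestamps in milliseconds and the same two thresholds the component uses (0.2 and 1000 ms).

```javascript
// Hypothetical helper: a pure version of the component's mouth-open / fatigue rule.
function createFatigueTracker({ mouthOpenThreshold = 0.2, fatigueThreshold = 1000 } = {}) {
  let mouthOpen = false;
  let mouthOpenStartTime = null;
  let fatigue = false;

  // probability: the "mouth open" score (the component uses expressions.surprised)
  // now: current time in milliseconds
  function update(probability, now) {
    if (probability > mouthOpenThreshold) {
      if (!mouthOpen) {
        mouthOpenStartTime = now; // mouth just opened: remember when
      }
      mouthOpen = true;
    } else {
      mouthOpen = false;
      if (mouthOpenStartTime !== null) {
        // mouth just closed: judge fatigue by how long it stayed open
        fatigue = now - mouthOpenStartTime > fatigueThreshold;
        mouthOpenStartTime = null;
      }
    }
    return { mouthOpen, fatigue };
  }

  return { update };
}

// Example: 100 ms ticks, mouth "open" (score 0.9) from t=100 ms to t=1300 ms, then closed
const tracker = createFatigueTracker();
tracker.update(0.05, 0);
for (let t = 100; t <= 1300; t += 100) tracker.update(0.9, t);
console.log(tracker.update(0.05, 1400)); // { mouthOpen: false, fatigue: true }
```

Note that, as in the component, fatigue is only re-evaluated when the mouth closes again, so a long yawn is reported at its end rather than while it is still in progress.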

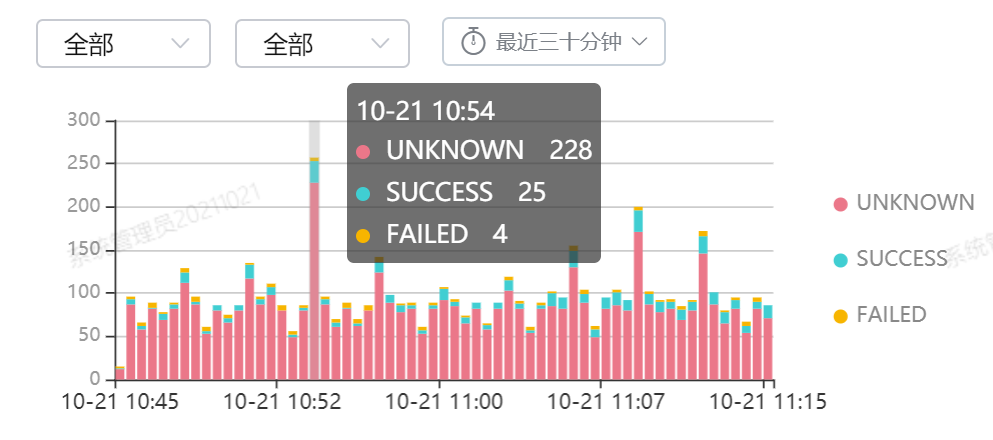

This is what it looks like once implemented.
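The `surprised` score is only an indirect signal for an open mouth. A commonly used alternative is the mouth aspect ratio (MAR), computed from the inner-lip points of the 68-point landmarks that `withFaceLandmarks()` already returns. This is a sketch under assumptions, not the original author's method: `mouthAspectRatio` is a hypothetical helper, it expects the 20-point array from `landmarks.getMouth()` (points 48 to 67 of the 68-point scheme, so inner-lip point 60 is index 12), and a suitable threshold would have to be tuned on real footage.

```javascript
// Hypothetical helper: mouth aspect ratio from the 20 mouth points (landmarks 48..67).
// Inner lip: 60 = left corner, 61-63 = upper lip, 64 = right corner, 65-67 = lower lip.
function distance(a, b) {
  return Math.hypot(a.x - b.x, a.y - b.y);
}

function mouthAspectRatio(mouth) {
  if (mouth.length !== 20) throw new Error('expected 20 mouth points');
  const [p60, p61, p62, p63, p64, p65, p66, p67] = mouth.slice(12, 20);
  // Sum of three vertical inner-lip gaps, normalized by twice the mouth width
  const vertical = distance(p61, p67) + distance(p62, p66) + distance(p63, p65);
  const horizontal = distance(p60, p64);
  return vertical / (2 * horizontal);
}

// Example with synthetic points: inner lips 2 units apart, corners 4 units apart
const mouth = Array.from({ length: 20 }, () => ({ x: 0, y: 0 }));
mouth[12] = { x: 0, y: 0 };  // 60, left corner
mouth[13] = { x: 1, y: -1 }; // 61
mouth[14] = { x: 2, y: -1 }; // 62
mouth[15] = { x: 3, y: -1 }; // 63
mouth[16] = { x: 4, y: 0 };  // 64, right corner
mouth[17] = { x: 3, y: 1 };  // 65
mouth[18] = { x: 2, y: 1 };  // 66
mouth[19] = { x: 1, y: 1 };  // 67
console.log(mouthAspectRatio(mouth)); // 0.75
```

In the component it could be fed with `resizedDetections[0].landmarks.getMouth()` in place of `expressions.surprised`, with `mouthOpenThreshold` retuned for the new scale.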
