Installing Kubernetes 1.23.6 on CentOS 7

1. Prepare three hosts and install CentOS 7

192.168.1.211 k8s-master

192.168.1.212 k8s-node1

192.168.1.213 k8s-node2
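The three address/name pairs above are already in `/etc/hosts` format. The original does not show how the names were registered, so the following is only a sketch under the assumption that each node should resolve the others by name. The helper `add_host_entry` is hypothetical, and the demo writes to a scratch file rather than the real `/etc/hosts`.

```shell
#!/usr/bin/env bash
# add_host_entry FILE IP NAME: append "IP NAME" to FILE unless NAME is already listed.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  grep -qw "$name" "$file" 2>/dev/null || echo "$ip $name" >> "$file"
}

hosts_file="$(mktemp)"   # assumption: use /etc/hosts (as root) on a real node
add_host_entry "$hosts_file" 192.168.1.211 k8s-master
add_host_entry "$hosts_file" 192.168.1.212 k8s-node1
add_host_entry "$hosts_file" 192.168.1.213 k8s-node2
add_host_entry "$hosts_file" 192.168.1.211 k8s-master   # repeated call adds nothing
cat "$hosts_file"
```

On each node, the matching hostname could then be set with `hostnamectl set-hostname k8s-master` (or `k8s-node1`, `k8s-node2`).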

Note: a fresh CentOS 7 installation does not bring the network up by default, so configure the network first:

vim /etc/sysconfig/network-scripts/ifcfg-ens192

TYPE="Ethernet"

PROXY_METHOD="none"

BROWSER_ONLY="no"

BOOTPROTO="static"

DEFROUTE="yes"

IPV4_FAILURE_FATAL="no"

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

IPV6_DEFROUTE="yes"

IPV6_FAILURE_FATAL="no"

IPV6_ADDR_GEN_MODE="stable-privacy"

NAME="ens192"

UUID="b31efbd7-8a69-45fa-a8f1-220cc4dd0511"

DEVICE="ens192"

ONBOOT="yes"

IPADDR=192.168.1.211

NETMASK=255.255.255.0

GATEWAY=192.168.1.1

DNS1=114.114.114.114

DNS2=8.8.8.8

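Set BOOTPROTO="static" so the address is static, and ONBOOT="yes" so the interface is brought up at boot. The IP address, netmask, gateway, and DNS servers go at the bottom of the file.

After saving the file, restart the network: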

service network restart

For the other two nodes, copy this file over first; after that, only the IP address needs to be changed:

[root@localhost network-scripts]# scp ifcfg-ens192 root@192.168.1.107:/etc/sysconfig/network-scripts

root@192.168.1.107's password:

ifcfg-ens192 100% 412 439.2KB/s 00:00
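Changing the address on the copied file can also be scripted. A minimal sketch, assuming the file contains a single `IPADDR=` line as in the listing above; `set_ifcfg_ip` is a hypothetical helper, and the demo edits a scratch copy instead of the real file under `/etc/sysconfig/network-scripts`.

```shell
#!/usr/bin/env bash
# set_ifcfg_ip FILE IP: rewrite the IPADDR= line of an ifcfg file in place.
set_ifcfg_ip() {
  local file="$1" ip="$2"
  sed -i "s/^IPADDR=.*/IPADDR=${ip}/" "$file"
}

demo_cfg="$(mktemp)"   # scratch copy standing in for ifcfg-ens192
printf 'BOOTPROTO="static"\nONBOOT="yes"\nIPADDR=192.168.1.211\nNETMASK=255.255.255.0\n' > "$demo_cfg"

set_ifcfg_ip "$demo_cfg" 192.168.1.212   # address of k8s-node1
grep '^IPADDR=' "$demo_cfg"
```

On the node itself, the network still has to be restarted afterwards for the new address to take effect.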

SSH also has to be enabled; the steps are the same on all three nodes:

yum install openssh-server

vim /etc/ssh/sshd_config

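1. Uncomment the Port 22 line so that sshd listens on port 22.

2. Set PermitRootLogin to yes or no: yes allows logging in as root, no forbids it.

3. Set PasswordAuthentication to yes or no: no disables password logins, yes allows them. If you log in with your own account and a password, set this to yes; otherwise Windows can ping the Linux host but the SSH connection fails.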

systemctl restart sshd.service
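To double-check the three settings after editing, something like the following could be used. It is a sketch only: `sshd_setting` is a hypothetical helper that reads the first uncommented occurrence of a keyword (sshd uses the first value it obtains), and the demo parses a scratch file rather than `/etc/ssh/sshd_config`.

```shell
#!/usr/bin/env bash
# sshd_setting FILE KEY: print the first uncommented value of KEY in an sshd_config-style file.
sshd_setting() {
  awk -v key="$2" 'tolower($1) == tolower(key) { print $2; exit }' "$1"
}

cfg="$(mktemp)"   # stand-in for /etc/ssh/sshd_config
cat > "$cfg" <<'EOF'
#Port 22
Port 22
PermitRootLogin yes
#PasswordAuthentication no
PasswordAuthentication yes
EOF

for key in Port PermitRootLogin PasswordAuthentication; do
  echo "$key=$(sshd_setting "$cfg" "$key")"
done
```

On a live host, `sshd -T` (run as root) prints the effective configuration and is the more reliable check.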

2. Disable the firewall and swap

Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

Disable SELinux

sed -i s/SELINUX=enforcing/SELINUX=disabled/ /etc/selinux/config

setenforce 0

getenforce

# Turn swap off for the running system

swapoff -a

# Disable it permanently: comment out the swap line in /etc/fstab.

sed -i 's/.*swap.*/#&/' /etc/fstab
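The `sed` expression above prefixes every line that contains "swap" each time it runs, so a second run produces `##`. A slightly more defensive variant, shown here as a sketch on a scratch file (the helper name `comment_out_swap` and the sample fstab entries are made up for illustration), only touches lines that are not already commented:

```shell
#!/usr/bin/env bash
# comment_out_swap FILE: comment out active swap entries; safe to run more than once.
comment_out_swap() {
  sed -i '/^[^#].*swap/ s/^/#/' "$1"
}

demo_fstab="$(mktemp)"   # stand-in for /etc/fstab
cat > "$demo_fstab" <<'EOF'
/dev/mapper/centos-root /    xfs  defaults 0 0
/dev/mapper/centos-swap swap swap defaults 0 0
EOF

comment_out_swap "$demo_fstab"
comment_out_swap "$demo_fstab"   # second run leaves the file unchanged
cat "$demo_fstab"
```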

3. Configure IPv4 and IPv6 forwarding

vim /etc/sysctl.d/k8s.conf

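# Enable IP forwarding (0 = disabled, 1 = enabled)

net.ipv4.ip_forward = 1

# Pass bridged IPv6 packets through the ip6tables chains

net.bridge.bridge-nf-call-ip6tables = 1

# Pass bridged IPv4 packets through the iptables chains, so that frames forwarded by a layer-2 bridge are also filtered by the layer-3 FORWARD rules

net.bridge.bridge-nf-call-iptables = 1

sysctl treats everything after the = sign as the value, so each comment sits on its own line. Load the br_netfilter module (the net.bridge.* keys do not exist without it), then apply the file; a bare sysctl -p only reads /etc/sysctl.conf:

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf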
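Whether the three kernel parameters actually took effect can be read back from `/proc/sys`. The following is a sketch, not part of the original procedure: `check_sysctl` is a hypothetical helper, and `SYSCTL_ROOT` is an invented override that only exists so the function can be tried against a scratch directory.

```shell
#!/usr/bin/env bash
# check_sysctl KEY WANT: compare a kernel parameter with the expected value.
# SYSCTL_ROOT (hypothetical) defaults to /proc/sys; dots in KEY map to path separators.
check_sysctl() {
  local key="$1" want="$2"
  local path="${SYSCTL_ROOT:-/proc/sys}/${key//.//}"
  if [ ! -r "$path" ]; then
    echo "$key: not present"
    return 1
  fi
  local got
  got="$(cat "$path")"
  if [ "$got" = "$want" ]; then
    echo "$key: ok ($got)"
  else
    echo "$key: got $got, want $want"
    return 1
  fi
}

for key in net.ipv4.ip_forward \
           net.bridge.bridge-nf-call-iptables \
           net.bridge.bridge-nf-call-ip6tables; do
  check_sysctl "$key" 1 || true   # report every key instead of stopping at the first mismatch
done
```

A "not present" result for the `net.bridge.*` keys usually means the `br_netfilter` module is not loaded.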

4. Time synchronization

yum install ntpdate -y

ntpdate time.windows.com

5. Install Docker

yum install -y yum-utils

yum-config-manager \
    --add-repo \
    https://download.docker.com/linux/centos/docker-ce.repo

yum install docker-ce-20.10.7-3.el7 docker-ce-cli-20.10.7-3.el7 \
    containerd.io docker-compose-plugin

Start Docker

systemctl start docker

systemctl enable docker

Check that Docker runs correctly

docker run hello-world

Docker's configuration needs one change: set the cgroup driver to systemd in /etc/docker/daemon.json, then save the file and restart Docker. If the file does not exist, create it at that path.

cat <<EOF | sudo tee /etc/docker/daemon.json
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2"
}
EOF

# Then run

systemctl daemon-reload

systemctl restart docker
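The cgroup driver matters because kubeadm configures the kubelet for the systemd driver by default (since v1.22), and a mismatch with Docker keeps the kubelet from starting cleanly. A sketch of a quick check follows; `cgroup_driver_from_json` is a hypothetical helper, and the demo reads a scratch file rather than `/etc/docker/daemon.json`.

```shell
#!/usr/bin/env bash
# cgroup_driver_from_json FILE: print the native.cgroupdriver value set in a daemon.json-style file.
cgroup_driver_from_json() {
  grep -o 'native\.cgroupdriver=[a-z]*' "$1" | cut -d= -f2
}

daemon_json="$(mktemp)"   # stand-in for /etc/docker/daemon.json
cat > "$daemon_json" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "storage-driver": "overlay2"
}
EOF

echo "configured cgroup driver: $(cgroup_driver_from_json "$daemon_json")"

# On a host where Docker is installed, compare with what the daemon itself reports.
if command -v docker >/dev/null 2>&1; then
  docker info --format '{{.CgroupDriver}}' 2>/dev/null || echo "docker daemon not reachable"
fi
```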

To reinstall Docker, run the following steps in order:

sudo docker stop $(docker ps -aq) # stop all running containers

sudo docker rm $(docker ps -aq) # remove all containers

sudo docker rmi $(docker images -q) # remove all images

sudo yum remove docker-ce docker-ce-cli containerd.io # uninstall Docker Engine

sudo rm -rf /var/lib/docker # delete the Docker data directory

# 6. Configure the yum repository

```bash
cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
```

7. Install kubeadm

# Install kubeadm, kubectl, and kubelet

yum install -y kubeadm-1.23.6-0 kubectl-1.23.6-0 kubelet-1.23.6-0 --disableexcludes=kubernetes

8. Lock the versions

# Install the versionlock plugin

yum install -y yum-plugin-versionlock

# Lock the packages

yum versionlock add kubeadm kubectl kubelet

# Show the lock list

yum versionlock list

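# 9. Initialize the cluster

Run kubeadm init on the master. --apiserver-advertise-address must be the master's own IP (192.168.1.211 here), and --pod-network-cidr is the range the network plugin will use later:

```bash
kubeadm init \
  --apiserver-advertise-address=192.168.1.211 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.6 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16
```

The full output of the run: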

[root@k8s-master manifests]# kubeadm init --apiserver-advertise-address=192.168.1.211 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.23.6 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.23.6

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.1.211]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.1.211 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.1.211 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 9.005001 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.23" in namespace kube-system with the configuration for the kubelets in the cluster

NOTE: The "kubelet-config-1.23" naming of the kubelet ConfigMap is deprecated. Once the UnversionedKubeletConfigMap feature gate graduates to Beta the default name will become just "kubelet-config". Kubeadm upgrade will handle this transition transparently.

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master as control-plane by adding the labels: [node-role.kubernetes.io/master(deprecated) node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 1i7jsl.y0nkiyyc0fzf6noz

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.1.211:6443 --token 1i7jsl.y0nkiyyc0fzf6noz \

--discovery-token-ca-cert-hash sha256:c246475ad57fe68d1b38a1d2dd7ded82b5fd7ecd0f7d41670a4fdd703170be0b

[root@k8s-master manifests]# mkdir -p $HOME/.kube

[root@k8s-master manifests]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master manifests]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master manifests]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane,master 91m v1.23.6

[root@k8s-master manifests]#

10. Join the remaining nodes to the cluster

First, get the join command (with a fresh token) on the master:

[root@k8s-master manifests]# kubeadm token create --print-join-command

kubeadm join 192.168.1.211:6443 --token 7h15yx.zk2qov4zfzxnk767 --discovery-token-ca-cert-hash sha256:c246475ad57fe68d1b38a1d2dd7ded82b5fd7ecd0f7d41670a4fdd703170be0b
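The `--discovery-token-ca-cert-hash` value is the SHA-256 hash of the cluster CA's public key, which is why it is identical in both join commands even though the token changed. The sketch below follows the `openssl` pipeline given in the kubeadm documentation; the helper name `ca_cert_hash` is made up, and the demo uses a throwaway self-signed certificate because the real `/etc/kubernetes/pki/ca.crt` only exists on the master.

```shell
#!/usr/bin/env bash
# ca_cert_hash CERT: print the sha256 hash of the certificate's RSA public key,
# in the form expected after "sha256:" in --discovery-token-ca-cert-hash.
ca_cert_hash() {
  openssl x509 -pubkey -noout -in "$1" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Demo with a throwaway CA; on the master, pass /etc/kubernetes/pki/ca.crt instead.
demo_dir="$(mktemp -d)"
openssl req -x509 -newkey rsa:2048 -nodes -days 1 -subj "/CN=demo-ca" \
  -keyout "$demo_dir/ca.key" -out "$demo_dir/ca.crt" 2>/dev/null

echo "sha256:$(ca_cert_hash "$demo_dir/ca.crt")"
```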

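Run the join command on each worker node. Note that kubectl does not work on the worker yet, because the worker has no kubeconfig: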

[root@k8s-node2 hjw]# kubeadm join 192.168.1.211:6443 --token 7h15yx.zk2qov4zfzxnk767 --discovery-token-ca-cert-hash sha256:c246475ad57fe68d1b38a1d2dd7ded82b5fd7ecd0f7d41670a4fdd703170be0b

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@k8s-node1 hjw]# kubectl get nodes

The connection to the server localhost:8080 was refused - did you specify the right host or port?

[root@k8s-master manifests]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane,master 3h10m v1.23.6

k8s-node1 NotReady <none> 6m10s v1.23.6

[root@k8s-master manifests]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-6d8c4cb4d-ttmjb 0/1 Pending 0 3h14m

kube-system coredns-6d8c4cb4d-vxfzh 0/1 Pending 0 3h14m

kube-system etcd-k8s-master 1/1 Running 0 3h15m

kube-system kube-apiserver-k8s-master 1/1 Running 0 3h15m

kube-system kube-controller-manager-k8s-master 1/1 Running 4 3h15m

kube-system kube-proxy-7zckm 1/1 Running 0 3h14m

kube-system kube-proxy-p99tz 1/1 Running 1 10m

kube-system kube-proxy-tj5xp 1/1 Running 0 69s

kube-system kube-scheduler-k8s-master 1/1 Running 4 3h15m

[root@k8s-master manifests]# cd /opt

[root@k8s-master opt]# mkdir k8s

[root@k8s-master opt]# ls

cni containerd k8s rh

[root@k8s-master opt]# cd k8s

[root@k8s-master k8s]# curl https://docs.projectcalico.org/manifests/calico.yaml -o

curl: option -o: requires parameter

curl: try 'curl --help' or 'curl --manual' for more information

[root@k8s-master k8s]# curl https://docs.projectcalico.org/manifests/calico.yaml -O

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 73 100 73 0 0 38 0 0:00:01 0:00:01 --:--:-- 38

[root@k8s-master k8s]# vim calico.yaml

[root@k8s-master k8s]# ls

calico.yaml

[root@k8s-master k8s]# rm -f ac

[root@k8s-master k8s]# rm -f calico.yaml

[root@k8s-master k8s]# ls

[root@k8s-master k8s]# curl https://raw.githubusercontent.com/projectcalico/calico/v3.26.0/manifests/calico.yaml -O

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 238k 100 238k 0 0 265k 0 --:--:-- --:--:-- --:--:-- 264k

[root@k8s-master k8s]# ls

calico.yaml

[root@k8s-master k8s]# vim calico.yaml

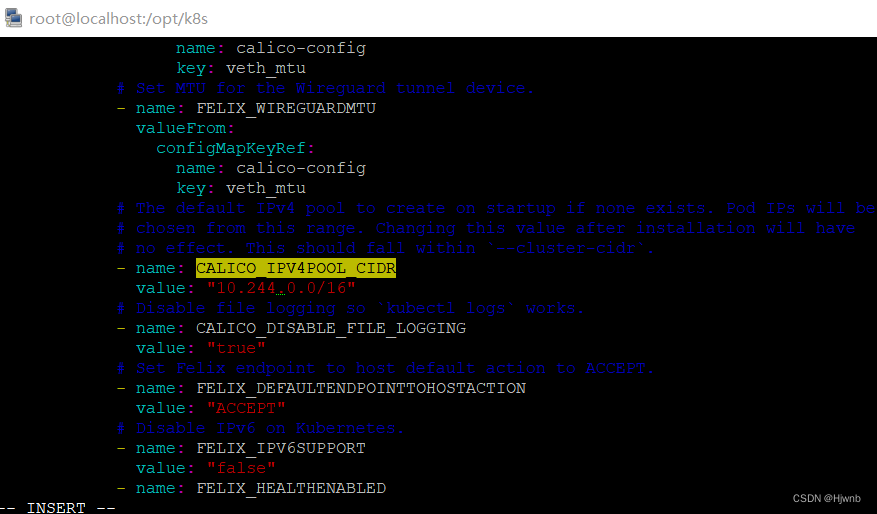

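In vim, press Esc and type /CALICO_IPV4POOL_CIDR to search for the setting.

Edit it as shown in the screenshot.

The value must be the range passed to kubeadm init when the cluster was initialized: --pod-network-cidr=10.244.0.0/16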
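The same edit can be made without opening vim. This sketch assumes the manifest ships the setting commented out with the default `192.168.0.0/16`, which is how it appears in the Calico manifest; verify the two lines in your copy first. `set_calico_pool_cidr` is a hypothetical helper, and the demo works on a two-line excerpt rather than the full `calico.yaml`.

```shell
#!/usr/bin/env bash
# set_calico_pool_cidr FILE CIDR: uncomment CALICO_IPV4POOL_CIDR and set its value.
set_calico_pool_cidr() {
  local file="$1" cidr="$2"
  sed -i \
    -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
    -e "s|#   value: \"192.168.0.0/16\"|  value: \"${cidr}\"|" \
    "$file"
}

manifest="$(mktemp)"   # scratch excerpt; on the master this would be calico.yaml
cat > "$manifest" <<'EOF'
            # - name: CALICO_IPV4POOL_CIDR
            #   value: "192.168.0.0/16"
EOF

set_calico_pool_cidr "$manifest" 10.244.0.0/16
cat "$manifest"
```

The replacement strings keep the YAML indentation intact, so the `name` and `value` keys stay aligned with the neighbouring entries of the `env` list.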

[root@k8s-master k8s]# grep image calico.yaml

image: docker.io/calico/cni:v3.26.0

imagePullPolicy: IfNotPresent

image: docker.io/calico/cni:v3.26.0

imagePullPolicy: IfNotPresent

image: docker.io/calico/node:v3.26.0

imagePullPolicy: IfNotPresent

image: docker.io/calico/node:v3.26.0

imagePullPolicy: IfNotPresent

image: docker.io/calico/kube-controllers:v3.26.0

imagePullPolicy: IfNotPresent

[root@k8s-master k8s]# ls

calico.yaml

[root@k8s-master k8s]# kubectl apply -f calico.yaml

poddisruptionbudget.policy/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

serviceaccount/calico-node created

serviceaccount/calico-cni-plugin created

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgpfilters.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrole.rbac.authorization.k8s.io/calico-cni-plugin created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-cni-plugin created

daemonset.apps/calico-node created

deployment.apps/calico-kube-controllers created

[root@k8s-master k8s]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-6bbd6cfcf-85rwt 1/1 Running 0 2m23s

kube-system calico-node-fp6vf 1/1 Running 0 2m24s

kube-system calico-node-pqf8v 1/1 Running 0 2m24s

kube-system calico-node-vnxpw 1/1 Running 0 2m24s

kube-system coredns-6d8c4cb4d-ttmjb 1/1 Running 0 3h29m

kube-system coredns-6d8c4cb4d-vxfzh 1/1 Running 0 3h29m

kube-system etcd-k8s-master 1/1 Running 0 3h29m

kube-system kube-apiserver-k8s-master 1/1 Running 0 3h29m

kube-system kube-controller-manager-k8s-master 1/1 Running 4 3h29m

kube-system kube-proxy-7zckm 1/1 Running 0 3h29m

kube-system kube-proxy-p99tz 1/1 Running 1 25m

kube-system kube-proxy-tj5xp 1/1 Running 0 15m

kube-system kube-scheduler-k8s-master 1/1 Running 4 3h29m

[root@k8s-master k8s]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 3h29m v1.23.6

k8s-node1 Ready <none> 25m v1.23.6

k8s-node2 Ready <none> 15m v1.23.6

[root@k8s-master k8s]#

Copy admin.conf from the master to the other hosts

[root@k8s-master k8s]# scp /etc/kubernetes/admin.conf root@192.168.1.212:/etc/kubernetes/admin.conf

The authenticity of host '192.168.1.212 (192.168.1.212)' can't be established.

ECDSA key fingerprint is SHA256:pPOL4lATDuhAGCQcPRxBdd2H3lmsRWWTAX7TPMLWLJo.

ECDSA key fingerprint is MD5:99:20:40:64:2a:23:b1:fc:f0:7c:72:70:94:24:a8:6d.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.1.212' (ECDSA) to the list of known hosts.

root@192.168.1.212's password:

admin.conf 100% 5637 4.0MB/s 00:00

[root@k8s-master k8s]# scp /etc/kubernetes/admin.conf root@192.168.1.213:/etc/kubernetes/admin.conf

The authenticity of host '192.168.1.213 (192.168.1.213)' can't be established.

ECDSA key fingerprint is SHA256:coToV4GIqzpEzwEraBaXSscAUjgPFxrm3xyCBfIbNF8.

ECDSA key fingerprint is MD5:cb:d7:4a:b4:87:54:b0:88:78:17:f4:d8:28:c3:3e:99.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.1.213' (ECDSA) to the list of known hosts.

root@192.168.1.213's password:

admin.conf 100% 5637 839.6KB/s 00:00

[root@k8s-master k8s]#

On the other nodes, add it to the environment

[root@k8s-node2 hjw]# echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

[root@k8s-node2 hjw]# source ~/.bash_profile

[root@k8s-node2 hjw]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 3h48m v1.23.6

k8s-node1 Ready <none> 43m v1.23.6

k8s-node2 Ready <none> 33m v1.23.6

[root@k8s-node2 hjw]#

After that, kubectl works on the worker nodes as well.
