Kubernetes in Practice, Chapter 4: Resource Scheduling (1)

[root@k8s-master deployments]# kubectl set image deployment/nginx-deploy nginx=nginx:1.91

deployment.apps/nginx-deploy image updated

Monitor the rollout status. Because the image tag `nginx:1.91` is wrong, the image cannot be pulled and the rollout stalls.

kubectl rollout status deployments nginx-deploy

[root@k8s-master deployments]# kubectl rollout status deployments nginx-deploy

Waiting for deployment “nginx-deploy” rollout to finish: 1 out of 3 new replicas have been updated…

After stopping the watch, list the ReplicaSets (rs). The newly created ReplicaSet has 1 replica.

kubectl get rs

[root@k8s-master deployments]# kubectl get rs

NAME DESIRED CURRENT READY AGE

nginx-deploy-754898b577 0 0 0 49m

nginx-deploy-78d8bf4fd7 3 3 3 70m

nginx-deploy-f7f5656c7 1 1 0 44s

`kubectl get pods` shows that the Pod belonging to the new ReplicaSet is stuck in the ImagePullBackOff state.

[root@k8s-master deployments]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-deploy-78d8bf4fd7-25rmq 1/1 Running 0 28m

nginx-deploy-78d8bf4fd7-mq6kc 1/1 Running 0 28m

nginx-deploy-78d8bf4fd7-vxcpv 1/1 Running 0 28m

nginx-deploy-f7f5656c7-xfpq5 0/1 ImagePullBackOff 0 105s

To fix this, find the revision to return to and roll back to it.

`kubectl rollout history deployment/nginx-deploy` lists the available revisions.

[root@k8s-master deployments]# kubectl rollout history deployment/nginx-deploy

deployment.apps/nginx-deploy

REVISION CHANGE-CAUSE

2

3

4

`kubectl rollout history deployment/nginx-deploy --revision=REVISION` (where REVISION is the revision number) shows the details of a single revision.

[root@k8s-master deployments]# kubectl rollout history deployment/nginx-deploy --revision=4

deployment.apps/nginx-deploy with revision #4

Pod Template:

Labels: app=nginx-deploy

pod-template-hash=f7f5656c7

Containers:

nginx:

Image: nginx:1.91

Port:

Host Port:

Environment:

Mounts:

Volumes:

[root@k8s-master deployments]# kubectl rollout history deployment/nginx-deploy --revision=3

deployment.apps/nginx-deploy with revision #3

Pod Template:

Labels: app=nginx-deploy

pod-template-hash=78d8bf4fd7

Containers:

nginx:

Image: nginx:1.7.9

Port:

Host Port:

Environment:

Mounts:

Volumes:

[root@k8s-master deployments]# kubectl rollout history deployment/nginx-deploy --revision=2

deployment.apps/nginx-deploy with revision #2

Pod Template:

Labels: app=nginx-deploy

pod-template-hash=754898b577

Containers:

nginx:

Image: nginx:1.9.1

Port:

Host Port:

Environment:

Mounts:

Volumes:

Once you have confirmed which revision to return to, `kubectl rollout undo deployment/nginx-deploy` rolls back to the previous revision.

[root@k8s-master deployments]# kubectl rollout undo deployment/nginx-deploy

deployment.apps/nginx-deploy rolled back

You can also roll back to a specific revision:

kubectl rollout undo deployment/nginx-deploy --to-revision=2

[root@k8s-master deployments]# kubectl rollout undo deployment/nginx-deploy --to-revision=2

deployment.apps/nginx-deploy rolled back

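Running `kubectl get deployment` and `kubectl describe deployment` again confirms that the Deployment is back on the chosen revision.

Set `.spec.revisionHistoryLimit` in `/opt/k8s/deployments/nginx-deploy.yaml` to control how many revisions a Deployment keeps. If it is set to 0, no history is kept and the Deployment can no longer be rolled back.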

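### 2.4 Scaling Up / Down

Scaling is triggered only when the configuration of the live Deployment object changes (for example through `kubectl scale` or `kubectl edit`).

Editing the local file `/opt/k8s/deployments/nginx-deploy.yaml` alone has no effect, because the cluster never sees that change unless the file is applied again.

Scaling only changes the number of Pods. The pod template is not modified, so no new ReplicaSet is created.

1 Command line

kubectl scale --replicas=6 deploy nginx-deploy

The general form is `kubectl scale --replicas=<count> <resource-type> <name>`.

2 Editing the live configuration

kubectl edit deploy nginx-deploy

Then change the value of `replicas`.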

[root@k8s-master deployments]# kubectl get deployment

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-deploy 3/3 3 3 101m

[root@k8s-master deployments]# kubectl scale --replicas=6 deploy nginx-deploy

deployment.apps/nginx-deploy scaled

[root@k8s-master deployments]# kubectl get deployment

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-deploy 6/6 6 6 101m

[root@k8s-master deployments]# kubectl scale --replicas=6 deploy nginx-deploy

deployment.apps/nginx-deploy scaled

[root@k8s-master deployments]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-deploy-754898b577-8ns26 1/1 Running 0 29m

nginx-deploy-754898b577-g9q9h 1/1 Running 0 29m

nginx-deploy-754898b577-ksfbw 1/1 Running 0 29m

nginx-deploy-754898b577-rwbxg 1/1 Running 0 2s

nginx-deploy-754898b577-xc88j 1/1 Running 0 2s

nginx-deploy-754898b577-xtmmc 1/1 Running 0 2s

[root@k8s-master deployments]# kubectl get rs

NAME DESIRED CURRENT READY AGE

nginx-deploy-754898b577 6 6 6 85m

nginx-deploy-78d8bf4fd7 0 0 0 106m

nginx-deploy-f7f5656c7 0 0 0 37m

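### 2.5 Pausing and Resuming a Rolling Update

Every change to the `pod template` triggers a Deployment rollout. If you make several changes in quick succession, each one starts its own rollout, even though only the final one is actually needed.

In that case, pause the Deployment's rollout first. No rolling update happens until you explicitly resume it.

`(Not tested hands-on; only the pause and resume commands are recorded here.)`

**Pausing a rolling update**:

kubectl rollout pause deploy <deploy_name>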

[root@k8s-master deployments]# kubectl rollout pause deploy nginx-deploy

deployment.apps/nginx-deploy paused

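Change some properties, for example limit the nginx container to at most 0.2 CPU cores and 128 MiB of memory, and request at least 0.1 CPU cores and 64 MiB of memory.

kubectl set resources deploy <deploy_name> -c <container_name> --limits=cpu=200m,memory=128Mi --requests=cpu=100m,memory=64Mi

Inspect the Deployment with `kubectl get deploy <name> -o yaml` to confirm that the configuration has changed, then run `kubectl get po` to confirm that the Pods have not been updated.

**Resuming a rolling update**:

kubectl rollout resume deploy <deploy_name>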

[root@k8s-master deployments]# kubectl rollout resume deploy nginx-deploy

deployment.apps/nginx-deploy resumed

After resuming, check the ReplicaSets and Pods again; the rolling update is now in progress.

kubectl get rs

kubectl get po

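### 2.6 Configuration File (nginx)

    apiVersion: apps/v1 # Deployment API version
    kind: Deployment # resource type is Deployment
    metadata: # metadata
      labels: # labels
        app: nginx-deploy # a key: value label
      name: nginx-deploy # name of the Deployment
      namespace: default # namespace it lives in
    spec:
      replicas: 3 # desired number of replicas
      revisionHistoryLimit: 10 # number of old revisions kept after rolling updates
      selector: # selector used to find the matching ReplicaSets and Pods
        matchLabels: # match by label
          app: nginx-deploy # label key/value to match
      strategy: # update strategy
        rollingUpdate: # rolling update settings
          maxSurge: 25% # how many Pods (count or percentage) may exist above the desired replica count during a rolling update
          maxUnavailable: 25% # how many Pods (count or percentage) of the desired replicas may be unavailable during a rolling update
        type: RollingUpdate # update type: rolling update
      template: # pod template
        metadata: # Pod metadata
          labels: # Pod labels
            app: nginx-deploy
        spec: # desired Pod state
          containers: # containers in the Pod
          - image: nginx:1.7.9 # image
            imagePullPolicy: IfNotPresent # image pull policy
            name: nginx # container name
          restartPolicy: Always # restart policy
          terminationGracePeriodSeconds: 30 # maximum grace period for termination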
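To make the two percentages in the configuration above concrete: when they are given as percentages, Kubernetes resolves `maxSurge` by rounding up and `maxUnavailable` by rounding down against the desired replica count. The following is a minimal Python sketch of that arithmetic only. The function name `rolling_update_bounds` is hypothetical and not part of any Kubernetes client, and edge cases handled by the real controller are ignored.

```python
def rolling_update_bounds(replicas, max_surge_pct, max_unavailable_pct):
    """Resolve percentage settings to absolute Pod counts (illustrative only)."""
    # maxSurge is rounded up: ceil(replicas * pct / 100) using integer math.
    max_surge = -((-replicas * max_surge_pct) // 100)
    # maxUnavailable is rounded down: floor(replicas * pct / 100).
    max_unavailable = (replicas * max_unavailable_pct) // 100
    return max_surge, max_unavailable


# replicas: 3 with 25% / 25%, as in the configuration file above.
print(rolling_update_bounds(3, 25, 25))  # prints: (1, 0)
# After scaling to 6 replicas.
print(rolling_update_bounds(6, 25, 25))  # prints: (2, 1)
```

With 3 replicas this gives one extra Pod and zero unavailable Pods, which is consistent with the earlier `kubectl get rs` output during the failed update: the new ReplicaSet held 1 Pod while the old one kept all 3.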

## 3 StatefulSet

### 3.1 Creating a StatefulSet

1. Create a directory for the `StatefulSet` files

mkdir /opt/k8s/statefulset/

2. In `/opt/k8s/statefulset/`, write the configuration file `web.yaml`

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
      labels:
        app: nginx
    spec:
      ports:
      - port: 80
        name: web
      clusterIP: None
      selector:
        app: nginx
    ---
    apiVersion: apps/v1
    kind: StatefulSet # resource of type StatefulSet
    metadata:
      name: web # name of the StatefulSet object
    spec:
      serviceName: "nginx" # the Service that manages DNS (the Service above, whose metadata.name is nginx)
      replicas: 2
      selector: # selector used to find the Pods managed by this StatefulSet
        matchLabels: # match by label
          app: nginx # label key/value to match
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:1.7.9
            ports: # ports exposed by the container
            - containerPort: 80 # port number exposed by the container
              name: web # name of this port

3. Create the StatefulSet application from the configuration file

kubectl create -f web.yaml

[root@k8s-master statefulset]# kubectl create -f web.yaml

service/nginx created

statefulset.apps/web created

4. View the created Service and StatefulSet

View the Service:

kubectl get service nginx

[root@k8s-master statefulset]# kubectl get service nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx ClusterIP None 80/TCP 7m49s

View the StatefulSet (short name `sts`):

kubectl get statefulset web

[root@k8s-master statefulset]# kubectl get statefulset web

NAME READY AGE

web 2/2 32s

5. View the created Pods

[root@k8s-master statefulset]# kubectl get po

NAME READY STATUS RESTARTS AGE

nginx-deploy-754898b577-8ns26 1/1 Running 0 5h14m

nginx-deploy-754898b577-g9q9h 1/1 Running 0 5h14m

nginx-deploy-754898b577-ksfbw 1/1 Running 0 5h14m

nginx-deploy-754898b577-rwbxg 1/1 Running 0 4h44m

nginx-deploy-754898b577-xc88j 1/1 Running 0 4h44m

nginx-deploy-754898b577-xtmmc 1/1 Running 0 4h44m

web-0 1/1 Running 0 2m25s

web-1 1/1 Running 0 2m23s

Filter by label to see only the StatefulSet's Pods; note that they are ordered:

kubectl get pods -l app=nginx

[root@k8s-master statefulset]# kubectl get pods -l app=nginx

NAME READY STATUS RESTARTS AGE

web-0 1/1 Running 0 4m10s

web-1 1/1 Running 0 4m8s

6. Test that the service is reachable (look up the DNS records of these Pods)

Run a new Pod from the busybox image and use its `nslookup` tool to inspect the DNS records:

kubectl run -i --tty --image busybox:1.28.4 dns-test /bin/sh

nslookup web-0.nginx

[root@k8s-master statefulset]# kubectl run -i --tty --image busybox:1.28.4 dns-test /bin/sh

If you don’t see a command prompt, try pressing enter.

/ # nslookup web-0.nginx

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: web-0.nginx

Address 1: 10.244.36.97 web-0.nginx.default.svc.cluster.local

/ #

/ # nslookup web-1.nginx

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: web-1.nginx

Address 1: 10.244.169.153 web-1.nginx.default.svc.cluster.local
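The names resolved above follow a fixed pattern: Pod name (StatefulSet name plus ordinal), then the governing Service name, the namespace, `svc`, and the cluster domain. A small Python sketch of that pattern is shown below; the helper name `statefulset_pod_fqdn` is hypothetical, and `cluster.local` is taken from the `nslookup` output above rather than assumed to hold for every cluster.

```python
def statefulset_pod_fqdn(sts_name, ordinal, service,
                         namespace="default", cluster_domain="cluster.local"):
    """Build the stable DNS name of one StatefulSet Pod (illustrative helper)."""
    pod_name = f"{sts_name}-{ordinal}"
    return f"{pod_name}.{service}.{namespace}.svc.{cluster_domain}"


for i in range(2):
    print(statefulset_pod_fqdn("web", i, "nginx"))
# prints:
# web-0.nginx.default.svc.cluster.local
# web-1.nginx.default.svc.cluster.local
```

The short form `web-0.nginx` used in the transcript works because the lookup runs from a Pod in the same namespace.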

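### 3.2 Scaling Up / Down

Scaling is triggered only when the `replicas` field of the live `StatefulSet` changes.

Changing any other field, or only editing the local file `/opt/k8s/statefulset/web.yaml`, does not trigger scaling.

#### 3.2.1 Scaling Up

From the command line:

kubectl scale statefulset web --replicas=5

By editing the live configuration (change the value of `spec.replicas`):

kubectl edit statefulset web

Replica counts before and after scaling up: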

[root@k8s-master ~]# kubectl get sts

NAME READY AGE

web 2/2 22h

[root@k8s-master ~]# kubectl scale statefulset web --replicas=5

statefulset.apps/web scaled

[root@k8s-master ~]# kubectl get sts

NAME READY AGE

web 5/5 22h

The scale-up in detail (web-2, web-3, and web-4 are created in order):

[root@k8s-master ~]# kubectl describe sts web

Name: web

Namespace: default

CreationTimestamp: Fri, 29 Dec 2023 22:30:25 +0800

Selector: app=nginx

Labels:

Annotations:

Replicas: 5 desired | 5 total

Update Strategy: RollingUpdate

Partition: 0

Pods Status: 5 Running / 0 Waiting / 0 Succeeded / 0 Failed

Pod Template:

Labels: app=nginx

Containers:

nginx:

Image: nginx:1.7.9

Port: 80/TCP

Host Port: 0/TCP

Environment:

Mounts:

Volumes:

Volume Claims:

Events:

Type Reason Age From Message

Normal SuccessfulCreate 107s statefulset-controller create Pod web-2 in StatefulSet web successful

Normal SuccessfulCreate 105s statefulset-controller create Pod web-3 in StatefulSet web successful

Normal SuccessfulCreate 103s statefulset-controller create Pod web-4 in StatefulSet web successful

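#### 3.2.2 Scaling Down

This works the same way as scaling up in 3.2.1. Here we scale down to 2 replicas and look at what changes.

kubectl scale statefulset web --replicas=2

Replica counts before and after scaling down: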

[root@k8s-master ~]# kubectl get sts

NAME READY AGE

web 5/5 22h

[root@k8s-master ~]# kubectl scale statefulset web --replicas=2

statefulset.apps/web scaled

[root@k8s-master ~]# kubectl get sts

NAME READY AGE

web 3/2 22h

[root@k8s-master ~]# kubectl get sts

NAME READY AGE

web 2/2 22h

The scale-down in detail (Pods are deleted from the highest ordinal downwards: web-4, then web-3, then web-2, leaving only web-0 and web-1):

[root@k8s-master ~]# kubectl describe sts web

Name: web

Namespace: default

CreationTimestamp: Fri, 29 Dec 2023 22:30:25 +0800

Selector: app=nginx

Labels:

Annotations:

Replicas: 2 desired | 2 total

Update Strategy: RollingUpdate

Partition: 0

Pods Status: 2 Running / 0 Waiting / 0 Succeeded / 0 Failed

Pod Template:

Labels: app=nginx

Containers:

nginx:

Image: nginx:1.7.9

Port: 80/TCP

Host Port: 0/TCP

Environment:

Mounts:

Volumes:

Volume Claims:

Events:

Type Reason Age From Message

Normal SuccessfulCreate 17m statefulset-controller create Pod web-2 in StatefulSet web successful

Normal SuccessfulCreate 17m statefulset-controller create Pod web-3 in StatefulSet web successful

Normal SuccessfulCreate 17m statefulset-controller create Pod web-4 in StatefulSet web successful

Normal SuccessfulDelete 12s statefulset-controller delete Pod web-4 in StatefulSet web successful

Normal SuccessfulDelete 10s statefulset-controller delete Pod web-3 in StatefulSet web successful

Normal SuccessfulDelete 9s statefulset-controller delete Pod web-2 in StatefulSet web successful
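The event order above (create in ascending ordinal order, delete in descending order) can be sketched in a few lines of Python. This is only a model of the ordering under the default `OrderedReady` pod management policy, which the configuration in this chapter does not override; `scale_plan` is a hypothetical helper, not a Kubernetes API.

```python
def scale_plan(sts_name, current, desired):
    """List the (action, pod) steps a StatefulSet takes when scaling (illustrative)."""
    if desired > current:
        # Scale up: new Pods are created from the lowest missing ordinal upwards.
        return [("create", f"{sts_name}-{i}") for i in range(current, desired)]
    # Scale down: Pods are removed from the highest ordinal downwards.
    return [("delete", f"{sts_name}-{i}") for i in range(current - 1, desired - 1, -1)]


print(scale_plan("web", 2, 5))
# prints: [('create', 'web-2'), ('create', 'web-3'), ('create', 'web-4')]
print(scale_plan("web", 5, 2))
# prints: [('delete', 'web-4'), ('delete', 'web-3'), ('delete', 'web-2')]
```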

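### 3.3 Updating the Image

An update is triggered only when a field inside `template` of the live `StatefulSet` changes.

Changing a field outside `template`, or only editing the local file `/opt/k8s/statefulset/web.yaml`, does not trigger an update.

The recommended way is to edit the live configuration. (After the scale-down in section 3.2.2 only `web-0` and `web-1` remain; now change the image in `template` from 1.7.9 to 1.9.1.)

kubectl edit statefulset web

Revision changes: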

[root@k8s-master ~]# kubectl rollout history sts web

statefulset.apps/web

REVISION CHANGE-CAUSE

1

[root@k8s-master ~]# kubectl rollout history sts web --revision=1

statefulset.apps/web with revision #1

Pod Template:

Labels: app=nginx

Containers:

nginx:

Image: nginx:1.7.9

Port: 80/TCP

Host Port: 0/TCP

Environment:

Mounts:

Volumes:

[root@k8s-master ~]# kubectl edit sts web

statefulset.apps/web edited

[root@k8s-master ~]# kubectl rollout history sts web

statefulset.apps/web

REVISION CHANGE-CAUSE

1

2

[root@k8s-master ~]# kubectl rollout history sts web --revision=2

statefulset.apps/web with revision #2

Pod Template:

Labels: app=nginx

Containers:

nginx:

Image: nginx:1.9.1

Port: 80/TCP

Host Port: 0/TCP

Environment:

Mounts:

Volumes:

The image update in detail (the last four events show that web-1 was deleted and recreated first, then web-0 was deleted and recreated):

[root@k8s-master ~]# kubectl describe sts web

Name: web

Namespace: default

CreationTimestamp: Fri, 29 Dec 2023 22:30:25 +0800

Selector: app=nginx

Labels:

Annotations:

Replicas: 2 desired | 2 total

Update Strategy: RollingUpdate

Partition: 0

Pods Status: 2 Running / 0 Waiting / 0 Succeeded / 0 Failed

Pod Template:

Labels: app=nginx

Containers:

nginx:

Image: nginx:1.9.1

Port: 80/TCP

Host Port: 0/TCP

Environment:

Mounts:

Volumes:

Volume Claims:

Events:

Type Reason Age From Message

Normal SuccessfulCreate 36m statefulset-controller create Pod web-2 in StatefulSet web successful

Normal SuccessfulCreate 36m statefulset-controller create Pod web-3 in StatefulSet web successful

Normal SuccessfulCreate 36m statefulset-controller create Pod web-4 in StatefulSet web successful

Normal SuccessfulDelete 19m statefulset-controller delete Pod web-4 in StatefulSet web successful

Normal SuccessfulDelete 19m statefulset-controller delete Pod web-3 in StatefulSet web successful

Normal SuccessfulDelete 19m statefulset-controller delete Pod web-2 in StatefulSet web successful

Normal SuccessfulDelete 7m11s statefulset-controller delete Pod web-1 in StatefulSet web successful

Normal SuccessfulCreate 7m10s (x2 over 22h) statefulset-controller create Pod web-1 in StatefulSet web successful

Normal SuccessfulDelete 7m8s statefulset-controller delete Pod web-0 in StatefulSet web successful

Normal SuccessfulCreate 7m6s (x2 over 22h) statefulset-controller create Pod web-0 in StatefulSet web successful

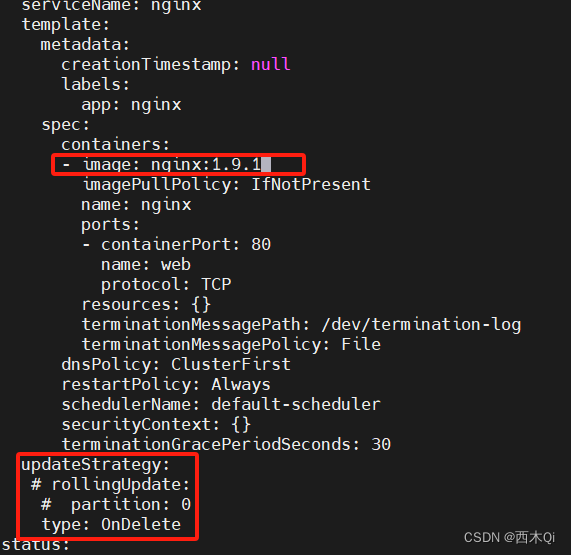

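#### 3.3.1 RollingUpdate

A StatefulSet can also use the rolling update strategy. As before, changing a field in `template` triggers the update, but because the Pods are ordered, **a StatefulSet updates its Pods in reverse ordinal order.**

#### 3.3.2 Canary Release

The `partition` field of `rollingUpdate` under `updateStrategy` gives you a simple canary release. The goal is to keep the impact of any problem that appears after a release as small as possible.

By controlling the value of `partition` you decide that only some of the Pods are updated. Once those look healthy, you gradually widen the set of updated Pods until all of them are updated.

    updateStrategy:
      rollingUpdate:
        partition: 0
      type: RollingUpdate

For example, with 5 Pods and `partition` set to 3, a rolling update touches only the Pods whose ordinal is >= 3 (again in reverse ordinal order).

Once the Pods with ordinal >= 3 are updated, lower `partition` to 2 or 1 to continue with the Pods whose ordinal is >= 2 or >= 1, stepping down until it reaches 0.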
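The partition rule described above can be expressed as a short Python sketch. It only models which Pods are eligible and in what order; `pods_at_or_above_partition` is a hypothetical name, and Pods that already run the new revision would simply be skipped by the real controller.

```python
def pods_at_or_above_partition(sts_name, replicas, partition):
    """Return Pods with ordinal >= partition, highest ordinal first (illustrative)."""
    return [f"{sts_name}-{i}" for i in range(replicas - 1, partition - 1, -1)]


print(pods_at_or_above_partition("web", 5, 3))  # prints: ['web-4', 'web-3']
print(pods_at_or_above_partition("web", 5, 1))  # prints: ['web-4', 'web-3', 'web-2', 'web-1']
print(pods_at_or_above_partition("web", 5, 0))  # prints: ['web-4', 'web-3', 'web-2', 'web-1', 'web-0']
```

Lowering `partition` step by step therefore widens the canary set from the highest ordinals towards `web-0`.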

**Steps**:

1. Scale the `web` StatefulSet to 5 replicas (web-0 through web-4 all run image 1.9.1):

kubectl scale statefulset web --replicas=5

2. Change `partition` under `updateStrategy.rollingUpdate` from 0 to 3, then change the image from 1.9.1 to 1.7.9:

kubectl edit statefulset web

3. Check each Pod's image. Only web-4 and web-3 changed from 1.9.1 to 1.7.9; web-2, web-1, and web-0 still run 1.9.1.

Check web-4 and web-3 (web-3 is shown here):

kubectl describe po web-4

kubectl describe po web-3

[root@k8s-master statefulset]# kubectl describe po web-3

Name: web-3

Namespace: default

Priority: 0

Node: k8s-node1/192.168.3.242

Start Time: Sun, 31 Dec 2023 09:39:49 +0800

Labels: app=nginx

controller-revision-hash=web-6c5c7fd59b

statefulset.kubernetes.io/pod-name=web-3

Annotations: cni.projectcalico.org/containerID: 3d2d85e0bfc230a058952778c01b1f32d6b780dbfb1186d108e24cc33e1da107

cni.projectcalico.org/podIP: 10.244.36.78/32

cni.projectcalico.org/podIPs: 10.244.36.78/32

Status: Running

IP: 10.244.36.78

IPs:

IP: 10.244.36.78

Controlled By: StatefulSet/web

Containers:

nginx:

Container ID: docker://a515f130287700a6dc9a5feb6fa180ea8b91d4eb47051aee5e731169c4b9f5e1

Image: nginx:1.7.9

Image ID: docker-pullable://nginx@sha256:e3456c851a152494c3e4ff5fcc26f240206abac0c9d794affb40e0714846c451

Port: 80/TCP

Host Port: 0/TCP

State: Running

Started: Sun, 31 Dec 2023 09:39:50 +0800

Ready: True

Restart Count: 0

Environment:

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-7cznh (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-7cznh:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors:

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

Normal Scheduled 112s default-scheduler Successfully assigned default/web-3 to k8s-node1

Normal Pulled 111s kubelet Container image “nginx:1.7.9” already present on machine

Normal Created 111s kubelet Created container nginx

Normal Started 111s kubelet Started container nginx

Check web-2, web-1, and web-0 (web-2 is shown here):

kubectl describe po web-2

kubectl describe po web-1

kubectl describe po web-0

[root@k8s-master statefulset]# kubectl describe po web-2

Name: web-2

Namespace: default

Priority: 0

Node: k8s-node1/192.168.3.242

Start Time: Sun, 31 Dec 2023 09:34:14 +0800

Labels: app=nginx

controller-revision-hash=web-6bc849cb6b

statefulset.kubernetes.io/pod-name=web-2

Annotations: cni.projectcalico.org/containerID: 0ba36375d747e1055a0215fc41520a3084622a995053af5055f083d08a37a547

cni.projectcalico.org/podIP: 10.244.36.76/32

cni.projectcalico.org/podIPs: 10.244.36.76/32

Status: Running

IP: 10.244.36.76

IPs:

IP: 10.244.36.76

Controlled By: StatefulSet/web

Containers:

nginx:

Container ID: docker://d14a9dedbbb33b86a45e7feb4717fb4b4b5def92507a7f0b92e601132634988f

Image: nginx:1.9.1

Image ID: docker-pullable://nginx@sha256:2f68b99bc0d6d25d0c56876b924ec20418544ff28e1fb89a4c27679a40da811b

Port: 80/TCP

Host Port: 0/TCP

State: Running

Started: Sun, 31 Dec 2023 09:34:15 +0800

Ready: True

Restart Count: 0

Environment:

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-544jm (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-544jm:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors:

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

Normal Scheduled 7m40s default-scheduler Successfully assigned default/web-2 to k8s-node1

Normal Pulled 7m39s kubelet Container image “nginx:1.9.1” already present on machine

Normal Created 7m39s kubelet Created container nginx

Normal Started 7m39s kubelet Started container nginx

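4. Next, change `partition` under `updateStrategy.rollingUpdate` from 3 to 1. The image change being rolled out is still 1.9.1 to 1.7.9.

5. Check each Pod's image. In addition to web-4 and web-3, web-2 and web-1 have now changed from 1.9.1 to 1.7.9; web-0 still runs 1.9.1.

Check web-2 and web-1 (web-1 is shown here):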

[root@k8s-master statefulset]# kubectl describe po web-1

Name: web-1

Namespace: default

Priority: 0

Node: k8s-node2/192.168.3.243

Start Time: Sun, 31 Dec 2023 09:50:11 +0800

Labels: app=nginx

controller-revision-hash=web-6c5c7fd59b

statefulset.kubernetes.io/pod-name=web-1

Annotations: cni.projectcalico.org/containerID: d386a2c85ea388ce70b9a98ccb64032d4364ad548a0d59bc751ca91ac33c6e9b

cni.projectcalico.org/podIP: 10.244.169.143/32

cni.projectcalico.org/podIPs: 10.244.169.143/32

Status: Running

IP: 10.244.169.143

IPs:

IP: 10.244.169.143

Controlled By: StatefulSet/web

Containers:

nginx:

Container ID: docker://a40879bd7a3d561a73e026ff039745f3587427c2725fcda649938be6adaeed2a

Image: nginx:1.7.9

Image ID: docker-pullable://nginx@sha256:e3456c851a152494c3e4ff5fcc26f240206abac0c9d794affb40e0714846c451

Port: 80/TCP

Host Port: 0/TCP

State: Running

Started: Sun, 31 Dec 2023 09:50:12 +0800

Ready: True

Restart Count: 0

Environment:

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-qrsdj (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-qrsdj:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors:

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

Normal Scheduled 17s default-scheduler Successfully assigned default/web-1 to k8s-node2

Normal Pulled 16s kubelet Container image “nginx:1.7.9” already present on machine

Normal Created 16s kubelet Created container nginx

Normal Started 16s kubelet Started container nginx

Check web-0:

[root@k8s-master statefulset]# kubectl describe po web-0

Name: web-0

Namespace: default

Priority: 0

Node: k8s-node2/192.168.3.243

Start Time: Sun, 31 Dec 2023 09:34:18 +0800

Labels: app=nginx

controller-revision-hash=web-6bc849cb6b

statefulset.kubernetes.io/pod-name=web-0

Annotations: cni.projectcalico.org/containerID: cac2f3c8afa1daf2b2d4805fe1c92356aa7c8f6fa5f6bd07acc4d3a50be7c41c

cni.projectcalico.org/podIP: 10.244.169.141/32

cni.projectcalico.org/podIPs: 10.244.169.141/32

Status: Running

IP: 10.244.169.141

IPs:

IP: 10.244.169.141

Controlled By: StatefulSet/web

Containers:

nginx:

Container ID: docker://536426425e1524fd3d71c318aaddd884867f7bb68d9ced331e587c9799f713b7

Image: nginx:1.9.1

Image ID: docker-pullable://nginx@sha256:2f68b99bc0d6d25d0c56876b924ec20418544ff28e1fb89a4c27679a40da811b

Port: 80/TCP

Host Port: 0/TCP

State: Running

Started: Sun, 31 Dec 2023 09:34:19 +0800

Ready: True

Restart Count: 0

Environment:

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-2pgwf (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-2pgwf:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors:

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

Normal Scheduled 16m default-scheduler Successfully assigned default/web-0 to k8s-node2

Normal Pulled 16m kubelet Container image “nginx:1.9.1” already present on machine

Normal Created 16m kubelet Created container nginx

Normal Started 16m kubelet Started container nginx

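6. Change `partition` under `updateStrategy.rollingUpdate` from 1 to 0 (the image change is still 1.9.1 to 1.7.9). web-0 is then updated as well, which completes the image update.

#### 3.3.3 OnDelete

With this strategy a Pod is updated only when it is deleted: after you delete a Pod, a new Pod with the same name is created from the current template, which brings it up to date.

This makes it possible to update just one specific Pod.

    updateStrategy:
      # rollingUpdate:
      #   partition: 0
      # type: RollingUpdate
      type: OnDelete

After the canary release in section 3.3.2, all images have changed from 1.9.1 to 1.7.9.

**Steps**:

1. Comment out the `rollingUpdate` settings under `updateStrategy`, change the strategy type from RollingUpdate to OnDelete, and then change the image from 1.7.9 to 1.9.1:

kubectl edit statefulset web

2. Inspect Pod web-4. Its image is still 1.7.9, and the Events list at the bottom shows no new activity.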

[root@k8s-master statefulset]# kubectl describe po web-4

Name: web-4

Namespace: default

Priority: 0

Node: k8s-node1/192.168.3.242

Start Time: Sun, 31 Dec 2023 09:39:47 +0800

Labels: app=nginx

controller-revision-hash=web-6c5c7fd59b

statefulset.kubernetes.io/pod-name=web-4

Annotations: cni.projectcalico.org/containerID: 5fea7938b6dabd02a07ece3afb77eb827c16bf96f0902ed2d3e84584b41b2b19

cni.projectcalico.org/podIP: 10.244.36.77/32

cni.projectcalico.org/podIPs: 10.244.36.77/32

Status: Running

IP: 10.244.36.77

IPs:

IP: 10.244.36.77

Controlled By: StatefulSet/web

Containers:

nginx:

Container ID: docker://d4ead41d6f391de3151bf5bb4b3498418184691c9fc824fda48d25aca8afb28d

Image: nginx:1.7.9

Image ID: docker-pullable://nginx@sha256:e3456c851a152494c3e4ff5fcc26f240206abac0c9d794affb40e0714846c451

Port: 80/TCP

Host Port: 0/TCP

State: Running

Started: Sun, 31 Dec 2023 09:39:48 +0800

Ready: True

Restart Count: 0

Environment:

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-clh6d (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-clh6d:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors:

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

Normal Scheduled 25m default-scheduler Successfully assigned default/web-4 to k8s-node1

Normal Pulled 25m kubelet Container image “nginx:1.7.9” already present on machine

Normal Created 25m kubelet Created container nginx

Normal Started 25m kubelet Started container nginx

3. Delete Pod web-4

kubectl delete po web-4

[root@k8s-master statefulset]# kubectl delete po web-4

pod “web-4” deleted

4. Inspect Pod web-4 again. Its image is now 1.9.1, and the Events list at the bottom shows that the change happened about 18 seconds ago.

[root@k8s-master statefulset]# kubectl describe po web-4

Name: web-4

Namespace: default

Priority: 0

Node: k8s-node1/192.168.3.242

Start Time: Sun, 31 Dec 2023 10:08:53 +0800

Labels: app=nginx

controller-revision-hash=web-6bc849cb6b

statefulset.kubernetes.io/pod-name=web-4

Annotations: cni.projectcalico.org/containerID: 638ae0252ecff158173d47826483023b157695ef5d35bce8db2c775e9b4c4a02

cni.projectcalico.org/podIP: 10.244.36.79/32

cni.projectcalico.org/podIPs: 10.244.36.79/32

Status: Running

IP: 10.244.36.79

IPs:

IP: 10.244.36.79

Controlled By: StatefulSet/web

Containers:

nginx:

Container ID: docker://a038da90c25fa18096ba833a0a51a65a27576c552609c3e72c67b397133513fc

Image: nginx:1.9.1

Image ID: docker-pullable://nginx@sha256:2f68b99bc0d6d25d0c56876b924ec20418544ff28e1fb89a4c27679a40da811b

Port: 80/TCP

Host Port: 0/TCP

State: Running

Started: Sun, 31 Dec 2023 10:08:54 +0800

Ready: True

Restart Count: 0

Environment:

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-ccnbf (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-ccnbf:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors:

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

Normal Scheduled 19s default-scheduler Successfully assigned default/web-4 to k8s-node1

Normal Pulled 18s kubelet Container image “nginx:1.9.1” already present on machine

Normal Created 18s kubelet Created container nginx

Normal Started 18s kubelet Started container nginx

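5. Delete web-3, web-2, web-1, and web-0 in turn to update the image on the remaining Pods.

### 3.4 Deleting a StatefulSet and Its Associated Resources

A StatefulSet is associated with a Service, PVCs, and Pods; there is no ReplicaSet (RS) in between.

**Cascading delete**:

By default, deleting a StatefulSet also deletes its Pods (a cascading delete), but the PVCs and the Service are not deleted.

kubectl delete statefulset web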

[root@k8s-master statefulset]# kubectl delete sts web

statefulset.apps “web” deleted

[root@k8s-master statefulset]# kubectl get sts

No resources found in default namespace.

[root@k8s-master statefulset]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 443/TCP 3d17h

nginx ClusterIP None 80/TCP 87m

[root@k8s-master statefulset]# kubectl get po

NAME READY STATUS RESTARTS AGE

dns-test 1/1 Running 1 (85m ago) 86m

[root@k8s-master statefulset]# kubectl get pvc

No resources found in default namespace.

---

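**Non-cascading delete**: with `--cascade=orphan`, deleting a StatefulSet removes only the StatefulSet itself; its Pods are left running, and the PVCs and the Service are not deleted either.

After a non-cascading delete nothing manages the Pods any more, so a Pod that is deleted afterwards is not recreated.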

kubectl delete sts web --cascade=orphan

[root@k8s-master statefulset]# kubectl delete sts web --cascade=false

warning: --cascade=false is deprecated (boolean value) and can be replaced with --cascade=orphan.

statefulset.apps “web” deleted

[root@k8s-master statefulset]# kubectl get sts

No resources found in default namespace.

[root@k8s-master statefulset]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 443/TCP 3d17h

nginx ClusterIP None 80/TCP 2m42s

[root@k8s-master statefulset]# kubectl get pod

NAME READY STATUS RESTARTS AGE

dns-test 1/1 Running 1 (92m ago) 93m

web-0 1/1 Running 0 2m50s

web-1 1/1 Running 0 2m48s

[root@k8s-master statefulset]# kubectl get pvc

No resources found in default namespace.

Delete the Pods:

[root@k8s-master statefulset]# kubectl get po

NAME READY STATUS RESTARTS AGE

dns-test 1/1 Running 1 (95m ago) 96m

web-0 1/1 Running 0 6m22s

web-1 1/1 Running 0 6m20s

[root@k8s-master statefulset]# kubectl delete po web-0 web-1

pod “web-0” deleted

pod “web-1” deleted

[root@k8s-master statefulset]# kubectl get po

NAME READY STATUS RESTARTS AGE

dns-test 1/1 Running 1 (96m ago) 97m

Delete the Service:

[root@k8s-master statefulset]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 443/TCP 3d17h

nginx ClusterIP None 80/TCP 7m53s

[root@k8s-master statefulset]# kubectl delete svc nginx

service “nginx” deleted

[root@k8s-master statefulset]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 443/TCP 3d17h

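### 3.5 Deleting the PVCs Associated with a StatefulSet

This step applies only if the StatefulSet has associated PVCs.

PVCs remain after the StatefulSet is deleted. If the data is no longer needed, delete them as well: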

$ kubectl delete pvc www-web-0 www-web-1
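The PVC names in the command above follow the pattern claim template name, StatefulSet name, then Pod ordinal. The Python sketch below reproduces that pattern for the `www` claim template that appears in the 3.6 configuration file; `pvc_names` is a hypothetical helper used only for illustration.

```python
def pvc_names(claim_template, sts_name, replicas):
    """List the PVC names created from one volumeClaimTemplate (illustrative)."""
    return [f"{claim_template}-{sts_name}-{i}" for i in range(replicas)]


print(pvc_names("www", "web", 2))  # prints: ['www-web-0', 'www-web-1']
```

Running `kubectl get pvc` first, as done in section 3.4, remains the reliable way to see which claims actually exist before deleting anything.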

### 3.6 Configuration File (based on the one used in 3.1)

**Note**: the `---` line in the file is a YAML document separator. It allows several resources (here a Service and a StatefulSet) to be defined in a single `yaml` file.

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
      labels:
        app: nginx
    spec:
      ports:
      - port: 80
        name: web
      clusterIP: None
      selector:
        app: nginx
    ---
    apiVersion: apps/v1
    kind: StatefulSet # resource of type StatefulSet
    metadata:
      name: web # name of the StatefulSet object
    spec:
      serviceName: "nginx" # the Service that manages DNS (the Service above, whose metadata.name is nginx)
      replicas: 2
      selector: # selector used to find the Pods managed by this StatefulSet
        matchLabels: # match by label
          app: nginx # label key/value to match
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:1.7.9
            ports: # ports exposed by the container
            - containerPort: 80 # port number exposed by the container
              name: web # name of this port
            volumeMounts: # volumes to mount
            - name: www # which volume to mount
              mountPath: /usr/share/nginx/html # directory inside the container to mount it at
      volumeClaimTemplates: # volume claim templates
      - metadata: # claim metadata
          name: www # name of the volume
          annotations: # annotations on the claim
            volume.alpha.kubernetes.io/storage-class: anything
        spec: # claim spec
          accessModes: [ "ReadWriteOnce" ] # access mode
          resources:
            requests:
              storage: 1Gi # amount of storage requested

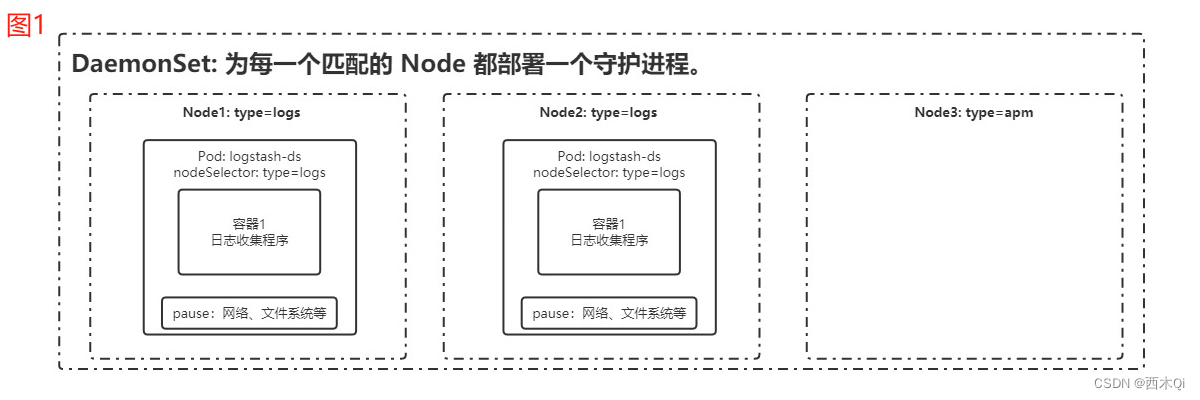

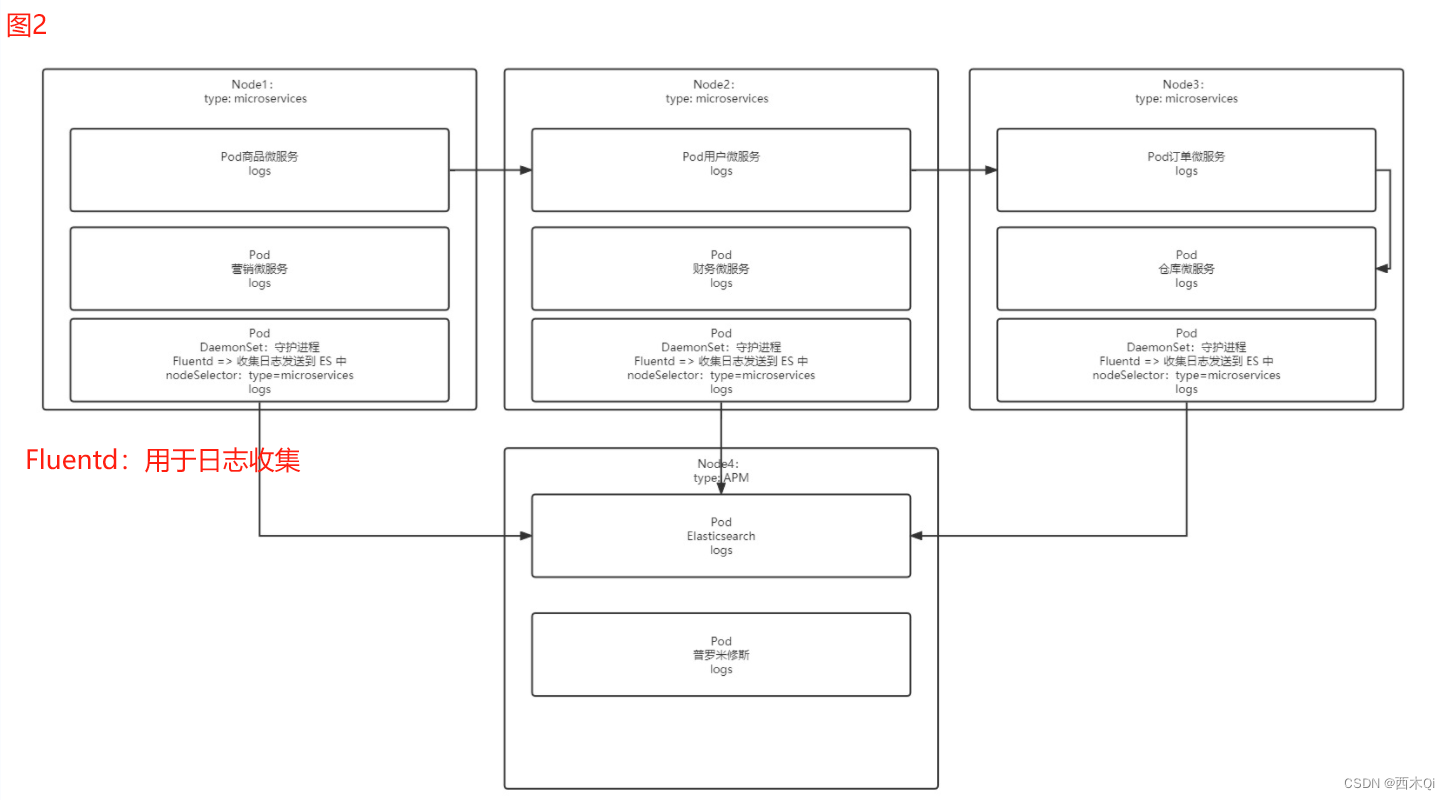

## 4 DaemonSet

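Based on the `Node` labels that a `DaemonSet` is bound to, it automatically deploys one daemon `Pod` on every matching `Node`.

If new nodes are added later, any node whose labels match the `Node` labels bound to the `DaemonSet` automatically gets a daemon `Pod` as well.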

---

**Example diagram**: (collecting the logs produced by Node1, Node2, and Node3)

### 4.1 Configuration File

    apiVersion: apps/v1
    kind: DaemonSet # create a DaemonSet resource
    metadata:
      name: fluentd # name of the DaemonSet resource
    spec:
      selector:
        matchLabels:
          app: logging # must match template.metadata.labels.app below
      template:
        metadata:
          labels:
            app: logging
            id: fluentd
          name: fluentd # name of the Pod
        spec:
          containers:
          - name: fluentd-es # name of the container
            # image: k8s.gcr.io/fluentd-elasticsearch:v1.3.0 # image used by the container
            image: agilestacks/fluentd-elasticsearch:v1.3.0 # image used by the container
            env: # environment variables
            - name: FLUENTD_ARGS # environment variable key
              value: -qq # environment variable value
            volumeMounts: # mount volumes so that data is not lost
            - name: containers # volume name
              mountPath: /var/lib/docker/containers # directory inside the container to mount the volume at
            - name: varlog
              mountPath: /varlog
          volumes: # volume definitions
          - hostPath: # volume type: host path, i.e. a directory shared with the node
              path: /var/lib/docker/containers # shared directory on the node (the host directory is mounted into the container; if it does not exist on the host it is created automatically)
            name: containers # name of this volume
          - hostPath:
              path: /var/log
            name: varlog

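### 4.2 Creating the DaemonSet

1. Create a directory for the DaemonSet

mkdir -p /opt/k8s/daemonset/

2. In `/opt/k8s/daemonset/`, write the configuration file `fluentd-ds.yaml` (taken from the 4.1 configuration file; no `node` binding is specified)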

3. Create the DaemonSet from the configuration file

kubectl create -f fluentd-ds.yaml

[root@k8s-master daemonset]# kubectl create -f fluentd-ds.yaml

daemonset.apps/fluentd created

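4. Inspect the DaemonSet that was created

`READY` for the `DaemonSet` is 0. Looking at the `Pod`s shows them stuck in `ContainerCreating` or `ImagePullBackOff`. In both cases the cause is that the image cannot be pulled, mainly because of a slow network connection; the workaround is to pull the image separately with the Docker command. (The pull never succeeded here, so this step is skipped for now.)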

kubectl get daemonset

kubectl get ds

    [root@k8s-master daemonset]# kubectl get daemonset
    NAME      DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
    fluentd   2         2         0       2            0           <none>          20s

    [root@k8s-master daemonset]# kubectl get ds
    NAME      DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
    fluentd   2         2         0       2            0           <none>          22s

    [root@k8s-master daemonset]# kubectl get po
    NAME            READY   STATUS              RESTARTS      AGE
    dns-test        1/1     Running             1 (9h ago)    9h
    fluentd-96ms8   0/1     ContainerCreating   0             5m21s
    fluentd-vbttv   0/1     ImagePullBackOff    0             5m21s
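With only a few Pods the failing ones are easy to spot by eye, but the same check can be scripted. The sketch below filters `kubectl get po` output for Pods whose `STATUS` is not `Running`; `not_running` is a hypothetical helper name, and the sample input is copied from the output above so the snippet runs without a cluster.

```shell
# not_running
# Reads `kubectl get po` output on stdin and prints "<pod> <status>"
# for every Pod whose STATUS column is not Running.
not_running() {
  awk 'NR > 1 && $3 != "Running" { print $1, $3 }'
}

# Sample input taken from this section; on a live cluster use:
#   kubectl get po | not_running
not_running <<'EOF'
NAME            READY   STATUS              RESTARTS      AGE
dns-test        1/1     Running             1 (9h ago)    9h
fluentd-96ms8   0/1     ContainerCreating   0             5m21s
fluentd-vbttv   0/1     ImagePullBackOff    0             5m21s
EOF
```

Note that this simple filter also reports Pods in states such as `Completed`, which are not necessarily errors.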

    [root@k8s-master daemonset]# kubectl describe po fluentd-vbttv
    Name:         fluentd-vbttv
    Namespace:    default
    Priority:     0
    Node:         k8s-node1/192.168.3.242
    Start Time:   Sun, 31 Dec 2023 18:29:32 +0800
    Labels:       app=logging
                  controller-revision-hash=b96747bc7
                  id=fluentd
                  pod-template-generation=1
    Annotations:  cni.projectcalico.org/containerID: 7df8b185447eedde818ee0acdebceb1cf02fe85d6b17a409f2749239a010ba93
                  cni.projectcalico.org/podIP: 10.244.36.83/32
                  cni.projectcalico.org/podIPs: 10.244.36.83/32
    Status:       Pending
    IP:           10.244.36.83
    IPs:
      IP:           10.244.36.83
    Controlled By:  DaemonSet/fluentd
    Containers:
      fluentd-es:
        Container ID:
        Image:          agilestacks/fluentd-elasticsearch:v1.3.0
        Image ID:
        Port:           <none>
        Host Port:      <none>
        State:          Waiting
          Reason:       ImagePullBackOff
        Ready:          False
        Restart Count:  0
        Environment:
          FLUENTD_ARGS:  -qq
        Mounts:
          /var/lib/docker/containers from containers (rw)
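The workaround mentioned earlier, pulling the image separately with Docker, has to happen on the node the Pod was scheduled to (`k8s-node1` in the output above), and it only helps if that node's container runtime is Docker, which is an assumption here. As a small sketch, the hypothetical helper `pull_commands` reads `kubectl describe po` output and prints the matching `docker pull` command; the sample input is an excerpt of the output above.

```shell
# pull_commands
# Reads `kubectl describe po` output on stdin and prints one
# `docker pull <image>` line per distinct container image.
pull_commands() {
  awk '$1 == "Image:" && !seen[$2]++ { print "docker pull " $2 }'
}

# Excerpt of the describe output; on a live cluster use:
#   kubectl describe po fluentd-vbttv | pull_commands
pull_commands <<'EOF'
Containers:
  fluentd-es:
    Container ID:
    Image:          agilestacks/fluentd-elasticsearch:v1.3.0
    Image ID:
    State:          Waiting
      Reason:       ImagePullBackOff
EOF
```

Run the printed command on the affected node; once the image is present locally, the kubelet's next retry should be able to start the container.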
