Deploying a k8s Cluster and the Kuboard Visual Dashboard


I. Prepare the environment

1. Prepare three servers

| Hostname | Address | Role | Spec |
|---|---|---|---|
| k8s-master | 10.36.192.181 | Master node | 2 cores, 4 GB RAM |
| k8s-node1 | 10.36.192.182 | Worker node | 1 core, 2 GB RAM |
| k8s-node2 | 10.36.192.184 | Worker node | 1 core, 2 GB RAM |

2. Set up hostname resolution [cluster]

cat >> /etc/hosts <<EOF
10.36.192.181 k8s-master
10.36.192.182 k8s-node1
10.36.192.184 k8s-node2
EOF

3. Synchronize time [cluster]

yum -y install ntpdate

ntpdate ntp.aliyun.com

hwclock --systohc

4. Disable the firewall and SELinux [cluster]

systemctl stop firewalld && systemctl disable firewalld

setenforce 0 && sed -i 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

5. Configure a static IP [cluster]

6. Disable the swap partition [cluster]

# swapoff -a

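Edit /etc/fstab to comment out the automatic swap mount so that swap stays off after a reboot, then confirm with free -m that swap is disabled.

Comment out the swap entry and check the result: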

# sed -i 's/.*swap.*/#&/' /etc/fstab

# free -m

total used free shared buff/cache available

Mem: 3935 144 3415 8 375 3518

Swap: 0 0 0
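The same check can be scripted instead of reading the free -m table by eye. The following is a minimal sketch, assuming the usual Linux /proc/swaps layout (one header line, then one line per active swap device) and the standard fstab column order. The function names swap_is_off and uncommented_swap_entries, and their optional file arguments, are made up for this illustration.

```shell
# Succeed when no swap device is active.
# Reads /proc/swaps by default; the optional argument only exists so the
# function can be tried against a sample file.
swap_is_off() {
    local swaps_file="${1:-/proc/swaps}"
    local active
    # The first line is a header; every further non-empty line is a device.
    active=$(awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file")
    [ "$active" -eq 0 ]
}

# Print how many fstab entries of type "swap" are still uncommented.
uncommented_swap_entries() {
    local fstab="${1:-/etc/fstab}"
    awk '$1 !~ /^#/ && $3 == "swap" { n++ } END { print n + 0 }' "$fstab"
}
```

On a node, running `swap_is_off && echo "swap is off"` followed by `uncommented_swap_entries` should report that swap is off and print 0 once both commands above have been applied.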

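7. Note:

Steps tagged [cluster] run on all three machines, [master] steps run only on the master node, and [node] steps run only on the worker nodes.

II. Deploy Docker [cluster]

1. Configure the Alibaba Cloud Docker yum repository

# yum install -y yum-utils device-mapper-persistent-data lvm2 git

# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

2. Install the latest version

yum install docker-ce -y

3. List the available Docker versions:

yum list docker-ce --showduplicates

4. Start the Docker service: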

systemctl enable docker

systemctl start docker

5. Check the Docker version:

# docker -v

Docker version 1.13.1, build 8633870/1.13.1

6. Configure Docker for production use

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://pilvpemn.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2"
}
EOF

sudo systemctl daemon-reload

sudo systemctl restart docker
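One way encoding problems get into daemon.json is pasting from a web page, which can bring in curly quotes or other non-ASCII characters that are not valid JSON syntax. The sketch below counts such bytes so the file can be checked before Docker is restarted. It assumes the file is meant to be plain ASCII, which holds for the configuration above. The function name non_ascii_bytes is made up for this illustration.

```shell
# Print the number of bytes in a file that are neither printable ASCII
# nor tab, line feed or carriage return. 0 means the file is clean.
non_ascii_bytes() {
    # tr deletes every allowed byte (given as octal codes); wc counts the rest.
    LC_ALL=C tr -d '\11\12\15\40-\176' < "$1" | wc -c | tr -d ' '
}
```

For example, `non_ascii_bytes /etc/docker/daemon.json` should print 0. Any other value points at characters worth retyping by hand.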

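# Note: watch out for encoding problems in daemon.json. If Docker fails to start, journalctl -amu docker shows the error.

III. Deploy the k8s cluster [cluster]

1. Configure the Alibaba Cloud k8s repository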

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

2. Install the packages

1. Install dependencies and common utilities

# yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git iproute lrzsz bash-completion tree bridge-utils unzip bind-utils gcc

2. Install the matching versions

# yum install -y kubelet-1.22.0-0.x86_64 kubeadm-1.22.0-0.x86_64 kubectl-1.22.0-0.x86_64

3. Load the ipvs-related kernel modules

cat <<EOF > /etc/modules-load.d/ipvs.conf
ip_vs
ip_vs_lc
ip_vs_wlc
ip_vs_rr
ip_vs_wrr
ip_vs_lblc
ip_vs_lblcr
ip_vs_dh
ip_vs_sh
ip_vs_nq
ip_vs_sed
ip_vs_ftp
nf_conntrack_ipv4
ip_tables
ip_set
xt_set
ipt_set
ipt_rpfilter
ipt_REJECT
ipip
EOF
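Files under /etc/modules-load.d are read by systemd-modules-load at boot, so writing ipvs.conf does not by itself load anything into the running kernel. The sketch below loads the listed modules immediately, so that the later lsmod | grep ip_vs check has something to show without a reboot. The function names list_modules and load_modules are made up for this illustration, and load_modules must run as root.

```shell
# Print the module names from a modules-load.d style file, skipping blank
# lines, comment lines (starting with # or ;) and repeated names.
list_modules() {
    awk 'NF > 0 && $1 !~ /^[#;]/ && !seen[$1]++ { print $1 }' "$1"
}

# Try to load every listed module and report the ones that fail.
# modprobe exits non-zero for modules the running kernel does not provide.
load_modules() {
    local conf="${1:-/etc/modules-load.d/ipvs.conf}" mod
    for mod in $(list_modules "$conf"); do
        modprobe -- "$mod" || echo "could not load: $mod" >&2
    done
}
```

Calling `load_modules` with no argument uses the file written above. A module reported as failing is not necessarily a problem, since module names vary between kernel versions.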

4. Configure the forwarding-related kernel parameters; skipping this may cause errors later

cat <<EOF > /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-iptables=1
net.bridge.bridge-nf-call-ip6tables=1
net.ipv4.ip_forward=1
net.ipv4.tcp_tw_recycle=0
vm.swappiness=0
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_instances=8192
fs.inotify.max_user_watches=1048576
fs.file-max=52706963
fs.nr_open=52706963
net.ipv6.conf.all.disable_ipv6=1
net.netfilter.nf_conntrack_max=2310720
EOF

5. Apply the settings

sysctl --system

6. If net.bridge.bridge-nf-call-iptables reports an error, load the br_netfilter module

# modprobe br_netfilter

# modprobe ip_conntrack

# sysctl -p /etc/sysctl.d/k8s.conf

7. Check that the modules are loaded

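lsmod | grep ip_vs

8. Configure and start kubelet [cluster]

1. Set the variable:

# DOCKER_CGROUPS=`docker info |grep 'Cgroup' | awk ' NR==1 {print $3}'`

# echo $DOCKER_CGROUPS

cgroupfs

(Sample output from a host on Docker's default driver. With the daemon.json from section II, step 6 in place, this should print systemd.)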
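The grep and awk pipeline above depends on the Cgroup Driver line being the first line of docker info that contains "Cgroup". A slightly more explicit variant matches the full label instead. This is a sketch that assumes docker info prints a line of the form "Cgroup Driver: <name>". The function name cgroup_driver_from_info is made up for this illustration.

```shell
# Read `docker info` output on stdin and print the cgroup driver name.
# Splits each line on ": " and only accepts the line whose label ends in
# "Cgroup Driver", so other Cgroup-related lines cannot be picked up.
cgroup_driver_from_info() {
    awk -F': *' '$1 ~ /Cgroup Driver$/ { print $2; exit }'
}

# Usage on a host with Docker running:
#   DOCKER_CGROUPS=$(docker info 2>/dev/null | cgroup_driver_from_info)
```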

2. Configure the kubelet cgroup driver

cat >/etc/sysconfig/kubelet<<EOF
KUBELET_EXTRA_ARGS="--cgroup-driver=$DOCKER_CGROUPS --pod-infra-container-image=k8s.gcr.io/pause:3.5"
EOF

9. Start kubelet

systemctl daemon-reload

systemctl enable kubelet && systemctl restart kubelet

Note:

If you run systemctl status kubelet at this point, it reports errors like these:

Oct 11 00:26:43 node1 systemd[1]: kubelet.service: main process exited, code=exited, status=255/n/a

Oct 11 00:26:43 node1 systemd[1]: Unit kubelet.service entered failed state.

Oct 11 00:26:43 node1 systemd[1]: kubelet.service failed.

# This error resolves itself once kubeadm init has generated the CA certificates, so it can be ignored for now.

# In short, kubelet keeps restarting until kubeadm init has run.

10. Configure the master node [master]

kubeadm init --kubernetes-version=v1.22.0 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.96.10

Note: save the kubeadm join 192.168.96.10:6443 --token ... command that this prints; the worker nodes need it to join the cluster.

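Notes:

--apiserver-advertise-address=192.168.96.10: the master's IP address. The commands and sample output in this step use 192.168.96.10; substitute your own master's address (10.36.192.181 in the server table above).

--kubernetes-version=v1.22.0: change this to match the version you installed.

If the command fails and prints a version hint, a newer version has been released.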

[init] Using Kubernetes version: v1.22.0

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 18.03.0-ce. Latest validated version: 18.09

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kub-k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.96.10]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [kub-k8s-master localhost] and IPs [192.168.96.10 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [kub-k8s-master localhost] and IPs [192.168.96.10 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 24.575209 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.16" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node kub-k8s-master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node kub-k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 93erio.hbn2ti6z50he0lqs

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.96.10:6443 --token 93erio.hbn2ti6z50he0lqs \
    --discovery-token-ca-cert-hash sha256:3bc60f06a19bd09f38f3e05e5cff4299011b7110ca3281796668f4edb29a56d9 # save this command

11. Configure kubectl

rm -rf $HOME/.kube

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

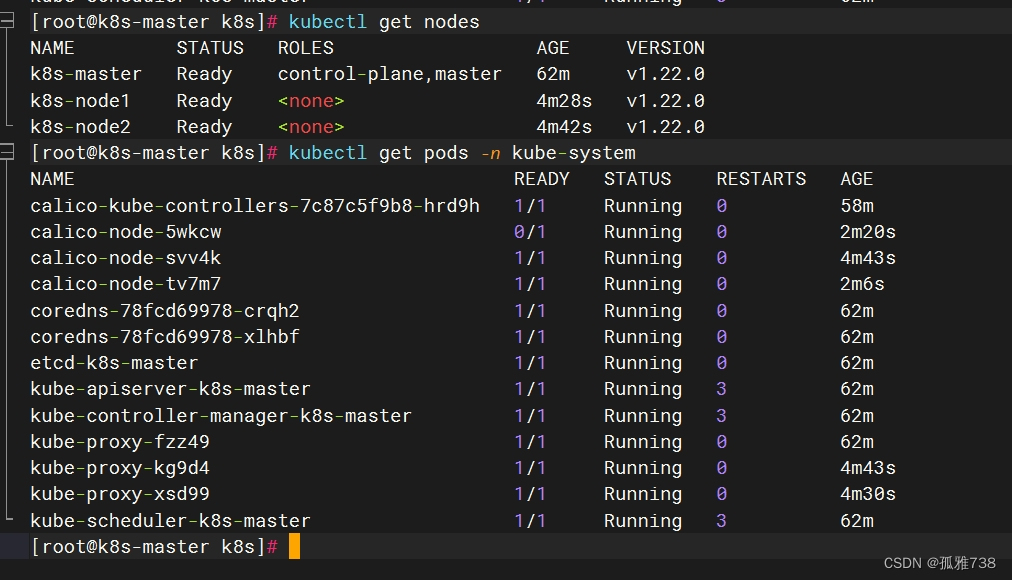

chown $(id -u):$(id -g) $HOME/.kube/config

12. List the nodes

kubectl get nodes

NAME STATUS ROLES AGE VERSION

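k8s-master NotReady master 2m41s v1.22.0

13. Configure the network plugin [master]

The node stays NotReady until a pod network add-on is running. After installing one (the output below comes from a cluster running Calico), check that its pods are up: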

kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-6d9cdcd744-8jt5g 1/1 Running 0 6m50s

kube-system calico-node-rkz4s 1/1 Running 0 6m50s

kube-system coredns-74ff55c5b-bcfzg 1/1 Running 0 52m

kube-system coredns-74ff55c5b-qxl6z 1/1 Running 0 52m

kube-system etcd-kub-k8s-master 1/1 Running 0 53m

kube-system kube-apiserver-kub-k8s-master 1/1 Running 0 53m

kube-system kube-controller-manager-kub-k8s-master 1/1 Running 0 53m

kube-system kube-proxy-gfhkf 1/1 Running 0 52m

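kube-system kube-scheduler-kub-k8s-master 1/1 Running 0 53m

14. Join the worker nodes to the cluster [node]

Join each worker node to the cluster.

If the join reports an error, enable IP forwarding first:

# sysctl -w net.ipv4.ip_forward=1

Run the following on every worker node. It is the command printed when the master was initialized successfully:

# kubeadm join 192.168.96.10:6443 --token 93erio.hbn2ti6z50he0lqs \
    --discovery-token-ca-cert-hash sha256:3bc60f06a19bd09f38f3e05e5cff4299011b7110ca3281796668f4edb29a56d9

15. Follow-up checks [master]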

Checks:

1. List the pods:

[root@kub-k8s-master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-5644d7b6d9-sm8hs 1/1 Running 0 39m

coredns-5644d7b6d9-vddll 1/1 Running 0 39m

etcd-kub-k8s-master 1/1 Running 0 37m

kube-apiserver-kub-k8s-master 1/1 Running 0 38m

kube-controller-manager-kub-k8s-master 1/1 Running 0 38m

kube-flannel-ds-amd64-9wgd8 1/1 Running 0 38m

kube-flannel-ds-amd64-lffc8 1/1 Running 0 2m11s

kube-flannel-ds-amd64-m8kk2 1/1 Running 0 2m2s

kube-proxy-dwq9l 1/1 Running 0 2m2s

kube-proxy-l77lz 1/1 Running 0 2m11s

kube-proxy-sgphs 1/1 Running 0 39m

kube-scheduler-kub-k8s-master 1/1 Running 0 37m

2. List the nodes:

[root@kub-k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

kub-k8s-master Ready master 43m v1.22.0

kub-k8s-node1 Ready <none> 6m46s v1.22.0

kub-k8s-node2 Ready <none> 6m37s v1.22.0
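When the check is repeated often, for example while waiting for the workers to come up, it can help to print only the nodes that still need attention. The sketch below filters the table printed by kubectl get nodes. The function name not_ready_nodes is made up for this illustration, and it assumes the default column layout shown above (NAME first, STATUS second).

```shell
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS column is not exactly "Ready". A cordoned node shows
# "Ready,SchedulingDisabled" and is therefore listed as well.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage on the master:
#   kubectl get nodes | not_ready_nodes
```

An empty result means every node reports Ready.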

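The cluster setup is now complete.

Note: you may run into an error at this point.

Fix:

If kubectl get pods -A shows the calico and coredns pods not coming up (0/1), the cause is that the host has several network interfaces and Calico picked the wrong one. Run:

kubectl edit daemonset calico-node -n kube-system

and add the following to the env list of the calico-node container:

- name: IP_AUTODETECTION_METHOD
  value: "interface=ens*"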
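The same setting can also be applied without opening an editor, using kubectl set env, which updates the environment in the daemonset's pod template. This is a sketch, not the only way to do it. The function name set_calico_interface is made up for this illustration, and the pattern passed in is an assumption about your hosts: Calico treats the value after interface= as a regular expression, so it has to match the interface that carries node traffic.

```shell
# Set Calico's IP autodetection method to a given interface pattern.
# $1 is the interface regex, for example 'ens.*' or 'eth0'.
set_calico_interface() {
    local pattern="${1:?usage: set_calico_interface <interface-regex>}"
    kubectl set env daemonset/calico-node -n kube-system \
        "IP_AUTODETECTION_METHOD=interface=${pattern}"
}

# Usage on the master:
#   set_calico_interface 'ens.*'
```

Afterwards, kubectl get pods -n kube-system shows whether the calico-node pods have been recreated and reach 1/1.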

IV. Deploy the Kuboard visual dashboard [master]

1. Deploy

kubectl apply -f https://addons.kuboard.cn/kuboard/kuboard-v3.yaml

[root@kube-master ~]# kubectl get pod -n kuboard

NAME READY STATUS RESTARTS AGE

kuboard-agent-2-5c54dcb98f-4vqvc 1/1 Running 24 (7m50s ago) 16d

kuboard-agent-747b97fdb7-j42wr 1/1 Running 24 (7m34s ago) 16d

kuboard-etcd-ccdxk 1/1 Running 16 (8m58s ago) 16d

kuboard-etcd-k586q 1/1 Running 16 (8m53s ago) 16d

kuboard-questdb-bd65d6b96-rgx4x 1/1 Running 10 (8m53s ago) 16d

kuboard-v3-5fc46b5557-zwnsf 1/1 Running 12 (8m53s ago) 16d

[root@kube-master ~]# kubectl get svc -n kuboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kuboard-v3 NodePort 10.36.192.181 <none> 80:30080/TCP,10081:30081/TCP,10081:30081/UDP 16d
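In the PORT(S) column each entry reads servicePort:nodePort/protocol, so the web UI on service port 80 is exposed on node port 30080 of every node. The sketch below extracts the node port for a given service port from such a string. The function name node_port_for is made up for this illustration.

```shell
# Print the node port mapped to a service port.
# $1: PORT(S) string as printed by `kubectl get svc`
# $2: service port to look up
node_port_for() {
    local ports="$1" svc_port="$2"
    # One mapping per line, then split "80:30080/TCP" on ':' and '/'.
    printf '%s\n' "$ports" | tr ',' '\n' |
        awk -F'[:/]' -v p="$svc_port" '$1 == p { print $2; exit }'
}

# Usage:
#   node_port_for '80:30080/TCP,10081:30081/TCP,10081:30081/UDP' 80
```

Combined with a node address from the server table, this gives the URL to open in a browser, for example http://10.36.192.181:30080.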

Username: admin

Password: Kuboard123

2. Open the web UI

3. Create a pod

# cat nginx.yaml

---
apiVersion: v1
kind: Pod
metadata:
  name: nginx
  namespace: xian2304
  labels:
    name: nginx
spec:
  containers:
  - name: nginx
    image: daocloud.io/library/nginx
    imagePullPolicy: IfNotPresent
    resources:
      limits:
        memory: "128Mi"
        cpu: "500m"
    ports:
    - containerPort: 80
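The manifest places the pod in the xian2304 namespace, and kubectl apply does not create a namespace on its own, so it has to exist before the manifest is applied. The sketch below creates it only when it is missing. The function name ensure_namespace is made up for this illustration.

```shell
# Create a namespace unless it already exists.
ensure_namespace() {
    local ns="${1:?usage: ensure_namespace <name>}"
    # `kubectl get` fails when the namespace is missing; only then create it.
    kubectl get namespace "$ns" >/dev/null 2>&1 || kubectl create namespace "$ns"
}

# Usage on the master:
#   ensure_namespace xian2304
```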

4. Apply the manifest

# Apply

kubectl apply -f nginx.yaml

5. View the result in the web UI
