Installing a High-Availability Kubernetes Cluster with kubeasz


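kubeasz is a tool for quickly deploying highly available Kubernetes clusters. It installs Kubernetes from binaries and automates the work with ansible-playbook. You can install everything with a one-step script, or follow the installation guide and install each component step by step.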

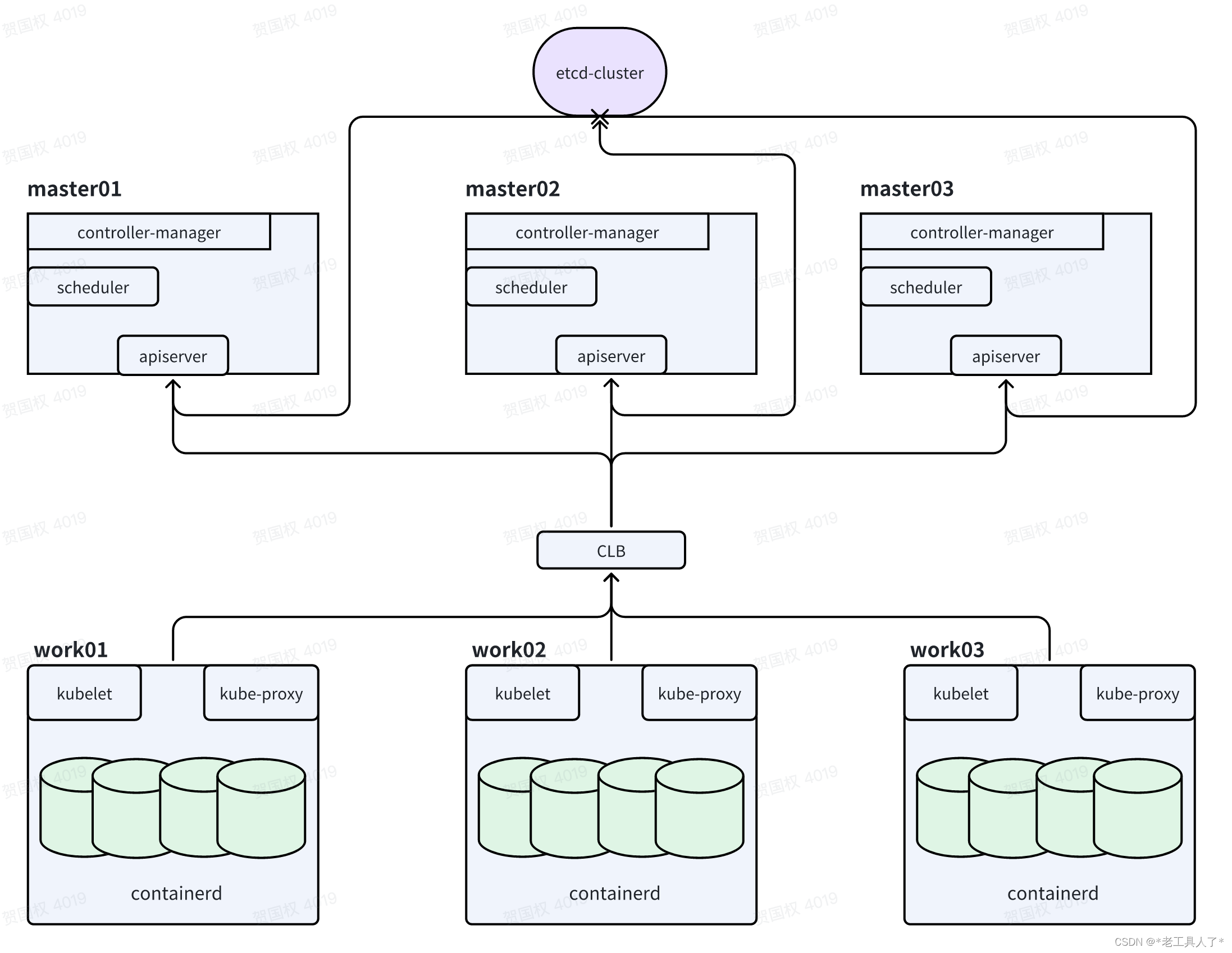

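I. Multi-master cluster planning

1. Multi-master cluster architecture

2. Base environment

Six machines, each with 4 CPU cores and 8 GB of RAM: 3 serve as master nodes and 3 as worker nodes.

Operating system: CentOS 7

Initialize the servers:

Upgrade the kernel: https://github.com/easzlab/kubeasz/blob/master/docs/guide/kernel_upgrade.md

kubeasz project page: https://github.com/easzlab/kubeasz/tree/master

Install Docker on master01: https://developer.aliyun.com/mirror/docker-ce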

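II. Deployment steps

1. Configure passwordless SSH login

Set up passwordless SSH login from master01 to every node, including master01 itself.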
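Below is a minimal sketch of this step. It assumes root SSH login is allowed on every node and uses an RSA key at ~/.ssh/id_rsa. The functions `setup_ssh_trust` and `run` and the `DRY_RUN` switch are hypothetical helpers, not part of kubeasz.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of kubeasz): copy master01's public key to every
# node, including master01 itself, so ansible can log in without a password.
# Assumes root SSH login; set DRY_RUN=1 to print the commands instead of running them.

run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "$*"            # only show what would be executed
  else
    "$@"
  fi
}

setup_ssh_trust() {
  local key="${HOME}/.ssh/id_rsa"
  # Create a passphrase-less key pair once, if none exists yet.
  if [[ ! -f "$key" ]]; then
    run mkdir -p -m 700 "${HOME}/.ssh"
    run ssh-keygen -t rsa -b 4096 -N "" -f "$key"
  fi
  local ip
  for ip in "$@"; do
    # Prompts for the node's root password the first time.
    run ssh-copy-id -i "${key}.pub" "root@${ip}"
  done
}
```

For example, with the node IPs from the hosts file later in this article: `setup_ssh_trust 172.16.185.244 172.16.185.245 172.16.185.246 172.16.185.247 172.16.185.248 172.16.185.249`.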

2. Download the ezdown tool on master01

# Choose the kubeasz release that matches the Kubernetes version you want to deploy

export release=3.5.0

wget https://github.com/easzlab/kubeasz/releases/download/${release}/ezdown

chmod +x ./ezdown

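3. Download the kubeasz code, binaries and default container images

# Inside mainland China

./ezdown -D

# Outside mainland China

#./ezdown -D -m standard

[Optional] Download extra container images (cilium, flannel, prometheus, etc.)

# Download only what you need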

./ezdown -X flannel

./ezdown -X prometheus

...

[Optional] Download offline system packages (for hosts that cannot use yum/apt repositories)

./ezdown -P

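After the script runs successfully, all files (kubeasz code, binaries and offline images) are placed under **/etc/kubeasz**:

- /etc/kubeasz contains the kubeasz release code for version ${release}
- /etc/kubeasz/bin contains the k8s/etcd/docker/cni binaries
- /etc/kubeasz/down contains the offline container images needed during cluster installation
- /etc/kubeasz/down/packages contains the basic system packages needed during cluster installation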
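You can confirm that `./ezdown -D` finished by checking the directories listed above with a short function. `check_kubeasz_layout` is a hypothetical helper. It only tests whether the paths exist and does not validate their contents.

```bash
#!/usr/bin/env bash
# Hypothetical check (not part of kubeasz): verify the directories that ezdown -D creates.
# Usage: check_kubeasz_layout [base_dir]   (base_dir defaults to /etc/kubeasz)
check_kubeasz_layout() {
  local base="${1:-/etc/kubeasz}"
  local missing=0 d
  for d in "$base" "$base/bin" "$base/down"; do
    if [[ -d "$d" ]]; then
      echo "ok      $d"
    else
      echo "missing $d"
      missing=1
    fi
  done
  # Assumption: down/packages is only expected after the optional ./ezdown -P step.
  if [[ ! -d "$base/down/packages" ]]; then
    echo "note    $base/down/packages not found (only needed for offline installs)"
  fi
  return "$missing"
}
```

The function returns non-zero if any required directory is missing, so it can gate the next step: `check_kubeasz_layout && ./ezdown -S`.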

4. Create a cluster configuration instance

# Run kubeasz in a container

./ezdown -S

# Create a new cluster named k8s-01

docker exec -it kubeasz ezctl new k8s-01

2021-01-19 10:48:23 DEBUG generate custom cluster files in /etc/kubeasz/clusters/k8s-01

2021-01-19 10:48:23 DEBUG set version of common plugins

2021-01-19 10:48:23 DEBUG cluster k8s-01: files successfully created.

2021-01-19 10:48:23 INFO next steps 1: to config '/etc/kubeasz/clusters/k8s-01/hosts'

2021-01-19 10:48:23 INFO next steps 2: to config '/etc/kubeasz/clusters/k8s-01/config.yml'

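Next, as prompted, edit '/etc/kubeasz/clusters/k8s-01/hosts' and '/etc/kubeasz/clusters/k8s-01/config.yml'. In the hosts file, set the nodes according to the plan above, along with the main cluster-level options. Settings for other cluster components can be changed in config.yml.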

5. Edit the configuration files

Part of the edited config.yml:

# Install coredns automatically

dns_install: "yes"

# Install metrics-server automatically

metricsserver_install: "yes"

metricsVer: "v0.5.2"

# Install dashboard automatically

dashboard_install: "no"

dashboardVer: "v2.7.0"

dashboardMetricsScraperVer: "v1.0.8"

# Install prometheus automatically

prom_install: "no"

prom_namespace: "monitor"

prom_chart_ver: "39.11.0"
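You can also flip these switches from the shell instead of an editor. The sketch below assumes each key sits on its own `key: "value"` line, as in the generated config.yml. `set_config_value` is a hypothetical helper that relies on GNU `sed -i`, the default on CentOS 7.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of kubeasz): set a top-level "key: value" entry in a
# cluster's config.yml, e.g. to enable the dashboard add-on.
# Assumes the key already exists on its own line and the value contains no '|'.
set_config_value() {
  local key="$1" value="$2" file="$3"
  if ! grep -q "^${key}:" "$file"; then
    echo "key '${key}' not found in ${file}" >&2
    return 1
  fi
  # Replace the whole line, keeping the value double-quoted like the original file.
  sed -i "s|^${key}:.*|${key}: \"${value}\"|" "$file"
}
```

For example: `set_config_value dashboard_install yes /etc/kubeasz/clusters/k8s-01/config.yml`.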

Example of the edited hosts file:

cat hosts

# 'etcd' cluster should have odd member(s) (1,3,5,...)

[etcd]

172.16.185.245

172.16.185.247

172.16.185.248

# master node(s), set unique 'k8s_nodename' for each node

# CAUTION: 'k8s_nodename' must consist of lower case alphanumeric characters, '-' or '.',

# and must start and end with an alphanumeric character

[kube_master]

172.16.185.245 k8s_nodename='master-01'

172.16.185.247 k8s_nodename='master-02'

172.16.185.248 k8s_nodename='master-03'

# work node(s), set unique 'k8s_nodename' for each node

# CAUTION: 'k8s_nodename' must consist of lower case alphanumeric characters, '-' or '.',

# and must start and end with an alphanumeric character

[kube_node]

172.16.185.249 k8s_nodename='worker-03'

172.16.185.244 k8s_nodename='worker-01'

172.16.185.246 k8s_nodename='worker-02'

# [optional] harbor server, a private docker registry

# 'NEW_INSTALL': 'true' to install a harbor server; 'false' to integrate with existed one

[harbor]

172.16.185.244 NEW_INSTALL=false

# [optional] loadbalance for accessing k8s from outside

[ex_lb]

#192.168.1.6 LB_ROLE=backup EX_APISERVER_VIP=172.16.26.45 EX_APISERVER_PORT=6443

#192.168.1.7 LB_ROLE=master EX_APISERVER_VIP=172.16.26.45 EX_APISERVER_PORT=6443

# [optional] ntp server for the cluster

[chrony]

#192.168.1.1

[all:vars]

# --------- Main Variables ---------------

# Secure port for apiservers

SECURE_PORT="6443"

# Cluster container-runtime supported: docker, containerd

# if k8s version >= 1.24, docker is not supported

CONTAINER_RUNTIME="containerd"

# Kubernetes 1.24 and later no longer support docker; use containerd as the runtime

# Network plugins supported: calico, flannel, kube-router, cilium, kube-ovn

CLUSTER_NETWORK="calico"

# calico generally has lower network overhead than flannel

# Service proxy mode of kube-proxy: 'iptables' or 'ipvs'

PROXY_MODE="ipvs"

# ipvs is better suited to large clusters

# K8S Service CIDR, not overlap with node(host) networking

SERVICE_CIDR="10.68.0.0/16"

# Cluster CIDR (Pod CIDR), not overlap with node(host) networking

CLUSTER_CIDR="172.20.0.0/16"

# NodePort Range

NODE_PORT_RANGE="20000-32767"

# Cluster DNS Domain

CLUSTER_DNS_DOMAIN="cluster.local"

# -------- Additional Variables (don't change the default value right now) ---

# Binaries Directory

bin_dir="/opt/kube/bin"

# Deploy Directory (kubeasz workspace)

base_dir="/etc/kubeasz"

# Directory for a specific cluster

cluster_dir="{{ base_dir }}/clusters/k8s-01"

# CA and other components cert/key Directory

ca_dir="/etc/kubernetes/ssl"

# Default 'k8s_nodename' is empty

k8s_nodename=''
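Before installing, you can check the two rules stated in this file's comments with a small script: the etcd group needs an odd number of members, and every `k8s_nodename` must follow the naming rule. `check_hosts_file` is a hypothetical helper. It assumes one host per line and no inline comments after host entries.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check (not part of kubeasz) for a kubeasz hosts file:
#  - the [etcd] group must have an odd number of members (1, 3, 5, ...)
#  - every k8s_nodename must consist of lower-case alphanumerics, '-' or '.',
#    and start and end with an alphanumeric character
check_hosts_file() {
  local file="$1" rc=0 etcd_count name
  local re='^[[:lower:][:digit:]]([[:lower:][:digit:].-]*[[:lower:][:digit:]])?$'
  # Count non-comment, non-empty lines inside the [etcd] section.
  etcd_count=$(awk '
    /^\[/ { in_etcd = ($0 == "[etcd]"); next }
    in_etcd && $0 !~ /^[[:space:]]*(#|$)/ { n++ }
    END { print n + 0 }' "$file")
  if (( etcd_count % 2 == 0 )); then
    echo "etcd members: ${etcd_count} (must be odd)"
    rc=1
  fi
  # Extract every k8s_nodename='...' value from non-comment lines and validate it.
  while read -r name; do
    [[ -z "$name" ]] && continue    # the empty default k8s_nodename='' is allowed
    if ! [[ $name =~ $re ]]; then
      echo "invalid k8s_nodename: ${name}"
      rc=1
    fi
  done < <(grep -v '^[[:space:]]*#' "$file" | grep -o "k8s_nodename='[^']*'" |
           sed "s/^k8s_nodename='//; s/'\$//")
  return "$rc"
}
```

For example: `check_hosts_file /etc/kubeasz/clusters/k8s-01/hosts && echo "hosts file looks OK"`.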

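Note: these are the settings in the hosts file that need to be changed:

CONTAINER_RUNTIME="containerd"

# Kubernetes 1.24 and later no longer support docker; containerd is strongly recommended as the runtime

PROXY_MODE="ipvs"

# ipvs performs better in large clusters

# These two ranges only need to avoid overlapping the hosts' IP network

SERVICE_CIDR="10.68.0.0/16"

CLUSTER_CIDR="172.20.0.0/16"

# Range of ports (NodePort) that can be exposed outside the cluster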

NODE_PORT_RANGE="20000-32767"
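You can check whether SERVICE_CIDR, CLUSTER_CIDR and the host network overlap with plain bash arithmetic. The helper names below are hypothetical, and the sketch handles IPv4 only.

```bash
#!/usr/bin/env bash
# Hypothetical helpers (not part of kubeasz): check whether two IPv4 CIDR blocks overlap.

# Convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Print "start end" (inclusive, as integers) of a CIDR block such as 10.68.0.0/16.
cidr_range() {
  local ip="${1%/*}" prefix="${1#*/}" base mask
  base=$(ip_to_int "$ip")
  mask=$(( prefix == 0 ? 0 : (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  echo "$(( base & mask )) $(( (base & mask) | (~mask & 0xFFFFFFFF) ))"
}

# Return 0 (true) if the two CIDR blocks share at least one address.
cidrs_overlap() {
  local s1 e1 s2 e2
  read -r s1 e1 <<< "$(cidr_range "$1")"
  read -r s2 e2 <<< "$(cidr_range "$2")"
  (( s1 <= e2 && s2 <= e1 ))
}
```

This article does not state the host netmask. Assuming the nodes sit in 172.16.185.0/24, you could run `cidrs_overlap 172.16.185.0/24 172.20.0.0/16 || echo "no overlap"`, and do the same for SERVICE_CIDR.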

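6. Start the installation

# Using an alias is recommended; ~/.bashrc should contain: alias dk='docker exec -it kubeasz'

source ~/.bashrc

# One-step installation, equivalent to running docker exec -it kubeasz ezctl setup k8s-01 all

dk ezctl setup k8s-01 all

# Or install step by step; run dk ezctl help setup for the step-by-step help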

# dk ezctl setup k8s-01 01

# dk ezctl setup k8s-01 02

# dk ezctl setup k8s-01 03

# dk ezctl setup k8s-01 04

...
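If `setup ... all` fails partway, running the steps one at a time makes the failing step obvious. The sketch below assumes the numbered steps 01 to 07 are the ones `all` runs; confirm this with `dk ezctl help setup` for your kubeasz version. `setup_step_by_step` is a hypothetical wrapper.

```bash
#!/usr/bin/env bash
# Hypothetical wrapper (not part of kubeasz): run the setup steps one at a time and
# stop at the first failure. Assumption: steps 01-07 are what "setup <cluster> all"
# runs; check "dk ezctl help setup" in your kubeasz version.
setup_step_by_step() {
  local cluster="$1" step
  for step in 01 02 03 04 05 06 07; do
    echo "==> ezctl setup ${cluster} ${step}"
    # -i without -t: no TTY is available when this runs from a script.
    if ! docker exec -i kubeasz ezctl setup "$cluster" "$step"; then
      echo "step ${step} failed; fix it and rerun from this step" >&2
      return 1
    fi
  done
}
```

For example: `setup_step_by_step k8s-01`.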

########

#The coredns add-on runs 1 replica by default; raising it to 2 avoids a single point of failure and improves cluster availability.

#######
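One way to raise the replica count after installation is shown below. It assumes the add-on runs as the Deployment `coredns` in the `kube-system` namespace, which you can verify with `kubectl -n kube-system get deploy`. `scale_coredns` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of kubeasz): scale CoreDNS and wait for the rollout.
# Assumes the Deployment is named "coredns" in the kube-system namespace.
scale_coredns() {
  local replicas="${1:-2}"
  kubectl -n kube-system scale deployment coredns --replicas="$replicas" &&
    kubectl -n kube-system rollout status deployment coredns --timeout=120s
}
```

For example: `scale_coredns 2`.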

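7. Verify the installation

$ source ~/.bashrc

$ kubectl version # check the cluster version

$ kubectl get node # check that nodes are Ready

$ kubectl get pod -A # check pod status; the network plugin, coredns, metrics-server, etc. are installed by default

$ kubectl get svc -A # check service status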
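The same checks can be scripted so they fail loudly, for example in CI. In the sketch below, `verify_cluster` is a hypothetical helper built on `kubectl wait` and `kubectl get pod -A`.

```bash
#!/usr/bin/env bash
# Hypothetical check (not part of kubeasz): wait until every node is Ready, then
# print any pod whose status is neither Running nor Completed.
verify_cluster() {
  local timeout="${1:-300s}"
  kubectl wait --for=condition=Ready node --all --timeout="$timeout" || return 1
  # With -A and --no-headers, the 4th column is STATUS.
  kubectl get pod -A --no-headers |
    awk '$4 != "Running" && $4 != "Completed" { print; bad = 1 } END { exit bad }'
}
```

The function returns non-zero if a node does not become Ready in time or if any pod is unhealthy.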

8. ezctl command help

The ezctl command can add and remove nodes (etcd, master and worker) and perform other cluster operations:

./ezctl --help

Usage: ezctl COMMAND [args]

-------------------------------------------------------------------------------------

Cluster setups:

list to list all of the managed clusters

checkout <cluster> to switch default kubeconfig of the cluster

new <cluster> to start a new k8s deploy with name 'cluster'

setup <cluster> <step> to setup a cluster, also supporting a step-by-step way

start <cluster> to start all of the k8s services stopped by 'ezctl stop'

stop <cluster> to stop all of the k8s services temporarily

upgrade <cluster> to upgrade the k8s cluster

destroy <cluster> to destroy the k8s cluster

backup <cluster> to backup the cluster state (etcd snapshot)

restore <cluster> to restore the cluster state from backups

start-aio to quickly setup an all-in-one cluster with default settings

Cluster ops:

add-etcd <cluster> <ip> to add a etcd-node to the etcd cluster

add-master <cluster> <ip> to add a master node to the k8s cluster

add-node <cluster> <ip> to add a work node to the k8s cluster

del-etcd <cluster> <ip> to delete a etcd-node from the etcd cluster

del-master <cluster> <ip> to delete a master node from the k8s cluster

del-node <cluster> <ip> to delete a work node from the k8s cluster

Extra operation:

kca-renew <cluster> to force renew CA certs and all the other certs (with caution)

kcfg-adm <cluster> <args> to manage client kubeconfig of the k8s cluster

Use "ezctl help <command>" for more information about a given command.
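To add several workers at once, you can call `add-node` in a loop. In the sketch below, `add_workers` is a hypothetical wrapper and the IP in the usage example is a placeholder. Each new node must first allow passwordless SSH from master01.

```bash
#!/usr/bin/env bash
# Hypothetical wrapper (not part of kubeasz): add worker nodes one by one with
# "ezctl add-node <cluster> <ip>", stopping at the first failure.
add_workers() {
  local cluster="$1"; shift
  local ip
  for ip in "$@"; do
    echo "==> adding node ${ip} to ${cluster}"
    docker exec -i kubeasz ezctl add-node "$cluster" "$ip" || return 1
  done
}
```

For example, with a placeholder IP: `add_workers k8s-01 172.16.185.250`.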

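III. Cleanup

Feel free to experiment with the K8s dev/test environment created by the steps above. When you hit errors, try to resolve them by checking logs, searching online or filing issues. You can also destroy the cluster and recreate it.

On the host, clean up as follows:

- Destroy the cluster ("default" is the name of the all-in-one cluster; for the multi-master cluster created above, use k8s-01):

docker exec -it kubeasz ezctl destroy default

- Reboot the nodes to make sure leftover virtual NICs, routes and similar state are cleared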
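The two cleanup steps can be combined as shown below. `cleanup_cluster` and the `DRY_RUN` switch are hypothetical. List master01's own IP last so the loop is not cut off by master01's own reboot. `ezctl destroy` may ask for confirmation, and `-i` keeps stdin attached so you can answer.

```bash
#!/usr/bin/env bash
# Hypothetical cleanup sketch (not part of kubeasz): destroy a cluster, then reboot
# every node so leftover virtual NICs and routes disappear.
# Set DRY_RUN=1 to print the commands instead of running them.

run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "$*"
  else
    "$@"
  fi
}

cleanup_cluster() {
  local cluster="$1"; shift
  run docker exec -i kubeasz ezctl destroy "$cluster" || return 1
  local ip
  for ip in "$@"; do
    # The SSH session usually drops when the node goes down, so ignore its exit code.
    run ssh "root@${ip}" reboot || true
  done
}
```

For example: `DRY_RUN=1 cleanup_cluster k8s-01 172.16.185.244 172.16.185.245` to preview the commands first.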

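IV. Quick deployment of a single-node (all-in-one) cluster

Upgrade the kernel

See: https://github.com/easzlab/kubeasz/blob/master/docs/guide/kernel_upgrade.md

Download files

Download the helper script ezdown; this example uses kubeasz 3.5.0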

export release=3.5.0

wget https://github.com/easzlab/kubeasz/releases/download/${release}/ezdown

chmod +x ./ezdown

Download the kubeasz code, binaries and default container images

# Inside mainland China

./ezdown -D

# Outside mainland China

#./ezdown -D -m standard

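After the script runs successfully, all files (kubeasz code, binaries and offline images) are placed under /etc/kubeasz:

- /etc/kubeasz contains the kubeasz release code for version ${release}
- /etc/kubeasz/bin contains the k8s/etcd/docker/cni binaries
- /etc/kubeasz/down contains the offline container images needed during cluster installation
- /etc/kubeasz/down/packages contains the basic system packages needed during cluster installation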

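Install the cluster

- Run kubeasz in a container

./ezdown -S

- Install an all-in-one (aio) cluster with the default configuration

docker exec -it kubeasz ezctl start-aio

# If the installation fails, check the logs and fix the problem, then reinstall the aio cluster with: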

# docker exec -it kubeasz ezctl setup default all
