k8s: Deploying a single-master Kubernetes cluster with kubeadm (v1.23.13)

Deploying a single-master k8s cluster

1. Planning

1.1 Gathering information

Official deployment documentation:

https://v1-23.docs.kubernetes.io/zh/docs/setup/production-environment/tools/kubeadm/install-kubeadm/

Compatible Docker versions:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.23.md#dependencies-20

Compatible Calico versions:

https://docs.tigera.io/archive/v3.21/getting-started/kubernetes/requirements#kubernetes-requirements

Compatible dashboard versions:

https://github.com/kubernetes/dashboard/releases

Key information collected:

docker: github.com/docker/docker: v20.10.2+incompatible → v20.10.7+incompatible

calico: We test Calico v3.21 against the following Kubernetes versions.

- v1.20
- v1.21
- v1.22

1.2 Cluster version plan

| Software | Version |
|---|---|
| kubernetes | 1.23.13 |
| docker-ce | 20.10.* |
| Operating system | centos7.9.2009_minimal |

1.3 服务器规划

| 角色 | IP |

|---|---|

| k8s-master01 | 192.168.56.51 |

| k8s-node01 | 192.168.56.54 |

| k8s-node02 | 192.168.56.55 |

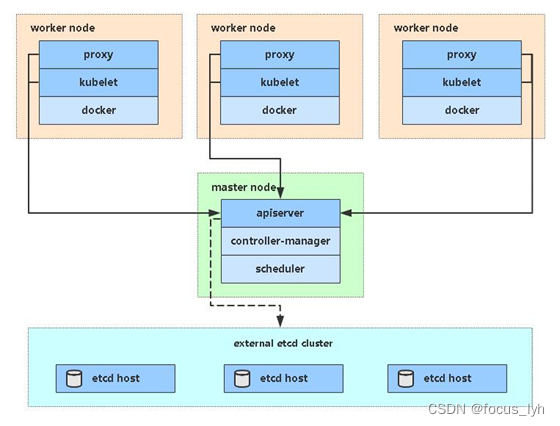

1.4 架构图

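2. Deployment

2.1 System initialization

# Kernel upgrade
# Download the kernel packages (run from the home directory, where the install step expects them)
wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm
wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm

# Install the new kernel
yum -y localinstall ~/kernel-ml*

# Regenerate the GRUB boot menu from the kernels found in /boot
grub2-mkconfig -o /etc/grub2.cfg

# Set the default boot kernel
grub2-set-default 'CentOS Linux (4.19.12-1.el7.elrepo.x86_64) 7 (Core)'

# List the default boot entry to confirm
grub2-editenv list

# Reboot to apply
reboot

# Verify the running kernel version
uname -r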

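# Disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux (immediately, and permanently from the next boot)
setenforce 0
sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

# Disable swap (immediately, and permanently by commenting out the fstab entry)
swapoff -a
sed -ri 's/.*swap.*/#&/' /etc/fstab

# Set the hostname according to the server plan
hostnamectl set-hostname <hostname>

# Add hosts entries
cat >> /etc/hosts << EOF
192.168.56.51 k8s-master01
192.168.56.54 k8s-node01
192.168.56.55 k8s-node02
EOF

# Time synchronization
yum install ntpdate -y
ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
echo 'Asia/Shanghai' >/etc/timezone
ntpdate time2.aliyun.com

# Run crontab -e and add the entry below to resync every 5 minutes
crontab -e
*/5 * * * * ntpdate time2.aliyun.com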

# Install common tools
yum -y install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git

# Configure resource limits (a soft limit must not exceed its hard limit)
ulimit -SHn 65535
cat >> /etc/security/limits.conf <<EOF
* soft nofile 65536
* hard nofile 131072
* soft nproc 655350
* hard nproc 655350
* soft memlock unlimited
* hard memlock unlimited
EOF

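# ipvs
yum -y install ipvsadm ipset sysstat conntrack libseccomp

# Kernel modules to load at boot.
# On kernel 4.19 and later, nf_conntrack_ipv4 has been merged into nf_conntrack.
# br_netfilter provides the net.bridge.* sysctl keys set in the next step.
cat >> /etc/modules-load.d/ipvs.conf <<EOF
ip_vs
ip_vs_lc
ip_vs_wlc
ip_vs_rr
ip_vs_wrr
ip_vs_lblc
ip_vs_lblcr
ip_vs_dh
ip_vs_sh
ip_vs_fo
ip_vs_nq
ip_vs_sed
ip_vs_ftp
nf_conntrack
br_netfilter
ip_tables
ip_set
xt_set
ipt_set
ipt_rpfilter
ipt_REJECT
ipip
EOF

systemctl enable --now systemd-modules-load.service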

# Set the kernel parameters required by the k8s cluster
cat <<EOF > /etc/sysctl.d/k8s.conf
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
fs.may_detach_mounts = 1
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_watches=89100
fs.file-max=52706963
fs.nr_open=52706963
net.netfilter.nf_conntrack_max=2310720
net.ipv4.tcp_keepalive_time = 600
net.ipv4.tcp_keepalive_probes = 3
net.ipv4.tcp_keepalive_intvl =15
net.ipv4.tcp_max_tw_buckets = 36000
net.ipv4.tcp_tw_reuse = 1
net.ipv4.tcp_max_orphans = 327680
net.ipv4.tcp_orphan_retries = 3
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.ipv4.ip_conntrack_max = 65536
net.ipv4.tcp_timestamps = 0
net.core.somaxconn = 16384
EOF

# Apply the settings
sysctl --system
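Before moving on, it can help to confirm that the preparation steps took effect. The following is a minimal sketch rather than part of the original procedure: the helper names `swap_is_off` and `sysctl_conf_value` are hypothetical, and the only paths assumed are `/proc/swaps` and the `/etc/sysctl.d/k8s.conf` file written above.

```shell
#!/bin/sh
# Hypothetical helpers for sanity-checking the node preparation.

# Succeeds when the given /proc/swaps-style file lists no active swap area.
swap_is_off() {
  # Line 1 is the column header; any later non-empty line is an active swap area.
  ! awk 'NR > 1 && NF > 0' "${1:-/proc/swaps}" | grep -q .
}

# Prints the value assigned to a key in a sysctl.d-style file (last assignment wins).
sysctl_conf_value() {
  awk -F= -v key="$1" '
    { name = $1; gsub(/[ \t]/, "", name) }
    name == key { value = $2; gsub(/[ \t]/, "", value) }
    END { if (value != "") print value }
  ' "$2"
}

# Example usage on a prepared node:
#   swap_is_off && echo "swap is off"
#   sysctl_conf_value net.ipv4.ip_forward /etc/sysctl.d/k8s.conf
```

Both helpers only read files, so they can be run repeatedly on any node without side effects.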

2.2 Installing docker

Note: for other k8s versions, check which docker version is compatible (see 1.1).

# Configure the yum repository
curl https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -o /etc/yum.repos.d/docker-ce.repo
yum clean all

# Install docker
yum list docker-ce --showduplicates
yum -y install docker-ce-20.10.*

# Configure a registry mirror and set the cgroup driver to systemd
mkdir -p /etc/docker
cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://pekwusel.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

# Start docker
systemctl daemon-reload
systemctl restart docker
systemctl enable docker
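Since kubeadm v1.22 the kubelet defaults to the systemd cgroup driver, so Docker needs to use the same one. A small sketch for checking the file written above; the function name `daemon_json_uses_systemd` is hypothetical, and the check is a plain text match rather than a full JSON parse.

```shell
#!/bin/sh
# Succeeds when the given daemon.json sets the systemd cgroup driver.
# The path defaults to the file created in the step above.
daemon_json_uses_systemd() {
  grep -qF '"native.cgroupdriver=systemd"' "${1:-/etc/docker/daemon.json}"
}

# On a running node, the effective driver can also be read from Docker itself:
#   docker info --format '{{.CgroupDriver}}'
```

If the two disagree, Docker was most likely not restarted after daemon.json was edited.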

2.2 Installing kubeadm, kubelet and kubectl

# Configure the yum repository
cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# List the available kubeadm, kubelet and kubectl versions
yum list kubeadm --showduplicates
yum list kubelet --showduplicates
yum list kubectl --showduplicates

# Install v1.23.13 as planned
yum install -y kubelet-1.23.13 kubeadm-1.23.13 kubectl-1.23.13
systemctl enable kubelet && systemctl start kubelet

2.3 Initialization

2.3.1 Listing the required images

kubeadm config images list \
  --kubernetes-version v1.23.13 \
  --image-repository registry.aliyuncs.com/google_containers

2.3.2 Pulling the images in advance

kubeadm config images pull \
  --kubernetes-version v1.23.13 \
  --image-repository registry.aliyuncs.com/google_containers

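2.3.3 Initializing the first master node

Command-line method

kubeadm init \
  --apiserver-advertise-address=192.168.56.51 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.13 \
  --pod-network-cidr=10.101.0.0/16 \
  --service-cidr=10.102.0.0/16 \
  --ignore-preflight-errors=all \
  | tee -a k8s-init.log

--apiserver-advertise-address: the address the cluster's API server advertises (the master's IP)

--image-repository: the default registry k8s.gcr.io is not reachable from mainland China, so the Aliyun mirror registry is specified here

--kubernetes-version: the K8s version, matching the packages installed above

--service-cidr: the cluster-internal virtual network used by Services, the unified entry point for reaching pods

--pod-network-cidr: the pod network; it must match the CIDR in the CNI manifest deployed below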
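The pod and service ranges should not overlap each other or the network the nodes sit on. The sketch below checks two IPv4 CIDRs for overlap using only shell arithmetic; the function names are hypothetical, and the /24 prefix on the node network in the usage comment is an assumption, since the article only lists individual node IPs.

```shell
#!/bin/sh
# Converts a dotted IPv4 address to an integer (runs in a subshell so IFS is untouched).
ip_to_int() (
  IFS=.
  set -- $1   # split the address on dots into $1..$4
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# Prints "first last": the lowest and highest address of a CIDR, as integers.
cidr_range() (
  addr=$(ip_to_int "${1%/*}")
  prefix=${1#*/}
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  first=$(( addr & mask ))
  last=$(( first | (mask ^ 0xFFFFFFFF) ))
  echo "$first $last"
)

# Succeeds when the two CIDRs share at least one address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  # $1,$2 = first/last of the first range; $3,$4 = first/last of the second.
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Example usage (the node network prefix is an assumption):
#   cidrs_overlap 10.101.0.0/16 10.102.0.0/16 || echo "pod and service ranges are disjoint"
#   cidrs_overlap 10.101.0.0/16 192.168.56.0/24 || echo "pod range is clear of the node network"
```

The prefix length matters as much as the base address: a /12 written with the base 10.102.0.0 actually covers 10.96.0.0 through 10.111.255.255, which contains the whole 10.101.0.0/16 pod range, whereas a /16 on the same base does not.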

Configuration-file method

cat >kubeadm.conf <<EOF
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.23.13
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.101.0.0/16
  serviceSubnet: 10.102.0.0/16
EOF

kubeadm init --config kubeadm.conf --ignore-preflight-errors=all | tee -a k8s-init.log

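2.4 Cluster management

2.4.1 Configuring kubectl

mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config

2.4.2 Adding worker nodes

Prerequisite: every step before section 2.3 of this article has already been carried out on the new node.

To add a node to the cluster, run on that node the kubeadm join command printed by kubeadm init (the token and hash below are examples; use the values from your own output):

kubeadm join 192.168.56.51:6443 --token 7gqt13.kncw9hg5085iwclx \
  --discovery-token-ca-cert-hash sha256:66fbfcf18649a5841474c2dc4b9ff90c02fc05de0798ed690e1754437be35a01

The default token is valid for 24 hours and can no longer be used once it expires. In that case create a new token; the following command generates one and prints the full join command:

kubeadm token create --print-join-command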
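While the original token is still valid, the join command can also be recovered from the k8s-init.log file that the init step wrote with tee. A rough sketch, assuming the log contains a line starting with `kubeadm join` that is continued with trailing backslashes, as in the example above; the function name `extract_join_command` is hypothetical.

```shell
#!/bin/sh
# Prints the first "kubeadm join ..." command found in a kubeadm init log,
# with backslash-continued lines folded into a single line.
extract_join_command() {
  awk '
    /^[ \t]*kubeadm join/ { capture = 1 }
    capture {
      line = $0
      cont = sub(/\\[ \t]*$/, "", line)      # strip a trailing backslash, if any
      gsub(/^[ \t]+|[ \t]+$/, "", line)      # trim surrounding whitespace
      cmd = (cmd == "" ? line : cmd " " line)
      if (!cont) { print cmd; exit }
    }
  ' "$1"
}

# Example usage on the master:
#   extract_join_command k8s-init.log
```

The printed line can be pasted on each worker as-is.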

2.5 Deploying the container network (CNI)

Note: for other k8s versions, check which calico version is compatible (see 1.1).

Calico is a pure layer-3 data center networking solution and currently one of the mainstream network options for Kubernetes.

# Download and install the Tigera operator
wget https://docs.projectcalico.org/archive/v3.21/manifests/tigera-operator.yaml --no-check-certificate
kubectl apply -f tigera-operator.yaml

# Download the custom resources and set the pod network to the value passed to kubeadm init via --pod-network-cidr
wget https://docs.projectcalico.org/archive/v3.21/manifests/custom-resources.yaml --no-check-certificate
sed -i 's# cidr: 192.168.0.0/16# cidr: 10.101.0.0/16#g' custom-resources.yaml

# Deploy calico
kubectl apply -f custom-resources.yaml

# Confirm that all calico pods are running (the operator creates them in the calico-system namespace)
kubectl get pods -n calico-system

# Confirm that all nodes are Ready
kubectl get node
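Nodes usually take a little while to turn Ready after the CNI is applied. As a small sketch, the filter below lists the nodes that are not Ready yet; the function name `not_ready_nodes` is hypothetical, and it assumes the usual column layout of `kubectl get nodes --no-headers` (NAME first, STATUS second).

```shell
#!/bin/sh
# Reads `kubectl get nodes --no-headers` output on stdin and prints the names
# of nodes whose STATUS does not start with "Ready".
not_ready_nodes() {
  awk '$2 !~ /^Ready(,|$)/ { print $1 }'
}

# Example usage on the master:
#   kubectl get nodes --no-headers | not_ready_nodes
# kubectl can also block until every node reports Ready:
#   kubectl wait --for=condition=Ready nodes --all --timeout=300s
```

An empty result means every node has reported Ready.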

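2.5 Verifying the cluster

kubectl create deployment nginx --image=nginx
kubectl expose deployment nginx --port=80 --type=NodePort
kubectl get pod,svc

Access URL: http://nodeip:port, where nodeip is the IP of any node and port is the NodePort shown for the nginx service.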
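The NodePort is the number after the colon in the PORT(S) column of `kubectl get svc`, for example 80:31234/TCP. A tiny sketch for pulling it out of such a value; the function name `node_port_from_ports` and the port 31234 are illustrative only.

```shell
#!/bin/sh
# Extracts the NodePort from a PORT(S) value such as "80:31234/TCP".
node_port_from_ports() {
  ports=${1#*:}         # drop the service port and the colon
  echo "${ports%%/*}"   # drop the protocol suffix
}

# Example usage on the master (PORT(S) is the fifth column of `kubectl get svc`):
#   port=$(node_port_from_ports "$(kubectl get svc nginx --no-headers | awk '{print $5}')")
#   curl -I "http://192.168.56.54:${port}"
```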

2.6 Installing the dashboard

Dashboard is the official web UI and can be used for basic management of k8s resources.

# Download the deployment manifest
wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml

# By default the dashboard is reachable only from inside the cluster.
# Edit the Service in recommended.yaml to type NodePort to expose it externally:
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30001
  selector:
    k8s-app: kubernetes-dashboard
  type: NodePort

# Install the dashboard
kubectl apply -f recommended.yaml
kubectl get pods -n kubernetes-dashboard

Create a service account and bind it to the built-in cluster-admin cluster role:

# Create the service account
kubectl create serviceaccount dashboard-admin -n kube-system

# Grant it cluster-admin
kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

# Get the account's token
kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Access URL: https://nodeip:30001. Log in to the dashboard with the token printed above.
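The describe output contains several fields besides the token. A small sketch for printing only the token value so it can be pasted into the login form; the function name `token_from_describe` is hypothetical, and it assumes the output has a line of the form `token: <value>`.

```shell
#!/bin/sh
# Reads `kubectl describe secret` output on stdin and prints only the token value.
token_from_describe() {
  awk '$1 == "token:" { print $2 }'
}

# Example usage on the master:
#   kubectl describe secrets -n kube-system \
#     $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}') | token_from_describe
```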
